- Paper (PDF)

- Project page (paper, video, etc.)

- Paper (PDF)

- Project page (paper, video, etc.)

- Paper (PDF)

- Project page (paper, video, etc.)

![[Zheng and James 2012] Energy-based Self-Collision Culling for Arbitrary Mesh Deformations](pics/thumb_12ESCC.jpg)

- Paper (PDF)

- Project page (paper, video, etc.)

![[Chadwick et al. 2012] Precomputed Acceleration Noise for Improved Rigid-Body Sound](pics/thumb_12PAN.jpg)

- Paper (PDF)

- Project page (paper, video, etc.)

![[An et al. 2012] Motion-driven Concatenative Synthesis of Cloth Sounds](pics/thumb_12ClothSound.png)

- Paper (PDF)

- Project page (paper, video, data, etc.)

![[Yuksel et al. 2012] Stitch Meshes for Modeling Knitted Clothing with Yarn-level Detail](pics/thumb_12StitchMesh.jpg)

- Paper (PDF)

- Project page (paper, video, etc.)

- YouTube video

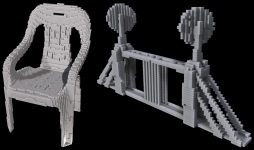

![[Bächer et al. 2012] Fabricating Articulated Characters from Skinned Meshes](pics/thumb_12FabArt.jpg)

- Paper (PDF)

- Project page (paper, video, etc.)

- Video (Quicktime (hi-res), YouTube, Vimeo)

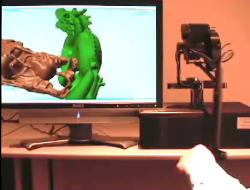

![[Chadwick and James 2011] Animating Fire with Sound](pics/thumb_fireDragon.jpg)

- Paper (PDF)

- Project page (paper, video, source code, etc.)

![From [Zheng and James 2011] Toward High-Quality Modal Contact Sound](pics/thumb_MC2011.jpg)

- Paper (PDF)

- Project page (paper, video, etc.)

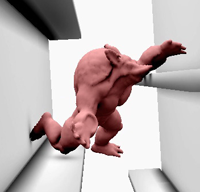

Theodore Kim and Doug L. James, Physics-based Character Skinning using Multi-Domain Subspace Deformations, In ACM SIGGRAPH / Eurographics Symposium on Computer Animation, August 2011. (Best paper award)

ABSTRACT: We propose a domain-decomposition method to simulate articulated deformable characters entirely within a subspace framework. The method supports quasistatic and dynamic deformations, nonlinear kinematics and materials, and can achieve interactive time-stepping rates. To avoid artificial rigidity, or "locking," associated with coupling low-rank domain models together with hard constraints, we employ penalty-based coupling forces. The multi-domain subspace integrator can simulate deformations efficiently, and exploits efficient subspace-only evaluation of constraint forces between rotated domains using the so-called Fast Sandwich Transform (FST). Examples are presented for articulated characters with quasistatic and dynamic deformations, and interactive performance with hundreds of fully coupled modes. Using our method, we have observed speedups of between three and four orders of magnitude over full-rank, unreduced simulations.

- Paper (PDF)

- Project page (paper, video, etc.)

- Video (YouTube)

- Paper (PDF)

- Video (MP4)

- Project page

- Paper (PDF)

- Video

- Project page

Jeffrey Chadwick, Steven An, and Doug L. James, Harmonic Shells: A Practical Nonlinear Sound Model for Near-Rigid Thin Shells, ACM Transactions on Graphics (SIGGRAPH ASIA Conference Proceedings), 28(5), December 2009, pp. 119:1-119:10.

ABSTRACT: We propose a procedural method for synthesizing realistic sounds due to nonlinear thin-shell vibrations. We use linear modal analysis to generate a small-deformation displacement basis, then couple the modes together using nonlinear thin-shell forces. To enable audio-rate time-stepping of mode amplitudes with mesh-independent cost, we propose a reduced-order dynamics model based on a thin-shell cubature scheme. Limitations such as mode locking and pitch glide are addressed. To support fast evaluation of mid-frequency mode-based sound radiation for detailed meshes, we propose far-field acoustic transfer maps (FFAT maps) which can be precomputed using state-of-the-art fast Helmholtz multipole methods. Familiar examples are presented including rumbling trash cans and plastic bottles, crashing cymbals, and noisy sheet metal objects, each with increased richness over linear modal sound models.

- Paper (PDF)

- Project page

YouTube Video


Theodore Kim and Doug L. James, Skipping Steps in Deformable Simulation with Online Model Reduction, ACM Transactions on Graphics (SIGGRAPH ASIA Conference Proceedings), 28(5), December 2009, pp. 123:1-123:9.

ABSTRACT: Finite element simulations of nonlinear deformable models are computationally costly, routinely taking hours or days to compute the motion of detailed meshes. Dimensional model reduction can make simulations orders of magnitude faster, but is unsuitable for general deformable body simulations because it requires expensive precomputations, and it can suppress motion that lies outside the span of a pre-specified low-rank basis. We present an online model reduction method that does not have these limitations. In lieu of precomputation, we analyze the motion of the full model as the simulation progresses, incrementally building a reduced-order nonlinear model, and detecting when our reduced model is capable of performing the next timestep. For these subspace steps, full-model computation is “skipped” and replaced with a very fast (on the order of milliseconds) reduced order step. We present algorithms for both dynamic and quasistatic simulations, and a “throttle” parameter that allows a user to trade off between faster, approximate previews and slower, more conservative results. For detailed meshes undergoing low-rank motion, we have observed speedups of over an order of magnitude with our method.

![Pouring water with thousands of acoustic bubbles [Zheng and James 2009]](http://www.cs.cornell.edu/%7Edjames/research/pics/thumb_harmonicFluids.jpg)

Changxi Zheng and Doug L. James, Harmonic Fluids, ACM Transactions on Graphics (SIGGRAPH 2009), 28(3), August 2009, pp. 37:1-37:12.

ABSTRACT: Fluid sounds, such as splashing and pouring, are ubiquitous and familiar but we lack physically based algorithms to synthesize them in computer animation or interactive virtual environments. We propose a practical method for automatic procedural synthesis of synchronized harmonic bubble-based sounds from 3D fluid animations. To avoid audio-rate time-stepping of compressible fluids, we acoustically augment existing incompressible fluid solvers with particle-based models for bubble creation, vibration, advection, and radiation. Sound radiation from harmonic fluid vibrations is modeled using a time-varying linear superposition of bubble oscillators. We weight each oscillator by its bubble-to-ear acoustic transfer function, which is modeled as a discrete Green's function of the Helmholtz equation. To solve potentially millions of 3D Helmholtz problems, we propose a fast dual-domain multipole boundary-integral solver, with cost linear in the complexity of the fluid domain's boundary. Enhancements are proposed for robust evaluation, noise elimination, acceleration, and parallelization. Examples of harmonic fluid sounds are provided for water drops, pouring, babbling, and splashing phenomena, often with thousands of acoustic bubbles, and hundreds of thousands of transfer function solves.

- Paper (PDF)

- Project page (with full video results, etc)

SIGGRAPH CAF Video


Steven An, Theodore Kim and Doug L. James, Optimizing Cubature for Efficient Integration of Subspace Deformations, ACM Transactions on Graphics (SIGGRAPH ASIA Conference Proceedings), 27(5), December 2008, pp. 165:1-165:10.

ABSTRACT: We propose an efficient scheme for evaluating nonlinear subspace forces (and Jacobians) associated with subspace deformations. The core problem we address is efficient integration of the subspace force density over the 3D spatial domain. Similar to Gaussian quadrature schemes that efficiently integrate functions that lie in particular polynomial subspaces, we propose cubature schemes (multi-dimensional quadrature) optimized for efficient integration of force densities associated with particular subspace deformations, particular materials, and particular geometric domains. We support generic subspace deformation kinematics, and nonlinear hyperelastic materials. For an r-dimensional deformation subspace with O(r) cubature points, our method is able to evaluate subspace forces at O(r^2) cost. We also describe composite cubature rules for runtime error estimation. Results are provided for various subspace deformation models, several hyperelastic materials (St. Venant-Kirchhoff, Mooney-Rivlin, Arruda-Boyce), and multimodal (graphics, haptics, sound) applications. We show dramatically better efficiency than traditional Monte Carlo integration.
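The O(r^2) cost quoted above can be illustrated with a minimal sketch, assuming the reduced force is approximated as a weighted sum over cubature points of the local force density projected onto the basis. The function name `cubature_force`, the data layout, and the toy linear-spring density are hypothetical stand-ins for illustration; the paper's optimized point selection and hyperelastic densities are not reproduced here.

```python
def cubature_force(q, cubature_points, force_density):
    """Approximate f(q) as sum_i w_i * U_i^T g(U_i q).

    cubature_points: list of (weight, basis_rows), where basis_rows holds the
    three rows (x, y, z) of the r-column basis at that point (assumed layout).
    force_density: maps a local 3-vector displacement to a 3-vector force.
    """
    r = len(q)
    force = [0.0] * r
    for weight, basis_rows in cubature_points:
        # Local displacement u = U_i q: O(r) work per cubature point.
        u = [sum(row[j] * q[j] for j in range(r)) for row in basis_rows]
        g = force_density(u)
        # Project back into the subspace, U_i^T g: again O(r) work.
        for j in range(r):
            force[j] += weight * sum(basis_rows[a][j] * g[a] for a in range(3))
    return force

# Toy data: one cubature point, r = 2, and a linear spring density g(u) = -3u.
toy_points = [(2.0, [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]])]
toy_force = cubature_force([1.0, 2.0], toy_points, lambda u: [-3.0 * x for x in u])
```

Each cubature point costs O(r), so O(r) points give the O(r^2) total stated in the abstract.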

- Paper (PDF)

- Video (mov)

- Project page

- Source Code (cubica)

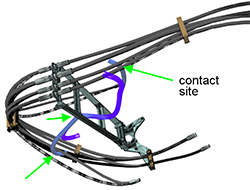

Danny M. Kaufman, Shinjiro Sueda, Doug L. James and Dinesh K. Pai, Staggered Projections for Frictional Contact in Multibody Systems, ACM Transactions on Graphics (SIGGRAPH ASIA Conference Proceedings), 27(5), December 2008, pp. 164:1-164:11.

ABSTRACT: We present a new discrete, velocity-level formulation of frictional contact dynamics that reduces to a pair of coupled projections, and introduce a simple fixed-point property of the projections. This allows us to construct a novel algorithm for accurate frictional contact resolution based on a simple staggered sequence of projections. The algorithm accelerates performance using warm starts to leverage the potentially high temporal coherence between contact states and provides users with direct control over frictional accuracy. Applying this algorithm to rigid and deformable systems, we obtain robust and accurate simulations of frictional contact behavior not previously possible, at rates suitable for interactive haptic simulations, as well as large-scale animations. By construction, the proposed algorithm guarantees exact, velocity-level contact constraint enforcement and obtains long-term stable and robust integration. Examples are given to illustrate the performance, plausibility and accuracy of the obtained solutions.

- Paper (PDF)

- Video (mov)

- Project page

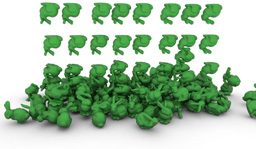

Jonathan Kaldor, Doug L. James and Steve Marschner, Simulating Knitted Cloth at the Yarn Level, ACM Transactions on Graphics (SIGGRAPH Conference Proceedings), 27(3), August 2008, pp. 65:1-65:9.

ABSTRACT: Knitted fabric is widely used in clothing because of its unique and stretchy behavior, which is fundamentally different from the behavior of woven cloth. The properties of knits come from the nonlinear, three-dimensional kinematics of long, inter-looping yarns, and despite significant advances in cloth animation we still do not know how to simulate knitted fabric faithfully. Existing cloth simulators mainly adopt elastic-sheet mechanical models inspired by woven materials, focusing less on the model itself than on important simulation challenges such as efficiency, stability, and robustness. We define a new computational model for knits in terms of the motion of yarns, rather than the motion of a sheet. Each yarn is modeled as an inextensible, yet otherwise flexible, B-spline tube. To simulate complex knitted garments, we propose an implicit-explicit integrator, with yarn inextensibility constraints imposed using efficient projections. Friction among yarns is approximated using rigid-body velocity filters, and key yarn-yarn interactions are mediated by stiff penalty forces. Our results show that this simple model predicts the key mechanical properties of different knits, as demonstrated by qualitative comparisons to observed deformations of actual samples in the laboratory, and that the simulator can scale up to substantial animations with complex dynamic motion.

- Paper (PDF)

- YouTube Video

- Project page

ABSTRACT: Physically based simulation of rigid body dynamics is commonly done by time-stepping systems forward in time. In this paper, we propose methods to allow time-stepping rigid body systems backward in time. Unfortunately, reverse-time integration of rigid bodies involving frictional contact is mathematically ill-posed, and can lack unique solutions. We instead propose time-reversed rigid body integrators that can sample possible solutions when unique ones do not exist. We also discuss challenges related to dissipation-related energy gain, sensitivity to initial conditions, stacking, constraints and articulation, rolling, sliding, skidding, bouncing, high angular velocities, rapid velocity growth from micro-collisions, and other problems encountered when going against the usual flow of time.

- Paper (PDF)

- Project page (with slides)

ABSTRACT: We present a novel wavelet method for the simulation of fluids at high spatial resolution. The algorithm enables large- and small-scale detail to be edited separately, allowing high-resolution detail to be added as a post-processing step. Instead of solving the Navier-Stokes equations over a highly refined mesh, we use the wavelet decomposition of a low-resolution simulation to determine the location and energy characteristics of missing high-frequency components. We then synthesize these missing components using a novel incompressible turbulence function, and provide a method to maintain the temporal coherence of the resulting structures. There is no linear system to solve, so the method parallelizes trivially and requires only a few auxiliary arrays. The method guarantees that the new frequencies will not interfere with existing frequencies, allowing animators to set up a low resolution simulation quickly and later add details without changing the overall fluid motion.

- Paper (PDF)

- Project page

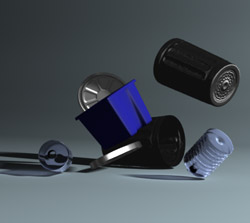

Nicolas Bonneel, George Drettakis, Nicolas Tsingos, Isabelle Viaud-Delmon and Doug L. James, Fast Modal Sounds with Scalable Frequency-Domain Synthesis, ACM Transactions on Graphics (SIGGRAPH Conference Proceedings), 27(3), August 2008, pp. 24:1-24:9.

ABSTRACT: Audio rendering of impact sounds, such as those caused by falling objects or explosion debris, adds realism to interactive 3D audio-visual applications, and can be convincingly achieved using modal sound synthesis. Unfortunately, mode-based computations can become prohibitively expensive when many objects, each with many modes, are impacted simultaneously. We introduce a fast sound synthesis approach, based on short-time Fourier Transforms, that exploits the inherent sparsity of modal sounds in the frequency domain. For our test scenes, this “fast mode summation” can give speedups of 5-8 times compared to a time-domain solution, with slight degradation in quality. We discuss different reconstruction windows, affecting the quality of impact sound “attacks”. Our Fourier-domain processing method allows us to introduce a scalable, real-time, audio processing pipeline for both recorded and modal sounds, with auditory masking and sound source clustering. To avoid abrupt computation peaks, such as during the simultaneous impacts of an explosion, we use crossmodal perception results on audiovisual synchrony to effect temporal scheduling. We also conducted a pilot perceptual user evaluation of our method. Our implementation results show that we can treat complex audiovisual scenes in real time with high quality.
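For context, the time-domain baseline that the abstract compares against can be sketched as a direct sum of exponentially damped sinusoids, one per mode. This is a minimal illustration of standard modal synthesis, not the paper's frequency-domain method; the function name and the mode values are hypothetical.

```python
import math

def synthesize_modal_sound(modes, num_samples, sample_rate=44100.0):
    """Sum a * exp(-d * t) * sin(2 * pi * f * t) over all modes, per sample."""
    samples = [0.0] * num_samples
    for frequency_hz, decay_rate, amplitude in modes:
        omega = 2.0 * math.pi * frequency_hz
        for n in range(num_samples):
            t = n / sample_rate
            samples[n] += amplitude * math.exp(-decay_rate * t) * math.sin(omega * t)
    return samples

# Hypothetical modes: (frequency in Hz, decay rate in 1/s, amplitude).
example_modes = [(440.0, 6.0, 1.0), (1210.0, 9.0, 0.5), (2750.0, 14.0, 0.25)]
samples = synthesize_modal_sound(example_modes, num_samples=2048)
```

The work per output sample grows linearly with the total number of active modes, which is the cost the abstract describes as prohibitive for many simultaneously impacted objects.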

- Paper (PDF)

- Project page

Jernej Barbič and Doug L. James, Six-DoF haptic rendering of contact between geometrically complex reduced deformable models, IEEE Transactions on Haptics, 1(1):39–52, 2008.

ABSTRACT: Real-time evaluation of distributed contact forces between rigid or deformable 3D objects is a key ingredient of 6-DoF force-feedback rendering. Unfortunately, at very high temporal rates, there is often insufficient time to resolve contact between geometrically complex objects. We propose a spatially and temporally adaptive approach to approximate distributed contact forces under hard real-time constraints. Our method is CPU based, and supports contact between rigid or reduced deformable models with complex geometry. We propose a contact model that uses a point-based representation for one object, and a signed-distance field for the other. This model is related to the Voxmap Pointshell Method (VPS), but gives continuous contact forces and torques, enabling stable rendering of stiff penalty-based distributed contacts. We demonstrate that stable haptic interactions can be achieved by point-sampling offset surfaces to input “polygon soup” geometry using particle repulsion. We introduce a multi-resolution nested pointshell construction which permits level-of-detail contact forces, and enables graceful degradation of contact in close-proximity scenarios. Parametrically deformed distance fields are proposed for contact between reduced deformable objects. We present several examples of 6-DoF haptic rendering of geometrically complex rigid and deformable objects in distributed contact at real-time kilohertz rates.

- Paper (PDF)

- Related materials

ABSTRACT: Real-time evaluation of distributed contact forces for rigid or deformable 3D objects is important for providing multi-sensory feedback in emerging real-time applications, such as 6-DoF haptic force-feedback rendering. Unfortunately, at very high temporal rates (1 kHz for haptics), there is often insufficient time to resolve distributed contact between geometrically complex objects.

In this paper, we present a spatially and temporally adaptive sample-based approach to approximate contact forces under hard real-time constraints. The approach is CPU based, and supports both rigid and reduced deformable models with complex geometry. Penalty-based contact forces are efficiently resolved using a multi-resolution point-based representation for one object, and a signed-distance oracle for the other. Hard real-time approximation of distributed contact forces uses multi-level progressive point-contact sampling, and exploits temporal coherence, graceful degradation and other optimizations. We present several examples of 6-DoF haptic rendering of geometrically complex rigid or deformable objects in distributed contact at real-time kilohertz rates.

- Paper (pdf, 4MB)

- Project page (with videos and haptic demo)

!["Spelling SIGGRAPH" from [Twigg and James 2007]](pics/thumb_MWB.png)

ABSTRACT: Animation techniques for controlling passive simulation are commonly based on an optimization paradigm: the user provides goals a priori, and sophisticated numerical methods minimize a cost function that represents these goals. Unfortunately, for multibody systems with discontinuous contact events these optimization problems can be highly nontrivial to solve, and many-hour offline optimizations, unintuitive parameters, and convergence failures can frustrate end-users and limit usage. On the other hand, users are quite adaptable, and systems which provide interactive feedback via an intuitive interface can leverage the user’s own abilities to quickly produce interesting animations. However, the online computation necessary for interactivity limits scene complexity in practice.

We introduce Many-Worlds Browsing, a method which circumvents these limits by exploiting the speed of multibody simulators to compute numerous example simulations in parallel (offline and online), and allow the user to browse and modify them interactively. We demonstrate intuitive interfaces through which the user can select among the examples and interactively adjust those parts of the scene that don’t match his requirements. We show that using a combination of our techniques, unusual and interesting results can be generated for moderately sized scenes with under an hour of user time. Scalability is demonstrated by sampling much larger scenes using modest offline computations.

- Paper (High-res, 10MB PDF)

- Project page

- Software and demo

ABSTRACT: We introduce a simple technique that enables robust approximation of volumetric, large-deformation dynamics for real-time or large-scale offline simulations. We propose Lattice Shape Matching, an extension of deformable shape matching to regular lattices with embedded geometry; lattice vertices are smoothed by convolution of rigid shape matching operators on local lattice regions, with the effective mechanical stiffness specified by the amount of smoothing via region width. Since the naive method can be very slow for stiff models--per-vertex costs scale cubically with region width--we provide a fast summation algorithm, Fast Lattice Shape Matching (FastLSM), that exploits the inherent summation redundancy of shape matching and can provide large-region matching at constant per-vertex cost. With this approach, large lattices can be simulated in linear time. We present several examples and benchmarks of an efficient CPU implementation, including many dozens of soft bodies simulated at real-time rates on a typical desktop machine.

- Paper (PDF, 5.4MB)

- Project page (with videos, and software demo)

ABSTRACT: We describe a technique for using space-time cuts to smoothly transition between stochastic mesh animation clips involving numerous deformable mesh groups while subject to physical constraints. These transitions are used to construct Mesh Ensemble Motion Graphs for interactive data-driven animation of high-dimensional mesh animation datasets, such as those arising from expensive physical simulations of deformable objects blowing in the wind. We formulate the transition computation as an integer programming problem, and introduce a novel randomized algorithm to compute transitions subject to geometric noninterpenetration constraints.

- PREPRINT (pdf, 18MB; Updated June 2007)

- VIDEO (avi [DivX], 74MB)

- Project page

- MEMG Motion Database (Gigabytes of compressed simulation data!)

- SIGGRAPH 2006 Sketch (pdf)

ABSTRACT: Simulating sounds produced by realistic vibrating objects is challenging because sound radiation involves complex diffraction and interreflection effects that are very perceptible and important. These wave phenomena are well understood, but have been largely ignored in computer graphics due to the high cost and complexity of computing them at audio rates. We describe a new algorithm for real-time synthesis of realistic sound radiation from rigid objects. We start by precomputing the linear vibration modes of an object, and then relate each mode to its sound pressure field, or acoustic transfer function, using standard methods from numerical acoustics. Each transfer function is then approximated to a specified accuracy using low-order multipole sources placed near the object. We provide a low-memory, multilevel, randomized algorithm for optimized source placement that is suitable for complex geometries. At runtime, we can simulate new interaction sounds by quickly summing contributions from each mode's equivalent multipole sources. We can efficiently simulate global effects such as interreflection and changes in sound due to listener location. The simulation costs can be dynamically traded off for sound quality. We present several examples of sound generation from physically based animations.

- PAPER (pdf, 6MB)

- VIDEO (mov, 68MB)

- VIDEO (avi [DivX], 67MB)

- Project page (try the demo!)

- Talk slides (ppt, 33MB)

Falling chairs with sound (avi [DivX], 26MB)


ABSTRACT: We extend approaches for skinning characters to the general setting of skinning deformable mesh animations. We provide an automatic algorithm for generating progressive skinning approximations, that is particularly efficient for pseudo-articulated motions. Our contributions include the use of nonparametric mean shift clustering of high-dimensional mesh rotation sequences to automatically identify statistically relevant bones, and robust least squares methods to determine bone transformations, bone-vertex influence sets, and vertex weight values. We use a low-rank data reduction model defined in the undeformed mesh configuration to provide progressive convergence with a fixed number of bones. We show that the resulting skinned animations enable efficient hardware rendering, rest pose editing, and deformable collision detection. Finally, we present numerous examples where skins were automatically generated using a single set of parameter values.

- PAPER (pdf, 8MB)

- VIDEO (avi [DivX], 55MB)

- Project page

ABSTRACT: In this paper, we present an approach for fast subspace integration of reduced-coordinate nonlinear deformable models that is suitable for interactive applications in computer graphics and haptics. Our approach exploits dimensional model reduction to build reduced-coordinate deformable models for objects with complex geometry. We exploit the fact that model reduction on large deformation models with linear materials (as commonly used in graphics) results in internal force models that are simply cubic polynomials in reduced coordinates. Coefficients of these polynomials can be precomputed, for efficient runtime evaluation. This allows simulation of nonlinear dynamics using fast implicit Newmark subspace integrators, with subspace integration costs independent of geometric complexity. We present two useful approaches for generating low-dimensional subspace bases: modal derivatives and an interactive sketch. Mass-scaled principal component analysis (mass-PCA) is suggested for dimensionality reduction. Finally, several examples are given from computer animation to illustrate high performance, including force-feedback haptic rendering of a complicated object undergoing large deformations.
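The runtime evaluation described above can be sketched as follows, assuming the precomputed coefficients are stored as dense linear, quadratic, and cubic tensors. The function name and the nested-list storage are hypothetical choices for illustration, not the paper's data structures.

```python
from itertools import product

def reduced_internal_force(q, linear, quadratic, cubic):
    """Evaluate f_i(q) = L_ij q_j + Q_ijk q_j q_k + C_ijkl q_j q_k q_l."""
    r = len(q)
    force = [0.0] * r
    for i in range(r):
        for j in range(r):
            force[i] += linear[i][j] * q[j]
        for j, k in product(range(r), repeat=2):
            force[i] += quadratic[i][j][k] * q[j] * q[k]
        for j, k, l in product(range(r), repeat=3):
            force[i] += cubic[i][j][k][l] * q[j] * q[k] * q[l]
    return force

# Toy one-dimensional example (r = 1): f(q) = 2q + 3q^2 + 4q^3.
toy_force = reduced_internal_force([0.5], [[2.0]], [[[3.0]]], [[[[4.0]]]])
```

The loops touch only the r reduced coordinates and never the mesh, which is why the evaluation cost is independent of geometric complexity; with dense tensors as assumed here, the cubic term dominates at r^4 multiply-adds.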

- PAPER (pdf, 5MB)

- VIDEO (avi [DivX], 80MB)

- Project page

- CODE

ABSTRACT: We introduce the Bounded Deformation Tree, or BD-Tree, which can perform collision detection with reduced deformable models at costs comparable to collision detection with rigid objects. Reduced deformable models represent complex deformations as linear superpositions of arbitrary displacement fields, and are used in a variety of applications of interactive computer graphics. The BD-Tree is a bounding sphere hierarchy for output-sensitive collision detection with such models. Its bounding spheres can be updated after deformation in any order, and at a cost independent of the geometric complexity of the model; in fact the cost can be as low as one multiplication and addition per tested sphere, and at most linear in the number of reduced deformation coordinates. We show that the BD-Tree is also extremely simple to implement, and performs well in practice for a variety of real-time and complex off-line deformable simulation examples.
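A minimal sketch of a conservative sphere update in this spirit, assuming each point moves as its rest position plus a linear combination of per-mode displacement vectors. The helper names, the centroid-based center, and the data layout are hypothetical; the bound follows from the triangle inequality and is not claimed to match the paper's exact construction.

```python
import math

def precompute_sphere(points, modes):
    """modes[k][i] is the displacement 3-vector of point i in mode k."""
    n = len(points)
    center = tuple(sum(p[a] for p in points) / n for a in range(3))
    radius = max(math.dist(p, center) for p in points)
    # Per-mode center displacement (here: the mean displacement of the points).
    center_modes = [tuple(sum(u[a] for u in mode) / n for a in range(3))
                    for mode in modes]
    # Per-mode bound on how far any point can drift from the moving center.
    radius_growth = [max(math.dist(u, ubar) for u in mode)
                     for mode, ubar in zip(modes, center_modes)]
    return center, radius, center_modes, radius_growth

def update_sphere(center, radius, center_modes, radius_growth, q):
    """Update cost is linear in len(q) and independent of the point count."""
    new_center = tuple(center[a] + sum(ubar[a] * qk
                                       for ubar, qk in zip(center_modes, q))
                       for a in range(3))
    new_radius = radius + sum(dr * abs(qk) for dr, qk in zip(radius_growth, q))
    return new_center, new_radius

# Toy data: four points, two deformation modes, reduced coordinates q.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
modes = [[(0.1, 0.0, 0.0), (0.0, 0.2, 0.0), (0.0, 0.0, -0.1), (0.3, 0.0, 0.0)],
         [(0.0, 0.0, 0.5), (0.0, 0.0, 0.5), (0.2, 0.0, 0.0), (0.0, -0.4, 0.0)]]
q = [0.7, -1.3]
new_center, new_radius = update_sphere(*precompute_sphere(points, modes), q)
```

Because each sphere depends only on its own precomputed quantities and q, spheres can be refreshed in any order and only when a collision query actually visits them.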

- PAPER (pdf, 5MB)

- VIDEO (avi [DivX], 108MB)

- Project page

- Electronic Theatre animation page. (Video links: AVI MOV)

- SIGGRAPH talk slides (zip, WARNING: 247MB! Optimized for 1024x768 resolution.)

- PAPER (pdf, 2MB)

- VIDEO (avi [DivX], 39MB)

- Project page

- CODE