Location Recognition using Prioritized Feature Matching

Yunpeng Li, Noah Snavely, Dan Huttenlocher

NOTICE (1/1/2013):

If you downloaded the Rome16K dataset prior to 1/1/2013, please see the important note about redownloading this dataset below.

Abstract

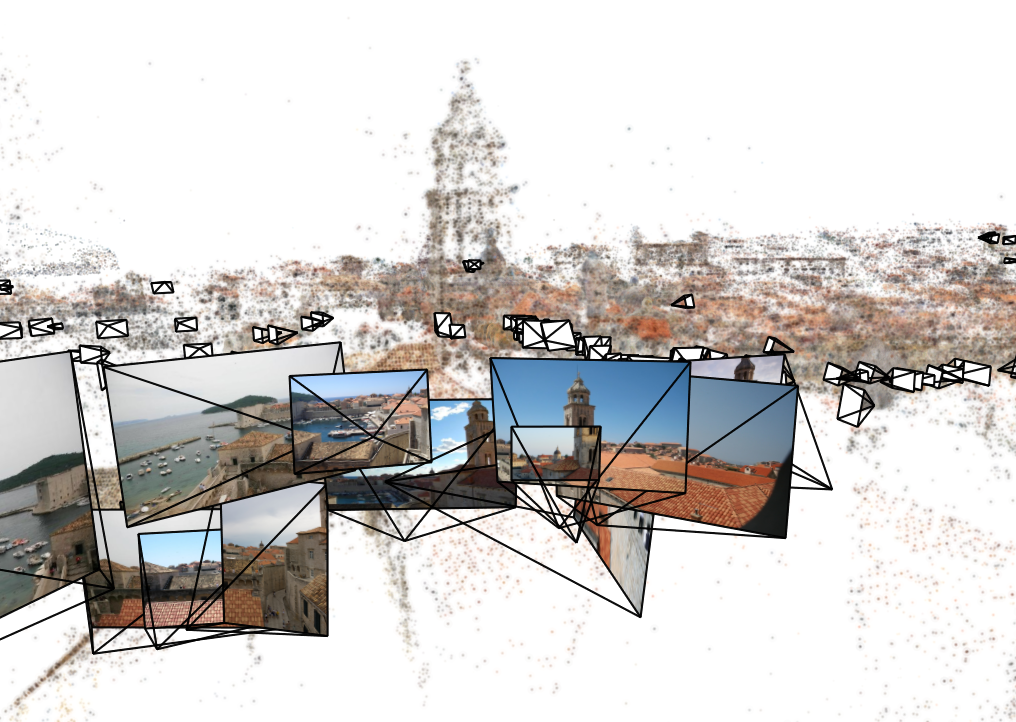

We present a fast, simple location recognition and image localization method that leverages feature correspondence and geometry estimated from large Internet photo collections. Such recovered structure contains a significant amount of useful information about images and image features that is not available when considering images in isolation. For instance, we can predict which views will be the most common, which feature points in a scene are most reliable, and which features in the scene tend to co-occur in the same image. Based on this information, we devise an adaptive, prioritized algorithm for matching a representative set of SIFT features covering a large scene to a query image for efficient localization. Our approach is based on considering features in the scene database, and matching them to query image features, as opposed to more conventional methods that match image features to visual words or database features. We find this approach results in improved performance, due to the richer knowledge of characteristics of the database features compared to query image features. We present experiments on two large city-scale photo collections, showing that our algorithm compares favorably to image retrieval-style approaches to location recognition.
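The abstract describes the prioritized matching only at a high level. The Python sketch below shows one way a prioritized point-to-feature search could be organized, under assumptions that are ours and not taken from this page. Each database point starts with a priority equal to the number of database images that observe it, which serves as a proxy for "which feature points are most reliable". Each point's single representative descriptor is matched against the query features with a brute-force nearest-neighbour ratio test. Every successful match then raises the priority of points that co-occur with it in some database image. The function names, the data layout, the 0.7 ratio, the additive `boost` and the `max_matches` stopping rule are all hypothetical illustrations, not the paper's exact procedure or parameters.

```python
import heapq
from typing import Dict, List, Sequence, Set, Tuple

Descriptor = Sequence[float]


def squared_distance(a: Descriptor, b: Descriptor) -> float:
    """Squared Euclidean distance between two equal-length descriptors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def ratio_test_match(desc: Descriptor, query: Sequence[Descriptor], ratio: float = 0.7) -> int:
    """Index of the nearest query feature if it passes the ratio test, else -1.

    Brute-force search; squared distances are compared against ratio**2.
    """
    if len(query) < 2:
        return -1
    ranked = sorted((squared_distance(desc, q), i) for i, q in enumerate(query))
    (d1, best), (d2, _) = ranked[0], ranked[1]
    return best if d1 < ratio ** 2 * d2 else -1


def prioritized_match(
    points: Dict[int, Descriptor],
    visibility: Dict[int, Set[int]],
    query: Sequence[Descriptor],
    max_matches: int = 100,
    boost: float = 1.0,
    ratio: float = 0.7,
) -> List[Tuple[int, int]]:
    """Match database points to query features in priority order (hypothetical sketch).

    points:     point id -> representative descriptor
    visibility: point id -> ids of database images that observe the point
    Returns (point id, query feature index) pairs.
    """
    # Initial priority: how many database images see the point.
    priority = {p: float(len(visibility.get(p, ()))) for p in points}

    # Inverted index: database image -> points it observes (used for co-occurrence).
    image_to_points: Dict[int, Set[int]] = {}
    for p, images in visibility.items():
        for img in images:
            image_to_points.setdefault(img, set()).add(p)

    # Max-heap via negated priorities; outdated entries are skipped lazily.
    heap = [(-pr, p) for p, pr in priority.items()]
    heapq.heapify(heap)
    visited: Set[int] = set()
    matches: List[Tuple[int, int]] = []

    while heap and len(matches) < max_matches:
        neg_pr, p = heapq.heappop(heap)
        if p in visited or -neg_pr != priority[p]:
            continue  # already processed, or a stale heap entry
        visited.add(p)
        j = ratio_test_match(points[p], query, ratio)
        if j < 0:
            continue
        matches.append((p, j))
        # A match makes points that co-occur with p in a database image more promising.
        for img in visibility.get(p, ()):
            for q in image_to_points[img]:
                if q not in visited and q in priority:
                    priority[q] += boost
                    heapq.heappush(heap, (-priority[q], q))
    return matches


# Toy example with 2-D "descriptors" (real SIFT descriptors are 128-D).
points = {0: (0.0, 0.0), 1: (10.0, 10.0), 2: (5.0, 5.0), 3: (100.0, 100.0)}
visibility = {0: {0, 1, 2}, 1: {0}, 2: {1}, 3: {2}}
query = [(0.1, 0.0), (10.0, 10.1), (50.0, 50.0)]
print(prioritized_match(points, visibility, query))  # → [(0, 0), (1, 1), (3, 2)]
```

In the toy run, point 0 is tried first because three images see it; its match boosts the points it shares images with. Point 2 is rejected because its two nearest query features are almost equally close. Stopping after a fixed number of matches is what makes this kind of search cheap compared with exhaustively matching every database point.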

Paper - ECCV 2010

Paper (PDF, 0.8MB)

Supplemental material (PDF, 2.4MB)

Spotlight slide (PDF, 0.4MB)

Poster (PDF, 26MB)

Datasets

NOTE:

If you downloaded the Rome16K dataset prior to 1/1/2013, please download it again. In a previous version of the dataset, some of the database keyfiles were corrupted and incorrect. This has been fixed in the current version.

We have released two datasets described in our paper: Dubrovnik6K and Rome16K (where the suffix refers to the number of images in the dataset).

Dubrovnik6K (tar.gz, 3.4GB) | README file

Rome16K (tar.gz, 7GB) | README file

See also

Worldwide Pose Estimation using 3D Point Clouds

Acknowledgements

This work was supported in part by NSF (grant IIS-0713185), Intel, Microsoft, Google, and MIT Lincoln Laboratory. We also thank Flickr users for use of their photos.