Grading & Examples

If you want a safe and straightforward path to a full completion grade, we suggest picking at least two non-trivial technical features (e.g., CSG and texture mapping a triangle mesh) and implementing them. We will try to calibrate the full-completion score to roughly two non-trivial features implemented reasonably well, even in a scene with fairly boring composition.

There are many features that can be completed to different degrees and with different quality and robustness. For an open-ended project, we can't really outline all possible implementations of all features. In the past, we have found that the more specific we are with stating what criteria are necessary to meet full completion credit, the more students will try to game those stated criteria. This happens more for C2 than for any other project, probably because students think it's easier to hide incomplete features when you are only rendering one image.

You can also use the obj loader included in the code to load simple models. The most straightforward way to create such models would be to use Blender. Blender is free and open source, and there are lots of tutorials online that show how to use it. With an un-accelerated ray tracer written in Python you won't be able to render very complex models, but you can certainly still do a lot with low polygon content.

See Exporting from Blender for tips on how to use Blender, an open source cross-platform 3D modeling tool, to help you create and export models. If you use 3D asset files in your scene, be sure to identify what parts of the scene were loaded from files, and how you obtained or created those files! As you will see in several of the examples below, you can do a lot of cool stuff without the aid of 3D modeling software, but tools like Blender are an option for you.

Explaining your work

In addition to your code and final render(s), we will ask you to make a short presentation (e.g., with Powerpoint) explaining what you did. This presentation should describe each of your features and include images demonstrating those features. If the features are obvious from your main scene, then you can use that as a demonstration. However, we suggest saving images that you render along the way while developing features that show off their behavior clearly. The hope is that this will save the TAs from needing to dive into as much of your code. For example, if you

If you do not demonstrate a feature clearly, you may not receive credit for it, even if you have implemented it. The staff does not have the bandwidth to manually investigate 50 open-ended code submissions.

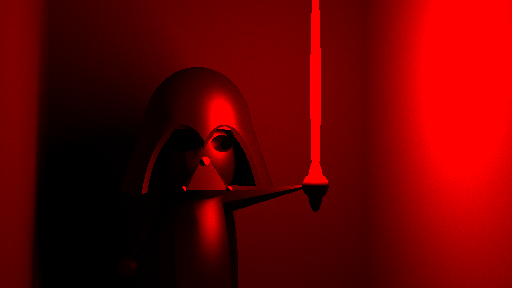

Example From the Dark Side (Raymond Lin & Ben Nozik)

Below is an example of a strong submission from Raymond Lin & Ben Nozik. This submission got a healthy amount of bonus credit for strong composition, as well as impressive use of CSG, path tracing, and importance sampling.

Raymond, who is now one of your TAs, graciously offered to make an example presentation video to illustrate the kind of thing we are looking for, since this is a new part of the submission this year:

Features to Consider

Below we describe some examples of features that could count toward your project.

Excellent Scene Composition:

Good composition matters, and in some cases, could even compensate for technical features that are otherwise a bit lacking. The philosophy here is that making particularly good use of the basics can demonstrate strong understanding of the fundamentals, which deserves its own reward.

Good composition is subjective, and the staff will be evaluating the composition of your project. However, there are some things you can do to help. If you plan to focus on creating a compelling composition, anticipate spending time on trial and error. Try to set up an efficient pipeline for exploring composition early on, so you can quickly iterate and find parameters that look best. If you have an especially nice pipeline in place to pre-visualize scene composition, describe/show it for us in your presentation or report, and explain what your intention with the composition was. These things will help convince us that you have thought seriously about the composition of your submission.

There are lots of ways you can save time by rendering images in pieces and then re-combining those pieces into a larger final render. For example, you could render 4 different low-res images of the scene with interleaved rays that are re-combined at the end into one high-res image (or averaged for anti-aliasing). Or, since light is linear, you could render each light separately and recombine the resulting images with different weights to approximate different light strengths. This requires a bit of setup in advance, but can save a ton of time if you plan to explore a lot of different composition and lighting choices.
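Both recombination tricks can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function names `combine_lights` and `interleave_2x2` are hypothetical, images are assumed to be float arrays of shape `(H, W, 3)`, and pixel values are assumed to be linear (not yet gamma-corrected or clamped), since the weighted sum is only valid on linear values.

```python
import numpy as np

def combine_lights(per_light_images, weights):
    """Weighted sum of images that were each rendered with a single light on.

    Assumes every image holds linear radiance values of shape (H, W, 3).
    """
    stack = np.stack(per_light_images, axis=0)               # (L, H, W, 3)
    w = np.asarray(weights, dtype=float).reshape(-1, 1, 1, 1)
    return (stack * w).sum(axis=0)

def interleave_2x2(tiles):
    """Merge four (H, W, 3) renders into one (2H, 2W, 3) image.

    tiles[2 * dy + dx] is assumed to hold the pixels that belong at
    rows dy::2 and columns dx::2 of the full-resolution image.
    """
    h, w, c = tiles[0].shape
    full = np.empty((2 * h, 2 * w, c), dtype=tiles[0].dtype)
    for dy in range(2):
        for dx in range(2):
            full[dy::2, dx::2] = tiles[2 * dy + dx]
    return full
```

With the per-light images cached on disk, trying a new lighting balance only costs one call to `combine_lights` rather than a full re-render.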

Kirby in Space, by Yingshi Zhu and Mandy Kwok

The example below from a few years ago makes particularly good use of spheres and lighting to create a compelling composition. Note that this submission might be less than full completion if it were submitted this year, as the time and requirements for the project have increased in recent years, but it was strong for its year and remains a good example of how basic elements can be combined to create a compelling scene.

Potential Technical Features

Think about what features to prioritize and how certain features might complement each other. For example, some features might substantially increase computation time, which could be offset by choosing to implement an acceleration structure for ray intersection (e.g., a k-d tree) as another one of your features.

Your technical feature needs to work (aside from rare edge cases). Do not claim that you have implemented something that you have not; we will check suspicious claims, and you will be penalized if they are inflated. In particularly egregious (e.g., deliberate-looking) cases, false claims may be treated as academic dishonesty.

Some ideas to consider include:

- Additional geometric primitives: you can add new primitives, implement their ray intersection calls, and use them to create new shapes. Note that a primitive that is simply an array of existing primitives does not count as a new primitive (e.g., the cube is just a collection of simple triangles). One simple shape may not be treated as a complete extra feature, either (e.g., a plane). Shapes like a torus or cone are good choices.

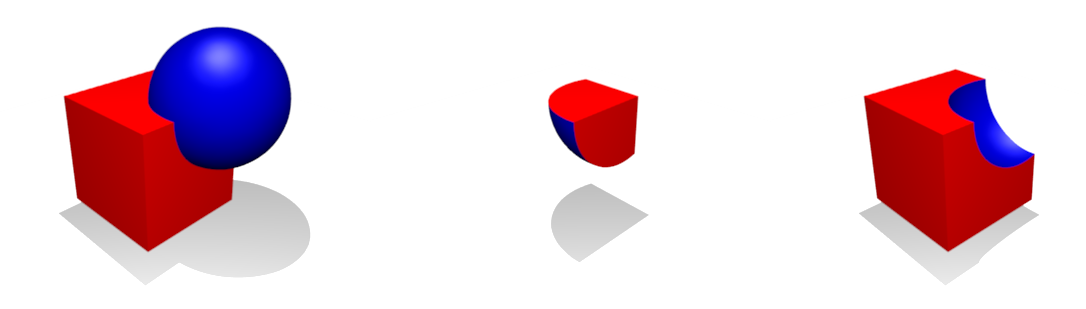

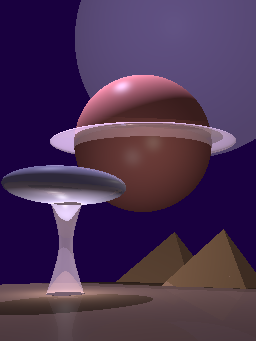

- Constructive solid geometry (CSG): implement boolean operations on existing primitives. E.g., the intersection of two spheres can create a pretty cool flying saucer shape.

- Additional shading modes or phenomena (e.g., refraction or more general BRDFs), such as:

- Fresnel reflection

- Refraction, caustics, lensing

- Scattering

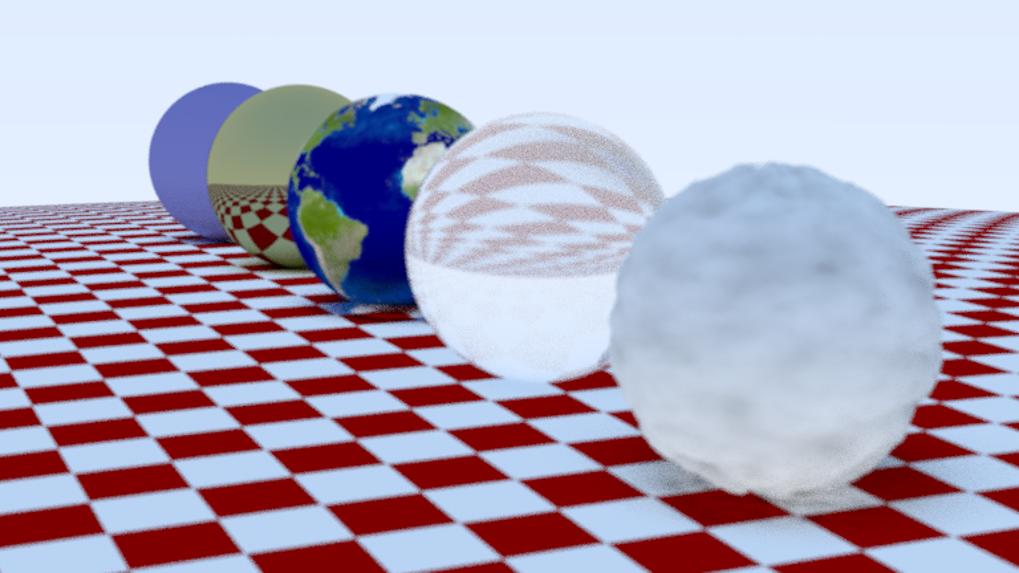

- Surface Texture Mapping: Various types of texture mapping including

- Diffuse texture mapping

- Displacement mapping

- Normal Mapping

- Bump Mapping

- Ray-intersection acceleration structures: You could implement code to make ray tracing faster. We haven't discussed this much in class, but I have added a bonus video from Steve on the topic to Canvas, and if you are interested, the topic is easy to look up online as well. Make sure you describe this type of feature clearly in your submission, as it won't necessarily be visible in your image. Also, this feature works best if you use it to ray trace a higher resolution, more anti-aliased, and/or more complex scene with more complicated effects. In your report, include the speed gains you measured on at least one test scene on your machine. Also note that we can and do check that you actually implemented what you say you did here.

After discussion with the TAs, we decided on the following rules with regards to speeding up your ray tracer:

- No GPUs. This is about making sure everyone is working with comparable hardware.

- You may implement things that make your ray tracer run faster that aren't really computer graphics techniques, like multithreading or Cython calls, but these will not be counted as features. They may allow you to explore more ambitious features by tracing more rays quicker, though, so you may consider some of these options anyway.

- We will count geometric acceleration structures like k-d trees toward features.
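Acceleration structures such as k-d trees and bounding volume hierarchies typically rely on a cheap test for whether a ray overlaps an axis-aligned box. The sketch below shows the standard slab test in plain Python. The function name `ray_aabb` and its argument layout are hypothetical and are not part of the provided code; a real feature would still need the tree construction and traversal around it.

```python
def ray_aabb(origin, direction, box_min, box_max):
    """Slab test: return (t_near, t_far) where the ray overlaps the box, or None.

    All arguments are 3-element sequences. Only t >= 0 is considered,
    so boxes entirely behind the ray origin are reported as misses.
    """
    t_near, t_far = 0.0, float("inf")
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if abs(d) < 1e-12:
            # Ray is parallel to this pair of planes: it must start between them.
            if o < lo or o > hi:
                return None
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near = max(t_near, t0)
        t_far = min(t_far, t1)
        if t_near > t_far:
            return None
    return t_near, t_far
```

To report speed gains, one option is to wrap the same render call with `time.perf_counter()` with and without the structure enabled, on the same scene and resolution.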

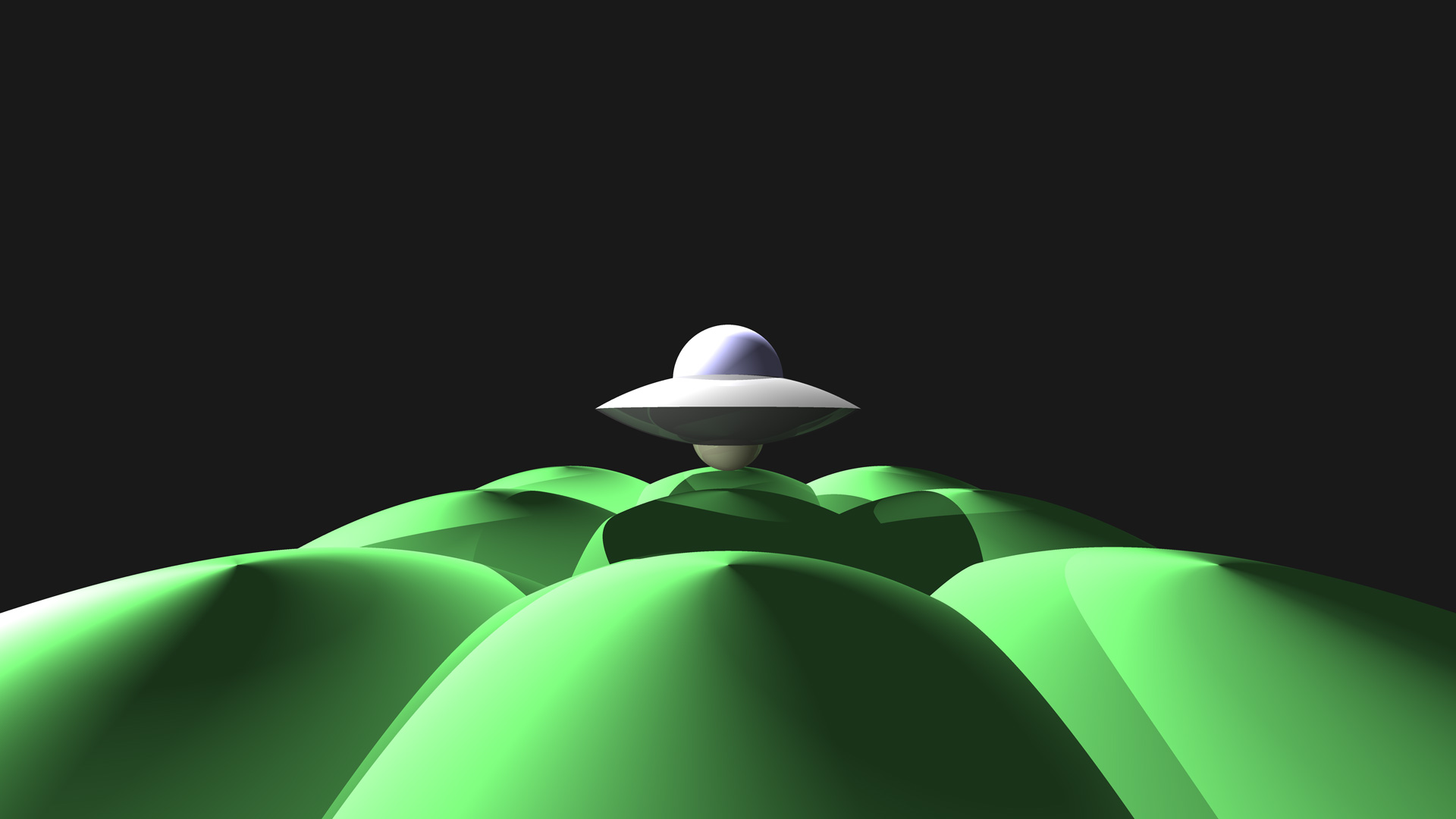

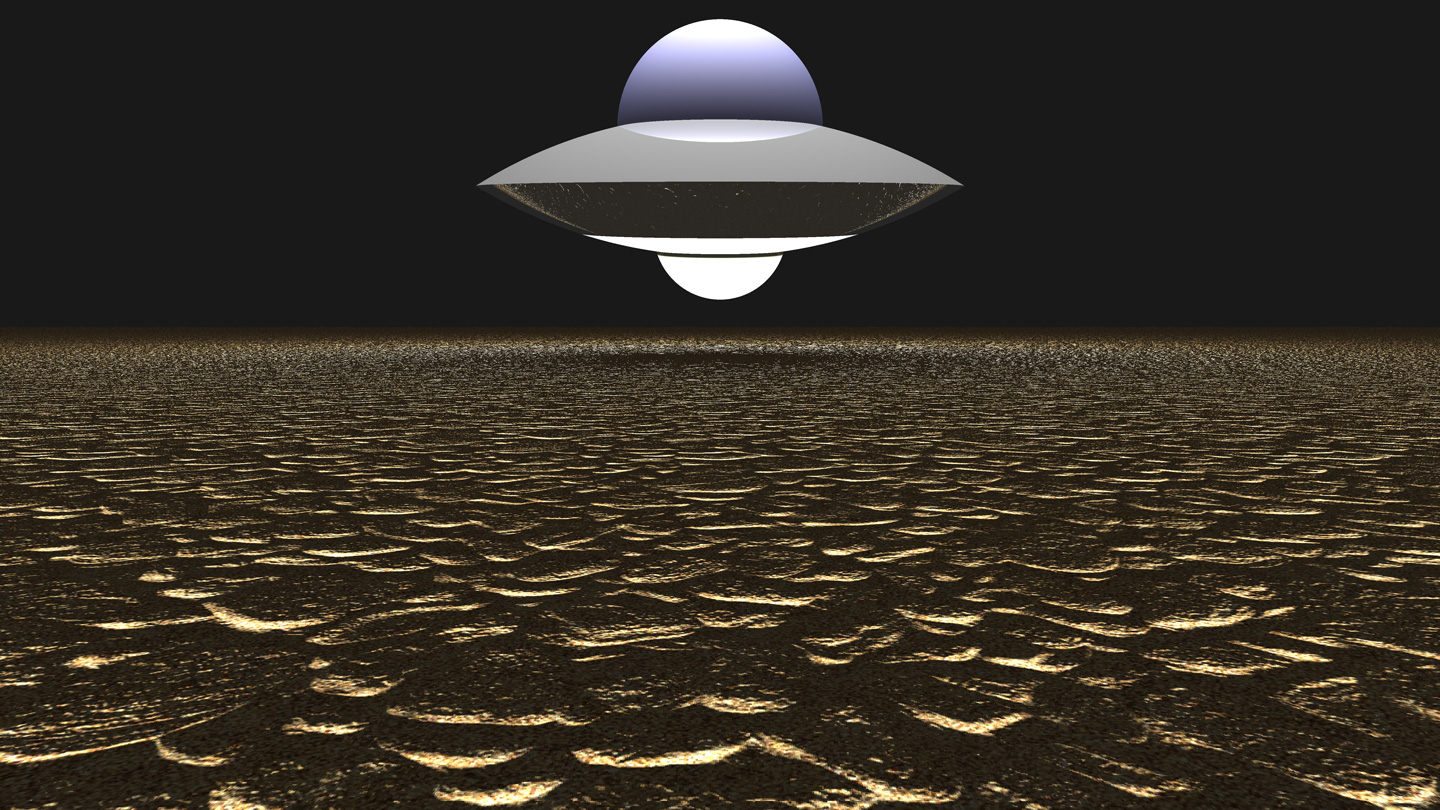

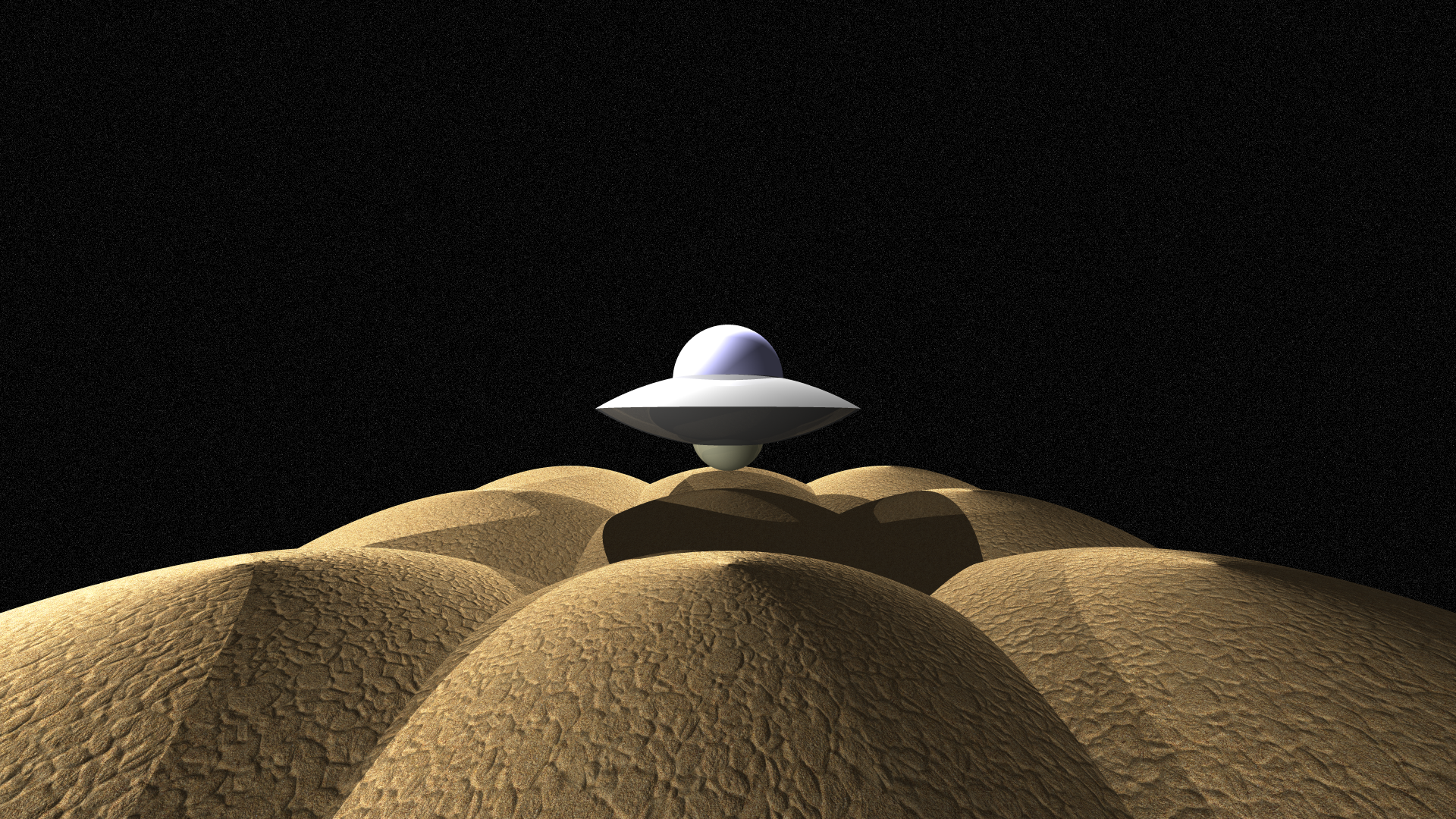

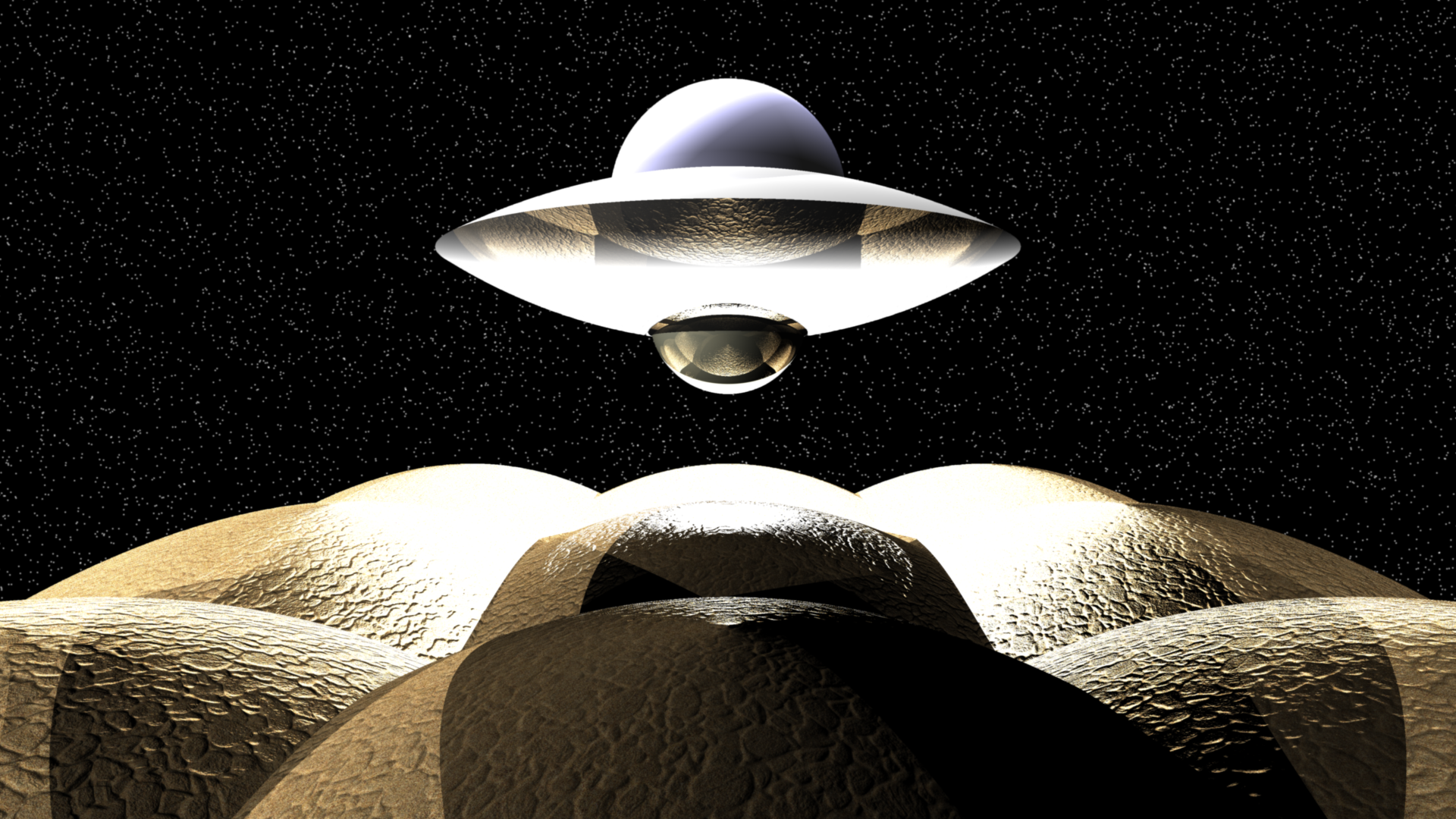

UFO Scenes

The images below show a UFO. The saucer of the UFO was created by using CSG to render the intersection of two spheres, and the cockpit and science-y under-bulb are each an additional sphere.
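One common way to ray trace such an intersection is to compute, for each sphere, the interval of ray parameters during which the ray is inside it, and then intersect the two intervals. The sketch below illustrates that idea. It is not the code used for these images, and the names `sphere_interval`, `intersect_intervals`, and `saucer_hit` are hypothetical. It assumes a unit-length ray direction.

```python
import math

def sphere_interval(origin, direction, center, radius):
    """Return (t_in, t_out) where the ray is inside the sphere, or None.

    Assumes `direction` has unit length.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None
    s = math.sqrt(disc)
    return (-b - s, -b + s)

def intersect_intervals(a, b):
    """CSG intersection of two inside-intervals along the same ray."""
    if a is None or b is None:
        return None
    lo, hi = max(a[0], b[0]), min(a[1], b[1])
    return (lo, hi) if lo < hi else None

def saucer_hit(origin, direction, c1, c2, radius, t_min=1e-6):
    """Nearest hit on the lens-shaped intersection of two equal spheres."""
    span = intersect_intervals(
        sphere_interval(origin, direction, c1, radius),
        sphere_interval(origin, direction, c2, radius),
    )
    if span is None:
        return None
    t_in, t_out = span
    if t_in > t_min:
        return t_in
    if t_out > t_min:      # ray starts inside the saucer
        return t_out
    return None
```

The surface normal at the hit comes from whichever sphere supplied the winning `t`. Union and difference can be built the same way by taking the union or set difference of the intervals instead.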

In our first UFO scene we render the UFO above some paraboloid grass hills. Composition for this image is a bit weak. It technically has two features (CSG and paraboloid intersection), but both are only very basic implementations. It would probably end up getting a bit under full completion credit as-is. However, this could be brought up to full completion if the presentation showed more general use of CSG (e.g., results that demonstrate the union and difference operations working as well, and/or demonstrating CSG on more primitives).

To bring this up to full completion, one could include in their submission images like these:

Those images are from Wikipedia, though; yours should be rendered using your ray tracer. If you claim to have rendered images you pulled from the Internet, that would be an academic integrity violation, so don't do that.

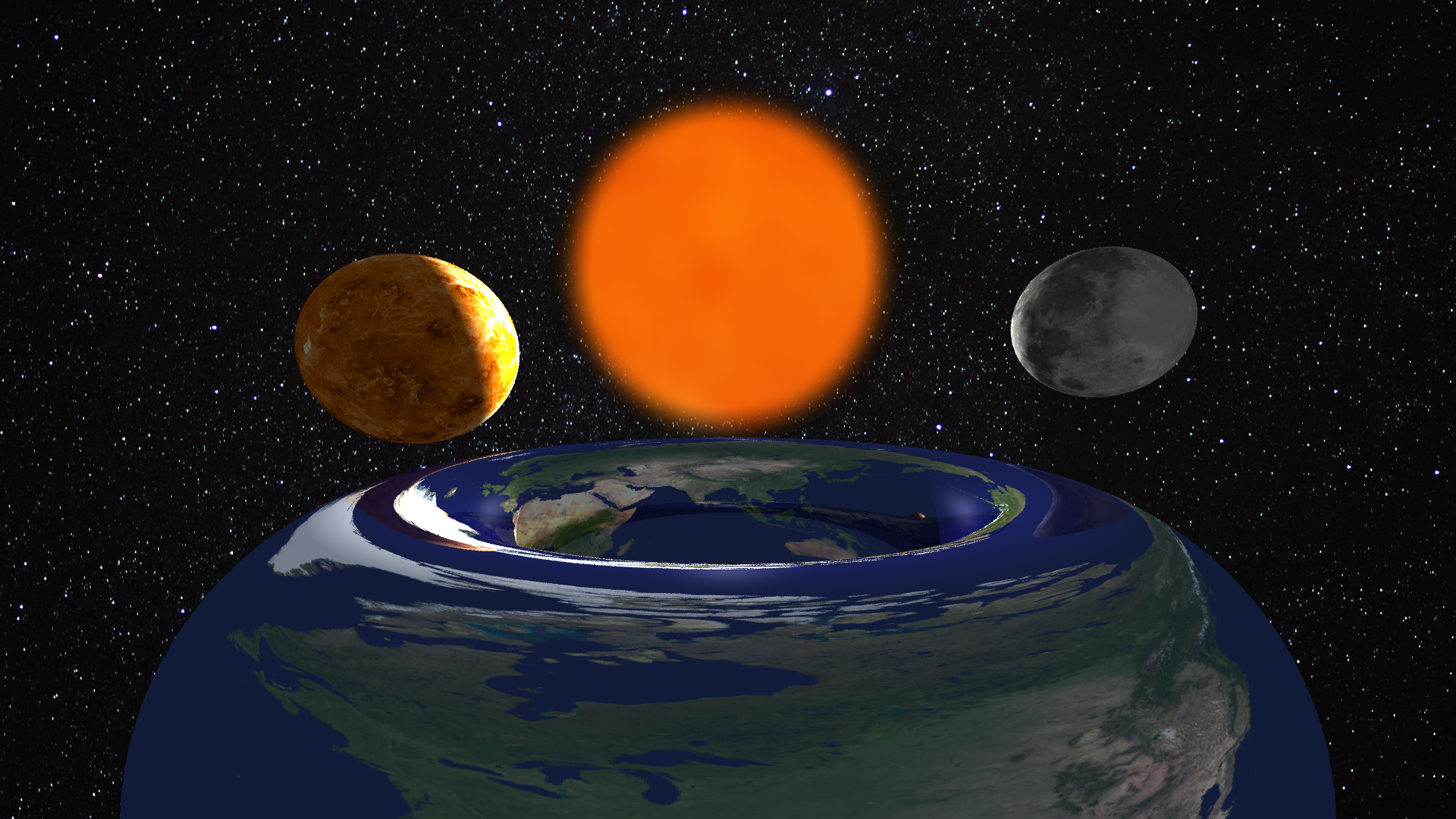

In our second UFO scene we render the UFO above the desert ground (UFOs like the desert). Here, the desert is rendered with a normal map and a diffuse texture map. Again, it technically has two features, but for full credit on these features we would want to see a bit more generalization. Just texture mapping a plane is a bit simplistic. The composition for this image is stronger than the previous one, which might help as well. It uses several lights to create dramatic lighting on the ground and make it look like the bottom of the UFO is glowing. This image would probably get a bit closer to full completion credit, maybe even full completion depending on the clarity of the presentation / report.
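As a rough illustration of what texturing and normal mapping a ground plane involves, the sketch below looks up a diffuse color and a perturbed normal for a point on the plane y = 0. Everything here is an assumption for illustration: the function names, the nearest-texel lookup, textures stored as float arrays in [0, 1], and the tangent frame (tangent +x, bitangent +z, normal +y). Normal maps from different tools may use a flipped green channel.

```python
import numpy as np

def sample_nearest(texture, u, v):
    """Nearest-texel lookup with wrap-around; texture has shape (H, W, 3)."""
    h, w = texture.shape[:2]
    col = int((u % 1.0) * w) % w
    row = int((v % 1.0) * h) % h
    return texture[row, col]

def ground_albedo_and_normal(point, diffuse_tex, normal_tex, tile=1.0):
    """Return (albedo, world_normal) for a point on the plane y = 0.

    `tile` is the world-space size of one texture repeat.
    """
    u, v = point[0] / tile, point[2] / tile
    albedo = sample_nearest(diffuse_tex, u, v)
    # Decode the tangent-space normal from [0, 1] to [-1, 1].
    n_ts = sample_nearest(normal_tex, u, v) * 2.0 - 1.0
    # Assumed frame: tangent = +x, bitangent = +z, surface normal = +y.
    n_world = np.array([n_ts[0], n_ts[2], n_ts[1]])
    return albedo, n_world / np.linalg.norm(n_world)
```

Generalizing this beyond a plane mostly means computing UV coordinates and a tangent frame per primitive (for example, per triangle of a mesh), which is the kind of generalization described above.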

In this third UFO image, the UFO is rendered above sandy hills that are represented by paraboloids with texture and normal mapping. The lighting here is not as good as the one above, but there are more technical features. In this case, this submission would probably do at least as well as the one above, probably better if it included the supplemental CSG demonstration.
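A paraboloid hill needs its own ray intersection routine, which reduces to solving a quadratic in the ray parameter. The sketch below assumes a downward-opening, unbounded paraboloid of the form y = py - k((x - px)^2 + (z - pz)^2) with peak (px, py, pz). The function name and parameterization are hypothetical and may differ from how the hills in these images were defined.

```python
import math

def ray_paraboloid_hill(origin, direction, peak, k, t_min=1e-6):
    """Nearest t > t_min where the ray meets y = py - k*((x-px)^2 + (z-pz)^2)."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    px, py, pz = peak
    rx, rz = ox - px, oz - pz
    # Substituting the ray into the surface equation gives a*t^2 + b*t + c = 0.
    a = k * (dx * dx + dz * dz)
    b = 2.0 * k * (rx * dx + rz * dz) + dy
    c = k * (rx * rx + rz * rz) + oy - py
    if abs(a) < 1e-12:
        # Vertical ray (or k == 0): the equation is linear in t.
        if abs(b) < 1e-12:
            return None
        roots = [-c / b]
    else:
        disc = b * b - 4.0 * a * c
        if disc < 0:
            return None
        s = math.sqrt(disc)
        roots = sorted([(-b - s) / (2.0 * a), (-b + s) / (2.0 * a)])
    for t in roots:
        if t > t_min:
            return t
    return None
```

The unnormalized surface normal at a hit point (x, y, z) is the gradient (2k(x - px), 1, 2k(z - pz)), which points upward out of the hill.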

Finally, here we put some more time and effort into composition and lighting. We also added some procedural stars as the background by first rendering a texture, then doing a lookup in this texture for the background color. This submission would probably get some bonus points (especially with the supplemental CSG demonstration). It would be important to summarize how the stars and texture mapping are done in the submitted presentation.
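One possible way to build such a star background is sketched below: generate a mostly black texture with randomly placed bright texels, then index it by ray direction for rays that hit nothing. The latitude-longitude mapping, the function names, and all parameter values are assumptions for illustration. The text above does not specify how the lookup for these images was done.

```python
import numpy as np

def make_star_texture(height=256, width=512, n_stars=400, seed=0):
    """Black (H, W, 3) texture with randomly placed gray-white star texels."""
    rng = np.random.default_rng(seed)
    tex = np.zeros((height, width, 3))
    rows = rng.integers(0, height, n_stars)
    cols = rng.integers(0, width, n_stars)
    brightness = rng.uniform(0.3, 1.0, n_stars)
    tex[rows, cols] = brightness[:, None]   # same value in R, G, and B
    return tex

def background_color(direction, tex):
    """Look up a texel for a ray direction using a latitude-longitude mapping."""
    d = np.asarray(direction, dtype=float)
    d = d / np.linalg.norm(d)
    u = 0.5 + np.arctan2(d[2], d[0]) / (2.0 * np.pi)     # longitude -> [0, 1]
    v = np.arccos(np.clip(d[1], -1.0, 1.0)) / np.pi      # 0 at +y, 1 at -y
    h, w = tex.shape[:2]
    col = min(int(u * w), w - 1)
    row = min(int(v * h), h - 1)
    return tex[row, col]
```

In the ray tracer, `background_color` would be called for any ray that misses every object, in place of returning a constant background color.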

Submissions from Previous Years

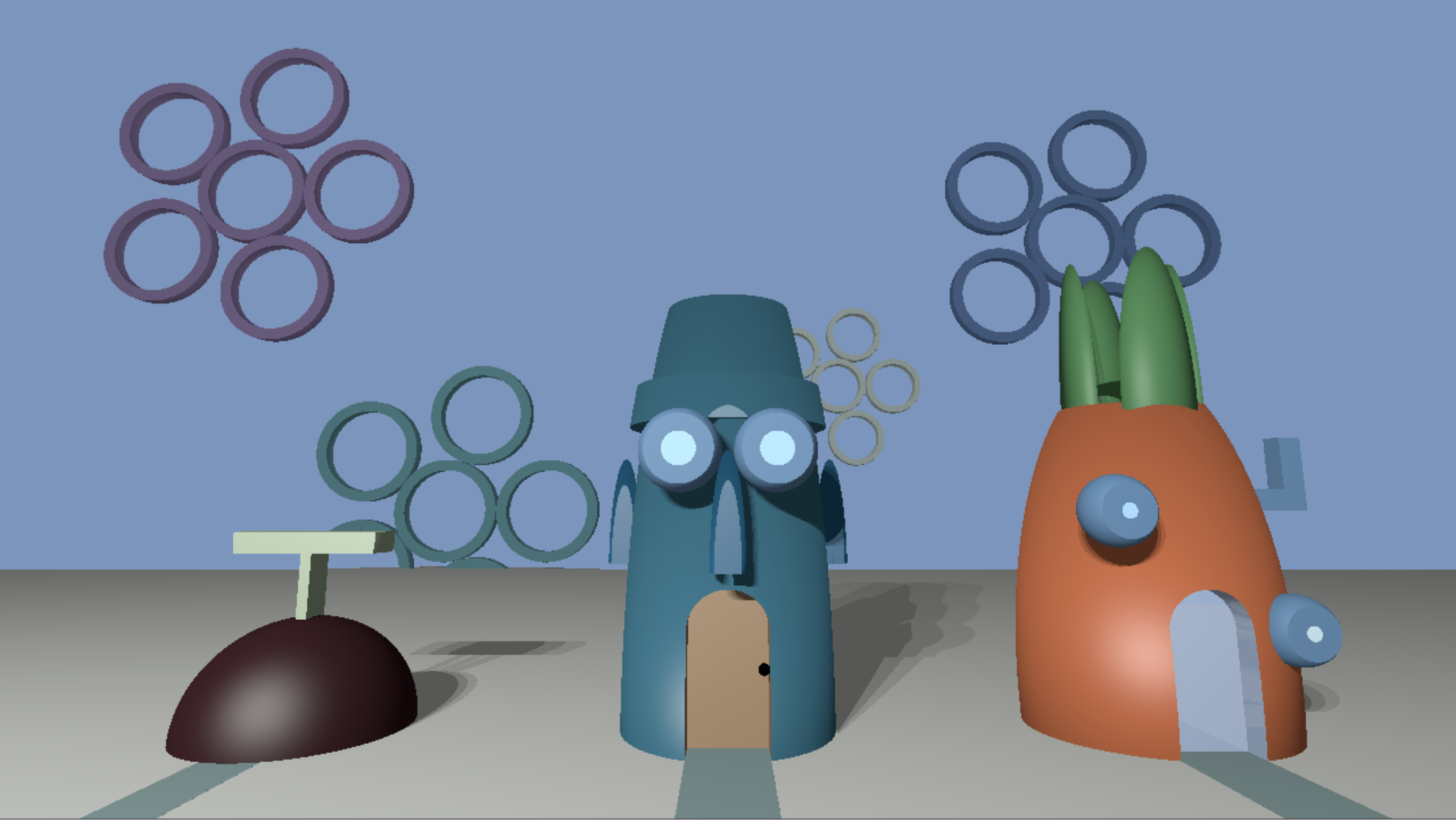

Alan Liu and Selina Xiao (2023)

These two implemented ellipsoid, cylinder, and cone primitives, constructive solid geometry, texture mapping, and a cool composition.

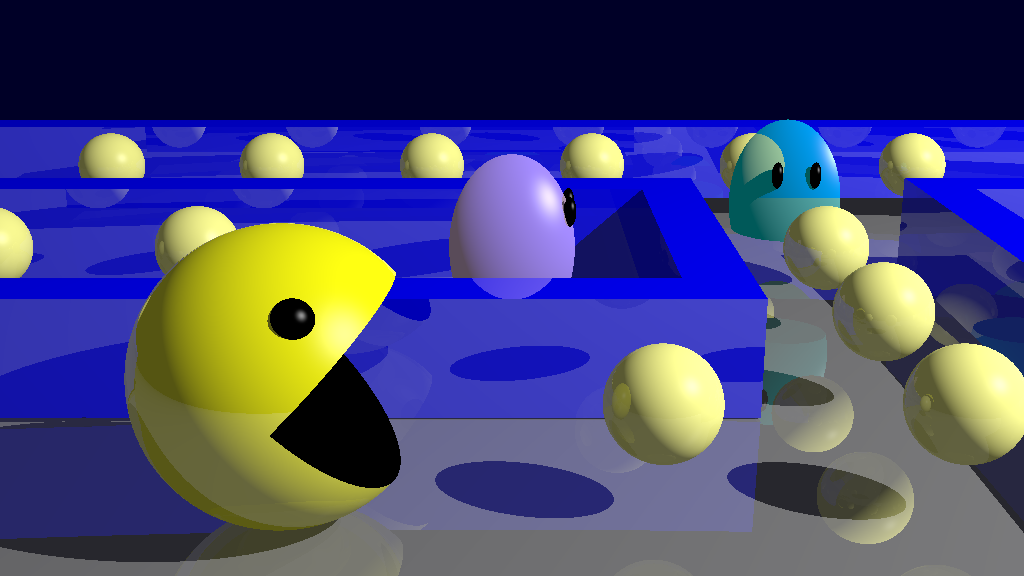

Ethan Yang and Peter Wu (2023)

These two implemented a path tracer, refraction, thin lens approximation with defocus, and texture, displacement, and normal mapping. This was a really ambitious submission, and many have failed to implement much less ambitious lists of features.

CSG and Ellipsoids by Ruyu Yan and Becky Hu

This submission has nicely implemented CSG, as well as ellipsoids, some new material, and great composition.

Solar Donut Dolly Zoom by Dubem Ogwulumba and William Ma

The top submission from 2021

Some 2022 Examples

Prithwish Dan & Simon Kapen

Very impressive use of constructive solid geometry. The entire scene is made from CSG by combining various basic shapes.

Sissel Sun & Claire Zhou

Nicholas Broussard & Orion Tian

Jack Otto and Sean Brynjolfsson

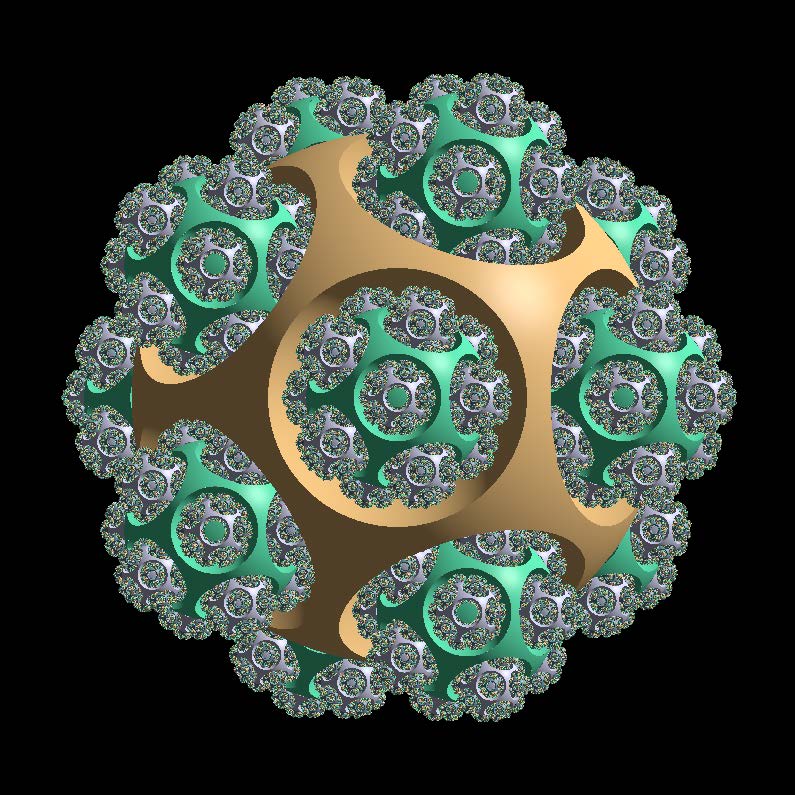

This one was rendered using fractals, which lend themselves well to accelerated ray tracing of very complex geometry.