T-Th 9:05

or

T-Th 11:15

in Olin 155

CS 1110: Introduction to Computing Using Python

Fall 2014

About:

Announcements

Staff

Consultants

Times & Places

Calendar

Materials:

Texts

Python

Command Shell

Terminology

VideoNote

Handouts:

Lectures

Assignments

Labs

Assessment:

Grading

Exams

Resources:

CMS

Piazza

AEWs

FAQ

Python API

Python Tutor

Style Guide

Academic Integrity

Assignment 6:

Clustering

Due to CMS by Thursday, November 20th at 11:59 pm.

Update 11/16/14: We forgot to include the precondition for the method run() in the class ClusterGroup. This is now included in the instructions below. In addition, the file a6.py has been updated.

Big Data is a major computer science topic these days. As computers become both ubiquitous and more powerful, many applications — from science to business to entertainment — are generating huge amounts of data. This data is too large to process by conventional means. That means we need new tools (such as new programming languages) and new algorithms to handle it all. The CS department has several upper level courses (such as CS 4780: Machine Learning) dedicated to this topic.

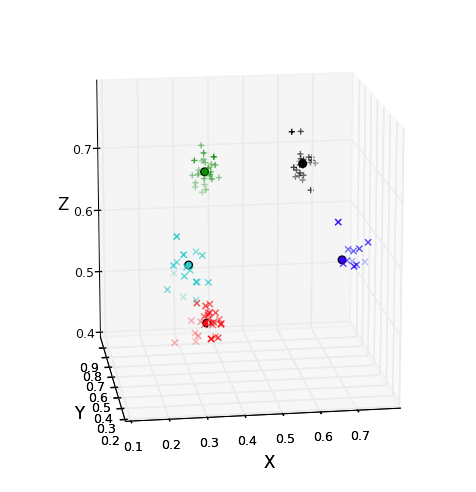

In this assignment you will work with one of the most popular (though not necessarily most efficient) tools for analyzing large amounts of data: k-means clustering. In k-means clustering, you organize the data into a small number (k) of clusters of similar values. For example, in the picture to the right, there are a lot of points in 3-dimensional space. These points are color-coded into five clusters, with all the points in a cluster being near to one another.

If you look at the wikipedia entry for k-means clustering, you can see that it has many, many applications. It has been used in market analysis, recommender systems (e.g., how Netflix recommends new movies to you), and computer vision, just to name a few. It is an essential tool to have if you are working with data. With the skills and knowledge you have been acquiring in CS 1110, you are now able to implement it yourself!

Learning Objectives

This assignment has several important objectives.

- It gives you practice working with loops (both for-loops and while-loops).

- It gives you practice with writing your own classes.

- It illustrates the importance of class invariants.

- It gives you practice with using data encapsulation in classes.

- It gives you practice with both both 1-dimensional and 2-dimensional lists.

- It gives you experience with designing helper functions to structure your code properly.

- It demonstrates that you have the ability to implement a powerful data-analysis algorithm on real data.

Table of Contents

Authors: W. White, D. Murphy, T. Westura, S. Marschner, L. Lee

- Academic Integrity and Collaboration

- K-Means Clustering

- How to Work on the Assignment

- Recommended Implementation Strategy

- Finishing the Assignment

Academic Integrity and Collaboration

Please review CS1110's academic integrity policies. If you talk to other students, please cite the collaboration in your source code. In addition, if you look at other sources online on K-means clustering, and use that to help with your code, please cite that as well. We have given this assignment before (though we have changed it up a bit to make it easier), so please do not look online for previous years solutions.

Depending on the amount of collaboration, we may or may not take off points. We generally reserve point deductions for sharing large amounts of code, and not just a few lines. However, we are not going to explain our deductions ahead of time. The only way to guarantee that you do not lose points is if you do not look at anyone else's code.

The bigger problem is if you do not cite your sources or collaborators at all. If we discover large similarities between two submissions, and neither of you cited the other, we will be forced to hold an Academic Integrity Hearing. Neither of us wants that.

Collaboration Policy

You may do this assignment with one other person. If you are going to work together, then form your group on CMS as soon as possible. If you do this assignment with another person, you must work together. It is against the rules for one person to do some programming on this assignment without the other person sitting nearby and helping.

With the exception of your CMS-registered partner, is not a good idea to look at anyone else's code or show your code to anyone else, in any form what-so-ever. If you do see someone else's code, and it affects how you write your own, then you must cite the source in your submission.

K-Means Clustering

In this section, we describe what cluster analysis is, and how we use k-means clustering to implement it. Cluster analysis is the act of grouping together similar points into groups. For example, one of the applications in this assignment is clustering for epidemiological analysis. You will get data with 2-dimensional longitude-latitude pairs of where birds carrying different strains of avian flu were found. You will cluster these into groups that give insight into different regions where the flu strain appeared.

When we say point, it could be a 2-dimensional point, a 3-dimensional point, a 4-dimensional point, and so on. In fact, we could have points of arbitrary dimension, particularly if the data is not spatial. For example, one of the data sets for this assignment is a fictional market analysis of candies. Each candy is a 4-dimensional point that represents how sweet, sour, nutty, and crunchy it is.

Distances between Points

When we cluster points, we use the Euclidean Distance to measure the distance between them. You may already know how to compute the distance between two 2-dimensional points: d(p,q)=√(px−qx)2+(py−qy)2 or between 3-dimensional points: d(p,q)=√(px−qx)2+(py−qy)2+(pz−qz)2. These are special cases of the general definition: given two n-dimensional points p=(p1,p2,…,pn) and q=(q1,q2,…,qn), the Euclidean distance between them is:

d(p,q)=√(p1−q1)2+(p2−q2)2+⋯+(pn−qn)2For example, suppose we have two points: p=(0.1,0.2,0.3,0.4) and q=(0.0,0.2,0.3,0.2). Then:

d(p,q)=√(0.1−0.0)2+(0.2−0.2)2+(0.3−0.3)2+(0.4−0.2)2=0.7071…Cluster Centroids

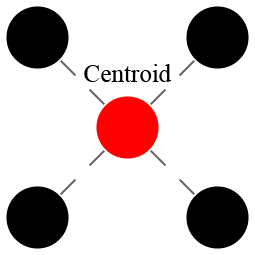

Given a set of points, we can identify its centroid. The centroid is the “center of mass” of the cluster, which is to say, the average, or mean, of all of the points in the cluster. The centroid might happen to be one of the points in the cluster, but it does not have to be. For example, the picture to the right is an example of a 4-point cluster (black circles) with a centroid (red circle) that is not one of the points in the cluster.

To compute a centroid, we average. Remember that to average, you add up a collection of things and divide by the total. The same is true for points; we add up all the points, and then “divide” the result by the total number of points. Adding two points results in a new point that is the coordinate-wise addition of the two input points:

p+q=(p1+q1,p2+q2,…,pn+qn).When we divide a point by a number, the division is done coordinate-wise, as follows:

p/a=(p1/a,p2/a,…,pn/a)The Algorithm

The idea of k-means is quite simple: each point should belong to the cluster whose mean (centroid) it is closest to. But, the centroid of a cluster depends on which points you put into it. This creates a kind of chicken-and-egg problem, which is solved using an iterative approach: first assign points to clusters, then compute new centroids for these clusters, then re-assign points using the new centroids, and so on.

To make this general idea into a precise algorithm we can implement, we break down k-means clustering into several steps. Throughout, let n be the dimension of the points.

1. Pick k Centroids

Our goal is to divide the points into k adjacent groups. The first step is to guess k centroids. Throughout these instructions, we will refer to the centroids as m1,m2,…,mk. (The letter m stands for “mean”, or centroid, which is where the algorithm's name comes from.)

Remember that the centroids are themselves n-dimensional points. Hence, for any mean mj, where 1<=j<=k, we have

mj=(mj,1,mj,2,…,mj,n)So each mj,i represents the ith coordinate of the jth centroid.

To pick k centroids, all we do is choose k points from our original dataset. We will choose these points at random, as if drawing cards from a deck. This is referred to as the Forgy Method, and it is one of the most popular ways to start k-means clustering.

2. Partition the Dataset

Now that we have k centroids, we assign every point in the data set to a centroid. We do this by matching each point to the nearest centroid mj, and then have the points assigned to each centroid form a new cluster. This is known as partitioning because each point will belong to one and only one cluster.

If there happens to be a tie between which centroids are closest to a given point, we arbitrarily break the tie in favor of the mj with smallest j. If we put the centroids in a list, this would be the centroid that comes first in the list.

There will then be k sets S1,S2,…,Sk, where set Sj is the cluster consisting of all the points associated with centroid mj.

3. Recompute the Means

Once we have the sets S1,S2,…,Sk, we need to compute a new mean mj for each Sj. This new mean is just the average of all of the points in that set. Let

Sj={q1,q2,…,qcj}be the set of points associated with centroid mj, where cj is the number of points inside of Sj. Then the new mean is the n-dimensional point

mj=1cj(q1+q2+⋯+qcj).The formula above uses the rules for adding points and dividing points by numbers that we discussed above. If you do not understand this formula, please talk to a staff member immediately.

Now, because we have recomputed the means, some points might be closer to the centroid for a cluster they are not currently in than to the centroid for the cluster they are in. To fix this, we repartition the dataset by going back to step 2 and proceeding as before.

4. Repeat Steps 2 and 3 Until Convergence

The clustering algorithm continues this process: recompute the means and repartition the dataset. We want to keep doing this until the means stop changing. If all the means are unchanged after step 3, we know that partitioning again will produce the same clusters, which will have the same means, so there is no point in continuing. When this happens, we say that the algorithm has converged.

Sometimes it can take a very long time to converge — thousands of thousands of steps. Therefore, when we implement k-means clustering, we often add a “time-out factor”. This is a maximum number of times to repeat steps 2 and 3. If the algorithm does not converge before this maximum number of times, we stop anyway, and use the clusters that we do have.

Classes Used in Our Implementation

Classes are ideal for representing complex mathematical objects. For example, we saw in class how to use classes to represent rectangles or times of day. There are several interesting mathematical objects in the description above (points, centroids, sets/clusters) and we could use classes to represent all of these.

Deciding which of these things to create classes for is the core of object-oriented software design. For this assignment we have made these decisions for you, since it is not always easy to hit the right balance of complexity against structure the first time, and we want you to spend your time implementing rather than redesigning.

For our implementation of k-means, we decided not to use classes to represent points and centroids, because Python's built-in lists serve well enough. In particular, you should not use the Point class in tuple3d. That class only supports 3-dimensional points, but we would like to support points in any dimension.

In the end, we decided to create classes for three important concepts in k-means. First there is the entire dataset, represented by an instance of the class Dataset. Second, there is a cluster of data, represented by an instance of the class Cluster. Finally, there is the set of clusters created by k-means, represented by an instance of the class ClusterGroup.

Points are Lists

Throughout this assignment, a point will be represented as a list of numbers; both ints and floats are allowed. This is true for centroids as well. For example, here is an example of a possible centroid if we were working with 4-dimensional points:

mj is represented as the list [0.1,0.5,0.3,0.7].

In this example, if x stores the name of the list, then mj,2

(using the notation from a previous

section) is x[1], or 0.5, while mj,4 is x[3], or 0.7.

Note that our mathematical notation starts at 1, while list indices start at 0.

Class Dataset

The k-means algorithm starts with a dataset—a collection of points that will be grouped into clusters. This dataset is stored in an instance of the class Dataset. This class is very simple; it just holds the list of points, so its most important attribute is:

- _contents: A list of points inside this dataset

For example, if a dataset contains the points (0.1,0.5,0.3,0.7) and (0.2,0.0,0.2,0.1), then the attribute _contents is

[[0.1,0.5,0.3,0.7], [0.2,0.0,0.2,0.1]]

The points are all lists of numbers, and they all have to be the same length; remember that this length is the dimension of the data. We keep track of this dimension in a second attribute:

- _dimension: The dimension of all points in this dataset

The fact that all the points are supposed to have the right dimension is expressed by a class invariant: “The number of columns in _contents is equal to _dimension. That is, for every item _contents[i] in the list _contents, len(_contents[i]) == _dimension.”

You might argue that storing the dimension separately is redundant, since you could always look at the points in _contents to find it, but we would like the dimension to be defined even before we add any points to the dataset.

One of the things that you might notice about these attributes is that they all start with an underscore. As we mentioned in class, that is a coding convention to indicate that the attributes should not be accessed directly outside of the class Dataset. In particular, neither Cluster not ClusterGroup should access these attributes at all. When those two classes do need to access these attributes, they should do it through getters and setters. One of the things you will do in this assignment is implement these getters and setters.

Class Cluster

The next idea in the algorithm that we have defined a class for is a cluster Sj. To support this type, we defined the class Cluster. Objects of this class will actually hold two different values: the centroid mj and the set Sj. We do this because the centroid and cluster are associated with one another, so it makes sense to store them in a single object. We need two attributes corresponding to these ideas:

- _centroid: A list of numbers representing the point mj

- _indices: A list of integers that are the indices where the points of this cluster can be found in the dataset.

Note that we are not storing the points belonging to a cluster; we are only storing the indices of the points in the dataset. This simplifies Cluster because it does not need to worry about maintaining lists of lists. Code that works with clusters does need to be able to get to the points, however, so one of its instance variables is a reference to the dataset:

- _dataset: The dataset of which this cluster is a subset.

Once again, these attributes start with an underscore, so we want getters and setters if we are going to access them in the class ClusterGroup (it is okay if a method in Cluster accesses one of its own attributes with an underscore in it). So again, you need getters, like getCentroid and setters like addIndex. There is also a special getter getContents that returns a copy of the points to be used by the visualizers.

In addition to the getters and setters, the class Cluster is the logical place to put code that does computations involving a single cluster. Reading the description of the k-means algorithm above, we find that the two core operations with clusters are computing the distance from a point to a cluster's centroid and finding a new centroid for the cluster. These operations are handled by the two most important methods of the Cluster class:

- A method to find the distance from a point to the cluster (distance)

- A method to compute a new centroid for a cluster (updateCentroid)

Finally, this class contains the special Python methods __str__ and __repr__. This methods are to help you with printing clusters more informative while debugging.

Class ClusterGroup

Since experimenting with the number of clusters is an important part of using the algorithm, we want to create several clusterings of the same data. For this reason, the final class represents a cluster group of the dataset; that is, a set of clusters that together cover the whole dataset. The data stored in this class is simple: a list of clusters, and as with Cluster, a reference back to the dataset.

- _dataset: The dataset we are clustering

- _clusters: The list of current clusters S1,…,Sk

Once again, we have getters and setters for these attributes. But the important methods of class ClusterGroup are the core of the k-means algorithm.

- A method to partition the dataset, implementing step 2 of the algorithm (_partition)

- A helper method for _partition to find the nearest cluster to a point (_nearest_cluster)

- A method to update all the cluster centroids, implementing step 3 (_update)

Finally there are the methods that orchestrate the whole process:

- A method that executes one step of the process, updating the centroids and re-partitioning the data (step)

- A method that computes a clustering, from start to finish (run)

So a user of the class who just wants to cluster a dataset into k clusters would create a Dataset, add all the points to it, create a ClusterGroup with k clusters, and then call run on that cluster group.

You will notice that some of the methods have names starting with an underscore. The meaning is the same as with attribues: these methods are meant to be used internally rather than called directly by code outside the ClusterGroup class.

How to Work on the Assignment

This assignment involves implementing several things before you'll have the whole method working. As always, the key to finishing is to pace yourself and make effective use of all of the unit tests and the visualizers that we provide.

Assignment Source Code

You should download the zip archive code.zip from this link. Unzip it and put the contents in a new directory. This time, you will see that this directory contains several things:

a6.py- This module contains the whole implementation of the clustering algorithm. It is the only file that you will need to modify and submit.

a6test.py- This file is a unit test to provide you with even more help with this assignment. Is not a 100% complete unit test, but you are not required to finish it. We describe this in more detail below.

plot3d.py- This is a GUI that will help you see what is going on, using an example with 3D points. We describe how it works below.

mapcluster- This is a folder with several files. It provides another GUI that will let you work with geographic data where the points are 2D and represent (longitude, latitude) pairs. We describe how it works below.

datasets- This is a folder with many sample data sets. They are useful for working with the two GUIs provided. We explain them in the discussion of the two GUIs below.

Pacing Yourself

This assignment has a problem: there is an exam in the middle of the assignment. There is not much we can do about this. In alternating years, either this assignment or the color model assignment has a prelim in the middle. This year it is clustering.

To make matters worse, parts of this assignment will be covered by the exam. In particular, both the class Dataset (in its entirety) and Part A of Cluster are fair game. You should do everything that you can to finish these parts of the assignment before the due dates.

Once again, at the end of each part, we have a suggested completion date. While this is not enforced, we recommend that you try to hit these deadlines. If you cannot make these deadlines, it might be a sign that you are having difficulty and need some extra help. In that case, you should see a staff member as soon as possible.

Using the Unit Test

We have provided a unit test module that runs the three classes in the kmeans module through their paces. It is split into several phases corresponding to implementation strategy below. If you follow our suggested strategy for implementing the assignment, then the unit test will help you check that one part is okay before moving on to the next step.

This unit test script is fairly long, but if you learn to read what this script is doing, you will understand exactly what is going on in this assignment and it will be easier to understand what is going wrong when there is a bug. You are encouraged to sit down with a staff member to talk about the unit test, to help you understand it better.

With that said, this unit test is not complete. It does not have full coverage

of all the major cases, and it may miss some bugs in your code. In past semesters,

we have seen many unusual errors that were not caught by this unit test. You may

want to add additional tests as you debug, and that is fine. However, we do not want

you to submit the file a6test.py when you are done, even if you

made modifications to the file.

Using the Visualizers

There are two visualizers provided with this code. They help you see what your implementation is doing. They will only work properly once you have completed the entire assignment. However, even if you have not completed the assignment, they are still useful and will show some information.

Visualizing K-Means on 3D-points

The visualizer plot3d.py is designed to be run as a script. To get it working, navigate to the assignment directory and type

python plot3d.py

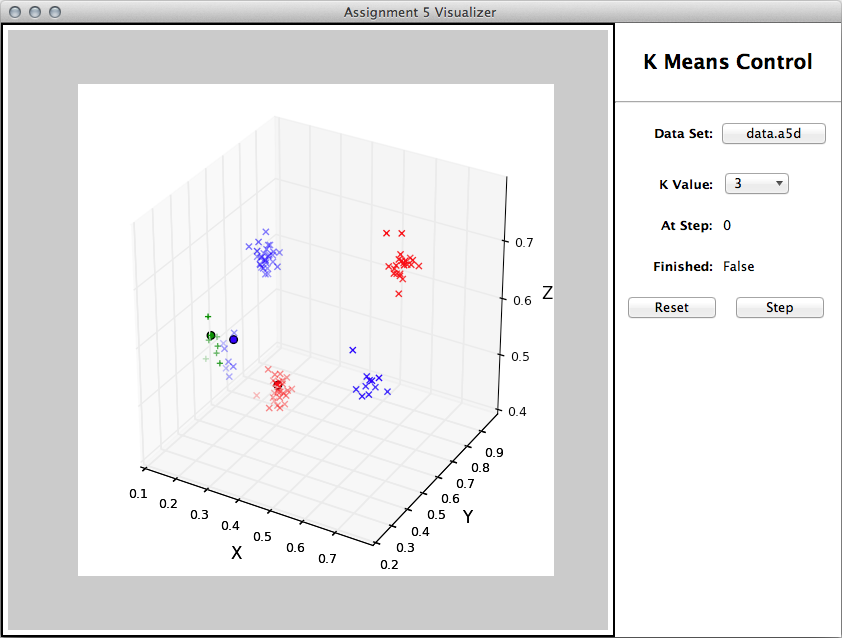

When you start the visualizer for the first time, it will look like the window shown below. You will see a empty plot of 3-dimensional axis, as well as some buttons and menus to the right. If you click on the window with the plot, you can move the axes. You will find this useful when you are trying to look at three-dimensional data.

This GUI is not going to do anything until you start implementing the various parts of this assignment. Once you have most of the assignment completed (up to Part C of ClusterGroup), you can use the visualizer to perform a k-means clustering on a three-dimensional data set.

Once you have everything working, click on the button to select a file and load the file sample.csv. Your initialization step will pick three random centroids and partition the data. The result will look something like the following:

Note that each cluster is a different color. The points in the cluster are crosses, while the solid circle is the centroid.

You can use the pull-down menu to change the k-value. There is a limit on the number of k values you can choose because there are only so many different colors that we could think of. Whenever you change the k, it will reinitialize the centroids. It will also reinitialize the centroids when you hit the reset button.

Each time you hit the step button, it will perform step 3 of the k-means algorithm. You can watch the centroids change as you hit the button. When the algorithm converges, you will see the word "True" after Finished and the step button will no longer do anything. Below is a picture of a finished computation with final versions of the clusters.

This visualizer will work with any CSV file whose last three values on each line are numbers. For example, the data files candy.csv and small_candy.csv contain the 4-dimensional data (sweetness, sourness, nuttiness, and texture) that we described above. The file plot3d.py can visualize these as well, but only the last three values (sourness, nuttiness, and texture).

This visualizer will not work with the map data H3N8.avian.usa.csv and H4N6.avian.usa.csv. The files contain map data, and the rows only have two numbers.

Visualizing K-Means on Longitude and Latitude

The second visualizer is contained in a folder mapcluster. To get it working, you actually run the folder as a script. In other words, navigate to the assignment directory (not the folder mapcluster). Then type

python mapcluster

This will execute the script __main__.py inside of this folder, which in turn imports the other modules inside of the folder. We did this because we did not write all of the GUI ourselves. Some of the files in this folder were written by other people, so it was easier to organize the GUI as a folder than as a single file.

When you run this program it will look very similar to plot3d.py. The main difference is that it displays a map (which will be blank until a dataset is loaded) of the world.

To try it out, load either the data set H3N8.avian.usa.csv or H4N6.avian.usa.csv. These files contain data about influenza in birds. Each data point indicates a location where a bird was found that had a particular strain of avian influenza (bird flu). We might want to understand geographical differences in what regions these two strains tend to appear in.

These files contain repeated points for when multiple specimens were found in the same location.

So the location of a cluster centroid may not be in what looks like the geographic center of its

points, because you cannot see when multiple points are on top of each other.

These files were created from publicly available data accessible from the Animal Surveillance section

of the

Influenza Research Database. See

Squires et al. (2012) Influenza research database: an integrated bioinformatics resource for

influenza research and surveillance. Influenza and Other Respiratory Viruses,

DOI: 10.1111/j.1750-2659.2011.00331.x.

Asserting Preconditions

Once again, we want you to get into the habit of asserting preconditions. Unlike the last assignment, we are not making a distinction between enforced and normal preconditions. If it is a precondition, you should enforce it with an assert statement.

Some of the preconditions are quite complete this time. For example, method addPoint in Dataset requires that point is a list of numbers. There are two different things to check here:

- point is a list.

- Every element of point is a number (int or float)

The second part requires a for-loop (or equivalent) to check, and we do no know how to mix for-loops and asserts. The best way to handle this precondition is to write a helper function that returns True if these are both true, and False if not. We have provided this helper is_point for you in a6.py. To use it in the method addPoint, you simply write

assert is_point(point)

As with Assignment 4, we are not requiring any error messages with you assert statements. Note that this is not the only part of the precondition in addPoint to enforce, but it is hardest part.

As you work on this assignment, you may decide to write more helpers to enforce your preconditions. When you write your helpers, you need to remember to write a specification for each helper. The helpers should not have any preconditions. They should be prepared for any argument. It is not very useful to check preconditions with function or method that has its own preconditions.

Recommended Implementation Strategy

In the file a6.py we have provided the definitions for the three classes discussed above. You have the class invariants and all method specifications, but almost all the method bodies are just stubs. Your task is to implement all the stubbed methods in these classes. We recommend starting with Dataset, since that is needed by the other classes. Then implement Cluster, and test that all its methods are working correctly. Finally implement and test ClusterGroup. When that is working correctly, your assignment is finished.

The Dataset Class

This class has six methods: the initializer, four getters and a setter. The specifications of all of these methods are included in the file a6.py. The getters are the most straight-forward. Most of the time, you just need to return the attribute.

The only tricky getter is getPoint. Remember that when you return a list, you are returning the name of the folder. So, once we get a point from the Dataset, we could change its contents, potentially corrupting the dataset. getPoint always copies the point before it returns it, ensuring that we cannot accidentally corrupt the data set. The unit test a6test.py has a test to make sure that you did this correctly.

Similarly, when you implement the initializer, note that the specification says that the class is supposed to keep a copy of the parameter contents. In this context this means that you need to set the _contents attribute to a new list that contains copies of all the points in contents. Just writing contents[:] won't do it—that creates a new list that has references to the same points. A question to consider is, how do you copy all those individual lists? You may need a loop of one form or another.

Finally, when you implement addPoint, be sure that it also adds a copy of the provided point to the dataset.

Asserting Preconditions

As a last step, you should go back to every method with preconditions and assert those preconditions. This includes both the initializer and the setters. Recall that preconditions are how we preserve the invariants, so asserting the preconditions prevents the invariants from being violated.

As we explained above, you may want helper functions to assert the preconditions. For example, the initializer requires that contents is a 2D list of either ints or floats. There are a three things to check here:

- contents is a list.

- Every element of contents is a point (list of numbers).

- Every element of contents has the same length.

It would be best to write a helper function called is_point_list to check these three.

Testing it Out

Once you complete the Dataset class, you can run the unit test script. If you see output that says

class Dataset appears correct

then you can be pretty confident about this part.

You can also try your code in the visualizers. Run either visualizer and load a data file as described above. The points will only be displayed as black crosses, and the algorithm controls will not work, but you will be able to see the data.

If you click on the Reset button, you will see some error messages in the terminal saying FAILED VISUALIZATION. This is perfectly normal. These error messages are the visualizer letting us know that it was not able to form clusters yet and that it shifted down to testing just the Dataset part of your code only.

It is extremely important that you finish Dataset by Sunday, November 9. This guarantees that you have written a complete class before the exam, as this will be one of the questions on the exam.

The Cluster Class

The class Cluster is a little more involved that Dataset, so we have broken it up into two parts: Part A and Part B. Each part has its own test procedure in a6test.py, so you can fully test one part before moving on to the next.

Part A: Getters and Setters

The specification for the Cluster class mentions the following instance attributes:

Instance Attributes:

_dataset [Dataset]: the dataset this cluster is a subset of

_indices [list of int]: the indices of this cluster's points

in the dataset

_centroid [list of numbers]: the centroid of this cluster

Extra Invariants:

len(_centroid) == _dataset.getDimension()

0 <= _indices[i] < _dataset.getSize(), for all 0 <= i < len(_indices)

Once again, these attributes begin with an underscore, which means that you will need to implement getters and setters. Once again these are straight-forward. The only unusual one if getContents. the return value is supposed to be a list containing (copies of) the points themselves, not the indices of the those points. You will need to loop over the indices, creating copies of the corresponding points from the database and collecting those copies in a newly created list.

You will also need to implement the initializer in this part of the assignment. When you are done, remember to assert all your preconditions.

Part B: distance and updateCentroid

The last two methods are more complex methods that are necessary for the k-means algorithm. To implement distance, refer to the definition of euclidean distance given earlier in this document.

The function updateCentroid is the most complex function in this class. You will need to first go through all the points, adding up their coordinates. You need the sum of the first coordinates of all the points in the cluster, the sum of all the second coordinates, and so on. After that, you will need to divide by the number of points in the cluster. Finally, you need to decide whether the centroid has changed. The function numpy.allclose is mentioned in the specification. This function takes two lists of numbers and tells you whether the corresponding numbers from the two lists are all very close in value.

When you are done, remember to assert all your preconditions.

Testing it Out

Once you complete each of these two parts, run the unit test. If everything is working, you will get the appropriate message.

Once you complete both parts, you will see that the visualizers have a lot more functionality. When you load up a data file and click the Reset button, you will see all points in the data set as blue markers in the plot window. In addition, you will see a blue circle with black border representing an initial centroid.

There will still be the error message FAILED VISUALIZATION printed out somewhere in the command shell. This is normal.

Ideally, you should try to finish Cluster by Tuesday, November 10. That means you will have completed two classes and can spend the rest of the week studying for the exam. At the very least, you should complete Part A before the exam, leaving only Part B for after the exam.

The ClusterGroup Class

The last class to implement is the ClusterGroup class. As before, you need to implement the methods following their specifications. The methods in this class do a lot, but because of all the work you did in the lower-level classes, the code for these methods are reasonable.

Once again, we have broken this assignment up into several parts. Each part has its own test procedure in kmean_test.py.

Part A: The Initializer

The initializer has the job of setting up the clusters to their initial states so that the first iteration of the algorithm can proceed. Each cluster needs its centroid initialized, but will start with no data points in it until the first partitioning step. You will need to create a list of Cluster objects, and each one needs to be created using a point for its centroid. We draw these points at random from the dataset, but we do not want to select the same point twice, so simply selecting k random indices into the dataset will not do it.

Fortunately, in the random package there is a function random.sample that selects a number of items from a list. The returned list has no duplicates in it. You should use this function to select which points from the dataset will become the seeds of clusters.

The specification for the initializer also supports the caller specifying exactly which points to use as cluster seeds. This is primarily for testing. Your code will have to do something a bit different depending on whether the seed_inds parameter is supplied. Once again, remember to assert all your preconditions.

There is only one getter to implement in Part A. Since ClusterGroup accesses other classes, but no classes access it, it does not need a lot of getters.

Now that the exam is over, try to finish Part A of ClusterGroup by Saturday, November 14. You should also have Part B of Cluster done by this point as well.

Part B: Partitioning

In the next step, you implement the two methods necessary to partition the data set among the different clusters. When implementing the _nearest_cluster method, you should use a loop over the list of clusters, since they are the possible candidates. During the execution of your loop, you may want to keep track of both the nearest cluster found so far and the distance to the nearest cluster found so far. An interesting question (with more than one good answer) is what you should initialize these values to.

The _partition method separates all the points into clusters. It can be very simple since the distance computation is already implemented in Cluster.

Try to finish Part B of ClusterGroup by Monday, November 16.

Part C: Update

The next two methods are used to perform a single step in the k-means algorithm. The _update method involves updating all the clusters, which is easy because the core operation is already implemented in Cluster. The only complication is keeping track of whether all the centroids remained unchanged. One approach is to do something similar to what you did for finding the nearest cluster: keep track of whether a changed centroid has been seen so far.

The step method is simple once you have _update and _partition. If you do it right, it is only two lines.

Try to finish Part C of ClusterGroup by Tuesday, November 19. Notice this is only one day after the previous part. By this point you have completed most of the hard work and the method implementations should actually be getting easier.

Part D: Run

The final method run involves calling step repeatedly. The only issue is that you have to know when to stop. This suggests a while-loop with a certain stopping condition.

This method takes an argument, so you should remember to assert your preconditions. Once you have implemented this method, you are done with the assignment. Update 11/16/14: We forgot to include the precondition for this method. The preconditions is maxstep is an int >= 0.

Testing it Out

Once you complete each part of ClusterGroup, run the unit test. If everything is working, you will get the appropriate message, just like with Cluster.

You can also test this part in the visualizer. When you load a data file, you should be able to run the entire clustering process. You can see the initial clusters once the Clustering initializer is working. Once part C is done, you can seeing the process of refining clusters step by step. You should be able to visually confirm the operation of the algorithm; after each iteration, each point should have the same color as the nearest centroid.

You might find it interesting to try different numbers of clusters for the avian flu datasets, or to run k-means clustering on them several times, and see how the means move around. Note that if you have “too few” clusters, points that seem far apart end up in the same cluster; but if you have “too many” clusters, you get clusters that seem to lie “too close” to each other. For example, with the H4N6 data, sometimes you get more than one cluster for the Alaska locations, which does not seem right; but sometimes you get the Alaska and California points all in the same cluster, which may also not seem right. Cluster analysis can be finicky. But you should often see the algorithm “discovering” clusters that correspond to identifiable geographic regions, which is kind of cool. Do you see any correlation with lakes and waterways?

Finishing the Assignment

Once you have everything working you should go back and make sure that your program meets the class coding conventions. In particular, you should check that the following are all true:

- There are no tabs in the file, only spaces (in Komodo Edit tabs look like arrows if whitespace is visible)

- Functions are each separated by two blank lines.

- Lines are short enough (80 chars) that horizontal scrolling is not necessary.

- The specifications for all of the functions are complete and are docstrings.

- Specifications are immediately after the function header and indented.

At the top of a6.py you should have three single line comments with

(1) the module name, (2) your name(s) and netid(s), as well as the identity of any

other sources (documents or people) that contributed to your solution, and (3) the date you finished the

assignment.

Upload the file a6.py to CMS by the due date: Thursday, November 20th at 11:59 pm.

We do not want any other files, even if you added more tests to a6test.py.