Automatic Bounding of Programmable Shaders for Efficient Global Illumination

| Author | Affiliation |
|---|---|
| Edgar Velázquez-Armendáriz | Cornell University |
| Shuang Zhao | Cornell University |
| Miloš Hašan | Cornell University |
| Bruce Walter | Cornell University |
| Kavita Bala | Cornell University |

ACM SIGGRAPH Asia 2009 (December 2009)

Abstract

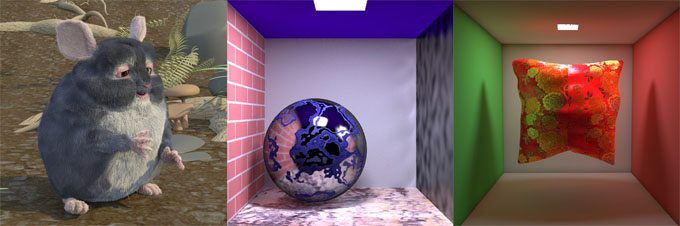

This paper describes a technique to automatically adapt programmable shaders for use in physically-based rendering algorithms. Programmable shading provides great flexibility and power for creating rich local material detail, but it allows the material to be queried in only one limited way: point sampling. Physically-based rendering algorithms simulate the complex global flow of light through an environment, but they rely on higher-level queries of material properties, such as importance sampling and bounding, to solve high-dimensional rendering integrals efficiently.

We propose using a compiler to automatically generate interval versions of programmable shaders. These interval versions provide the higher-level query functions needed by physically-based rendering without requiring user intervention or expertise. We demonstrate programmable shaders in two such algorithms, multidimensional lightcuts and photon mapping, on a wide range of scenes with complex geometry, materials, and lighting.
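The idea of an "interval version" of a shader can be illustrated with operator overloading. The sketch below is a minimal stand-in, not the paper's compiler. It assumes a hypothetical Blinn-Phong-style shader, `blinn_phong`, written once against generic arithmetic. The same function is then evaluated either on plain floats, which gives a point sample, or on a hypothetical `Interval` type, which gives a range that encloses every value the shader can take over a whole box of inputs. Outward rounding, which a robust implementation would need, is omitted for brevity, so the enclosure holds only up to floating-point rounding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Closed interval [lo, hi]; each operation returns an enclosure of all results."""
    lo: float
    hi: float

    def __post_init__(self):
        if self.lo > self.hi:
            raise ValueError(f"empty interval [{self.lo}, {self.hi}]")

    @staticmethod
    def lift(x):
        # Treat a plain number as the degenerate interval [x, x].
        return x if isinstance(x, Interval) else Interval(x, x)

    def __add__(self, other):
        o = Interval.lift(other)
        return Interval(self.lo + o.lo, self.hi + o.hi)

    __radd__ = __add__

    def __sub__(self, other):
        o = Interval.lift(other)
        return Interval(self.lo - o.hi, self.hi - o.lo)

    def __rsub__(self, other):
        return Interval.lift(other) - self

    def __mul__(self, other):
        # The extremes of a product lie at combinations of the endpoints.
        o = Interval.lift(other)
        p = (self.lo * o.lo, self.lo * o.hi, self.hi * o.lo, self.hi * o.hi)
        return Interval(min(p), max(p))

    __rmul__ = __mul__

    def contains(self, x):
        return self.lo <= x <= self.hi


def max0(x):
    """max(x, 0) for floats or intervals (monotone, so apply it to the endpoints)."""
    if isinstance(x, Interval):
        return Interval(max(x.lo, 0.0), max(x.hi, 0.0))
    return max(x, 0.0)


def pow_n(x, n):
    """x**n for a non-negative base and integer n >= 0 (monotone on that domain)."""
    if isinstance(x, Interval):
        if x.lo < 0:
            raise ValueError("pow_n expects a non-negative base")
        return Interval(x.lo ** n, x.hi ** n)
    return x ** n


def blinn_phong(n_dot_l, n_dot_h, kd=0.6, ks=0.3, shininess=20):
    """Hypothetical shader, written once; it accepts floats or Intervals."""
    return kd * max0(n_dot_l) + ks * pow_n(max0(n_dot_h), shininess)


if __name__ == "__main__":
    # Point query: a single shading sample.
    print(blinn_phong(0.8, 0.95))
    # Bound query: every n.l in [0.5, 0.9] and every n.h in [0.7, 1.0] at once.
    bound = blinn_phong(Interval(0.5, 0.9), Interval(0.7, 1.0))
    print(bound)
```

A renderer could, for example, treat `bound.hi` as an upper bound on the material term over a whole cluster of lights or directions when deciding whether that cluster needs refinement. Point sampling alone cannot answer that kind of query. Plain interval arithmetic can overestimate a range when a variable appears more than once in an expression (the dependency problem), so the resulting bounds are conservative but not always tight.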

Downloads

| Paper | PDF (~2.5MB) |

Acknowledgements

This work was supported by NSF CAREER 0644175, NSF CPA 0811680, NSF CNS 0403340, and grants from Intel Corporation and Microsoft Corporation. The first author was also supported by CONACYT-Mexico 228785. The third author was partly funded by an NVIDIA Fellowship. The Eucalyptus Grove and Kitchen environment maps are courtesy of Paul Debevec (http://www.debevec.org/probes/).