MegaDepth: Learning Single-View Depth Prediction from Internet Photos

Zhengqi Li Noah Snavely

Cornell University/Cornell Tech

In CVPR, 2018

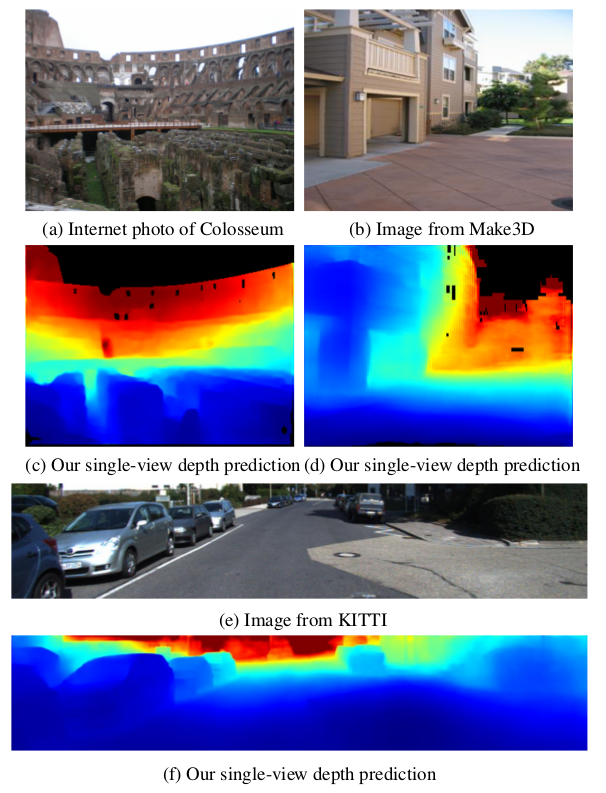

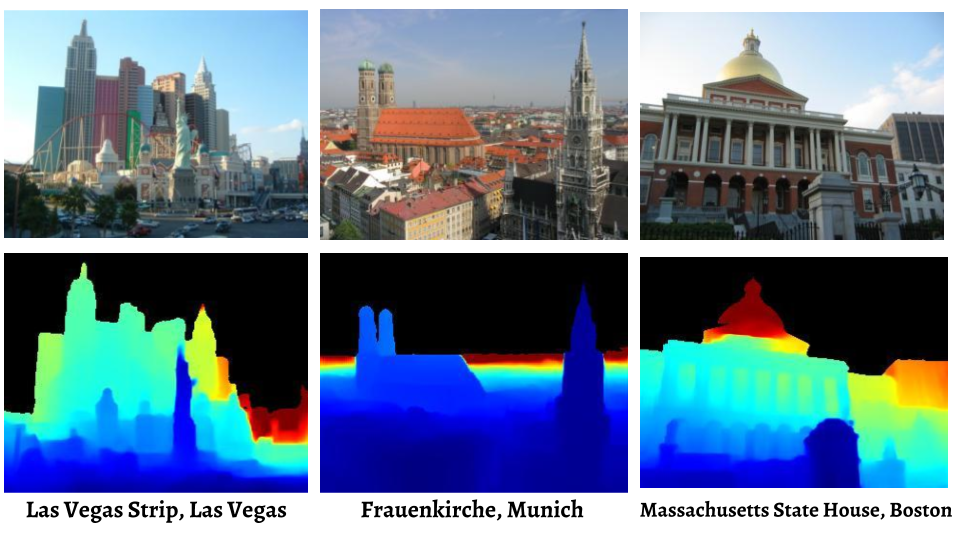

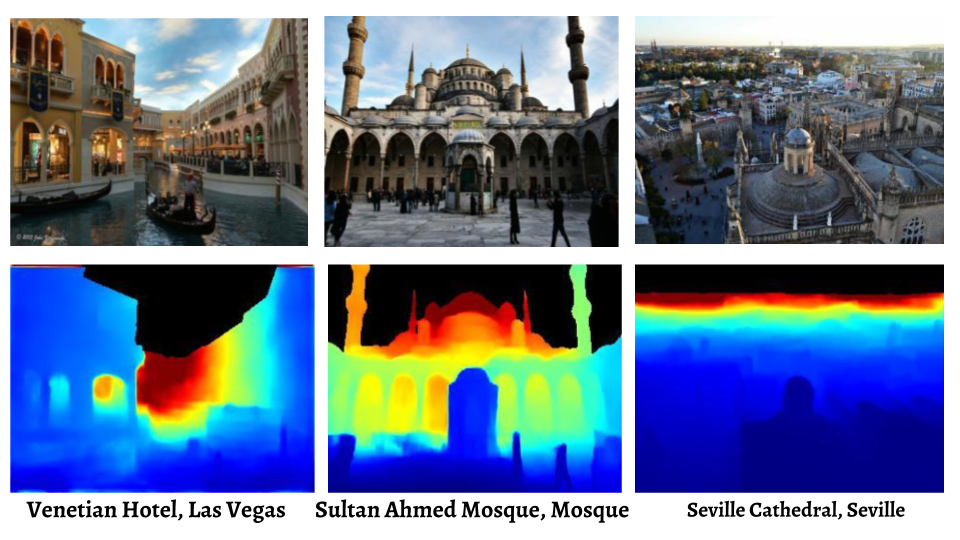

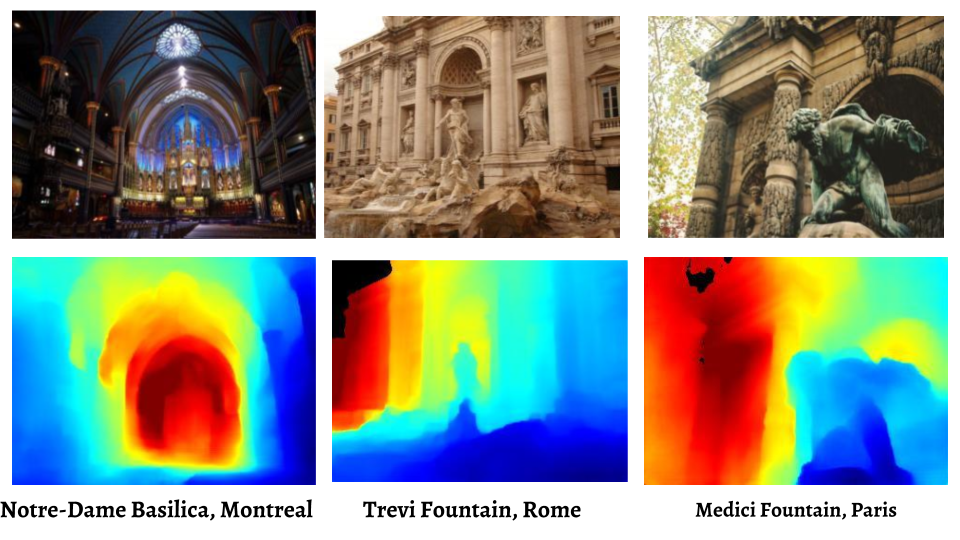

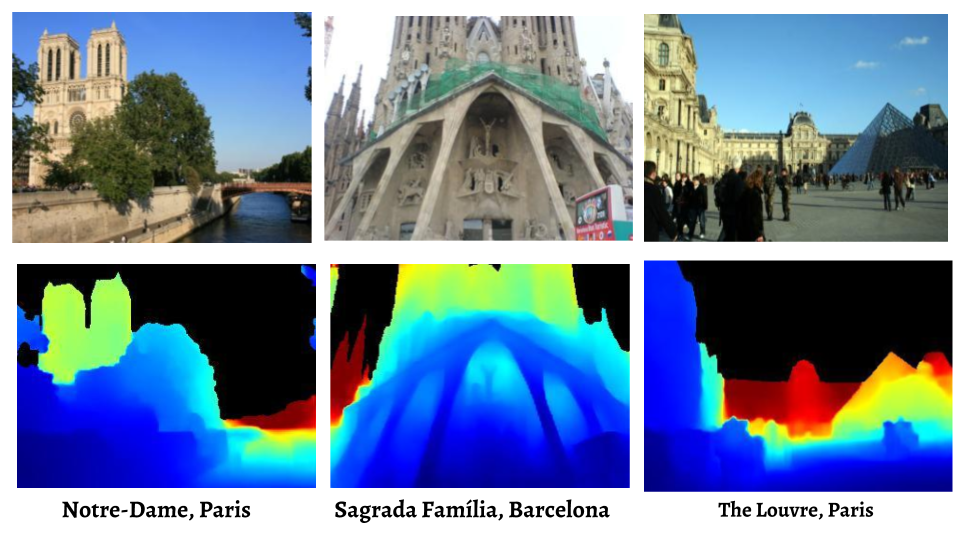

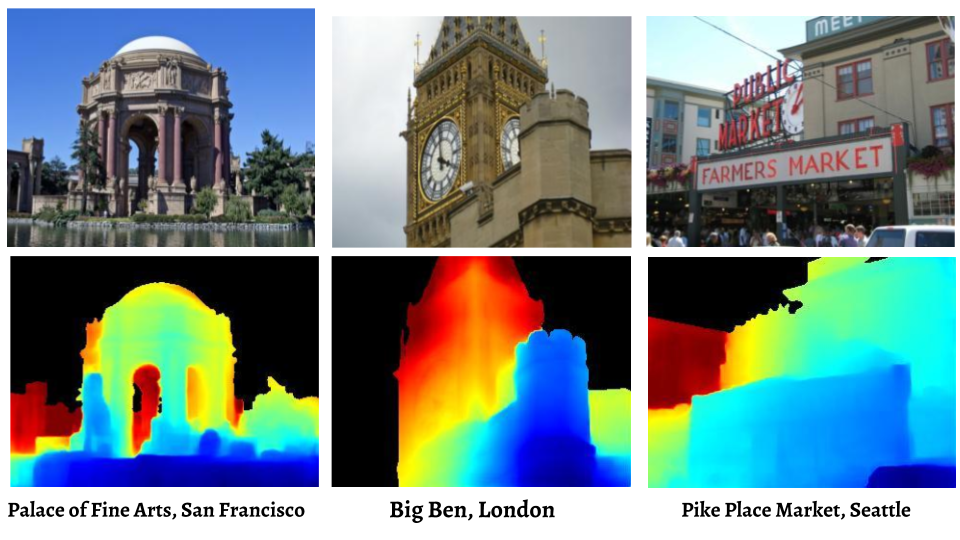

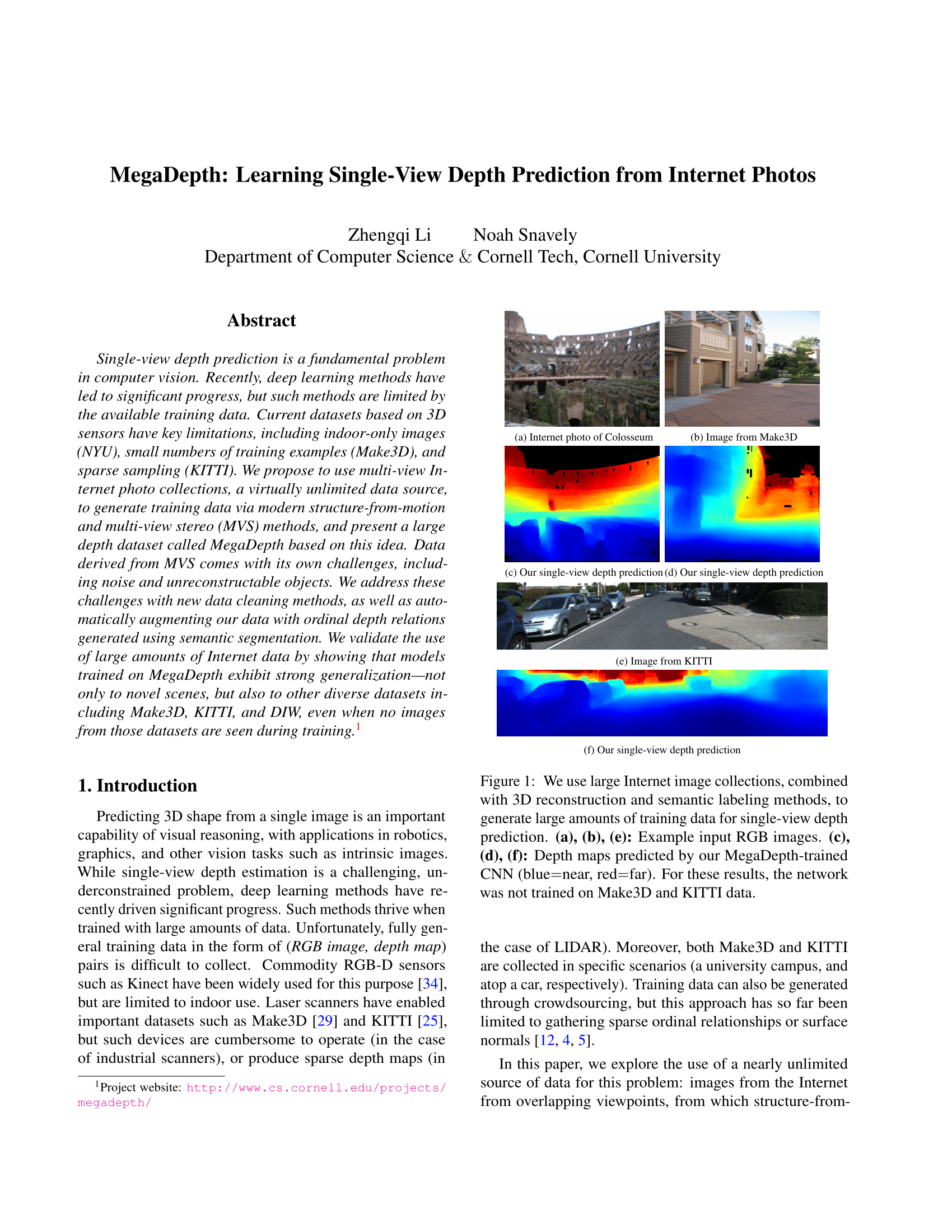

We use large Internet image collections, combined with 3D reconstruction and semantic labeling methods, to generate large amounts of training data for single-view depth prediction. (a), (b), (e): Example input RGB images. (c), (d), (f): Depth maps predicted by our MegaDepth-trained CNN (blue=near, red=far). For these results, the network was not trained on Make3D and KITTI data.

Abstract

Single-view depth prediction is a fundamental problem in computer vision. Recently, deep learning methods have led to significant progress, but such methods are limited by the available training data. Current datasets based on 3D sensors have key limitations, including indoor-only images (NYU), small numbers of training examples (Make3D), and sparse sampling (KITTI). We propose to use multi-view Internet photo collections, a virtually unlimited data source, to generate training data via modern structure-from-motion and multi-view stereo (MVS) methods, and present a large depth dataset called MegaDepth based on this idea. Data derived from MVS comes with its own challenges, including noise and unreconstructable objects. We address these challenges with new data cleaning methods, as well as automatically augmenting our data with ordinal depth relations generated using semantic segmentation. We validate the use of large amounts of Internet data by showing that models trained on MegaDepth exhibit strong generalization, not only to novel scenes, but also to other diverse datasets including Make3D, KITTI, and DIW, even when no images from those datasets are seen during training.

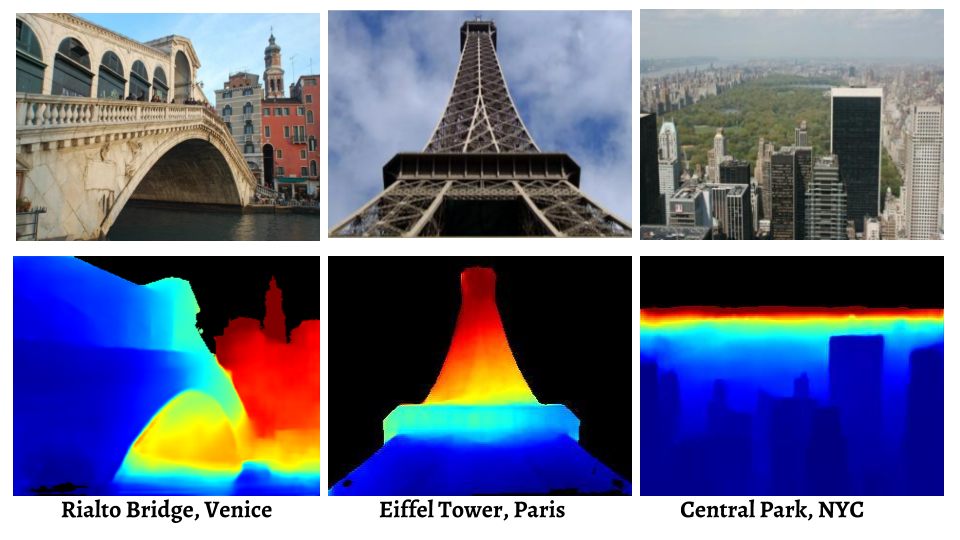

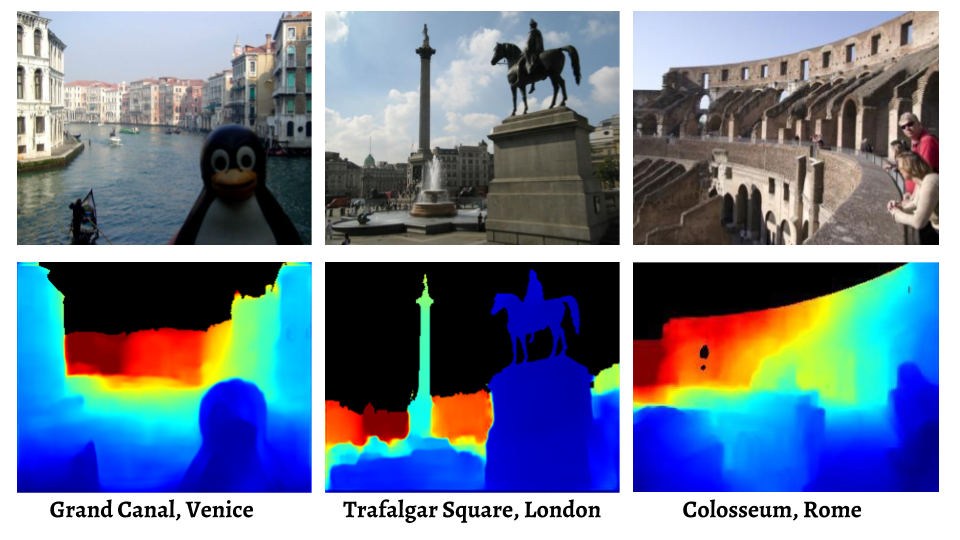

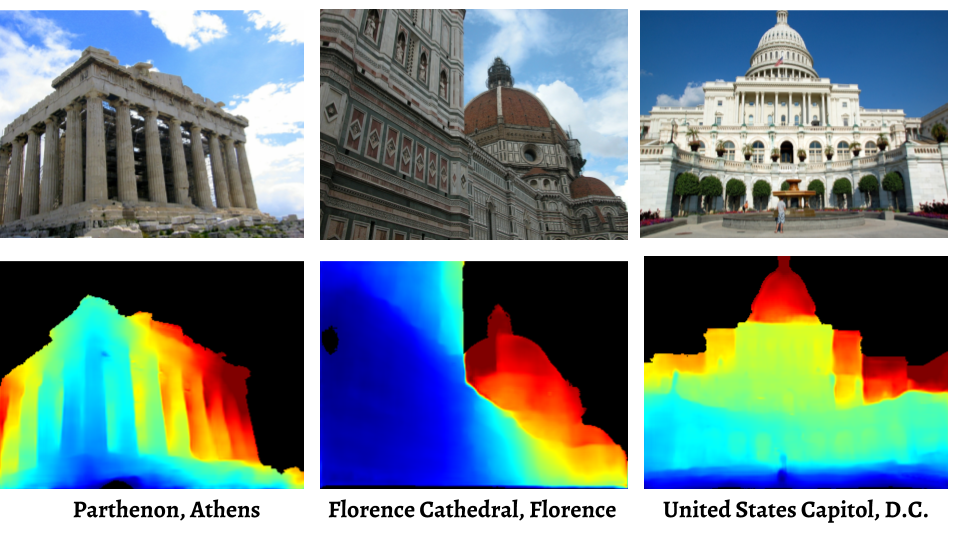

Examples of Single-View Depth Predictions on Internet Photos

[Updated Paper][Updated Supplemental]

Zhengqi Li and Noah Snavely. "MegaDepth: Learning Single-View Depth Prediction from Internet Photos".

@inProceedings{MegaDepthLi18,
  title={MegaDepth: Learning Single-View Depth Prediction from Internet Photos},
  author={Zhengqi Li and Noah Snavely},
  booktitle={Computer Vision and Pattern Recognition (CVPR)},
  year={2018}
}

Update (10/23/2018)

We realized there was an issue with a few of the evaluations presented in the original version of the paper. When evaluating MD-trained models on other datasets, we originally used validation data from those other (non-MegaDepth) datasets for early stopping in some cases. Although this is a technically valid way to avoid overfitting to dataset biases in cross-dataset evaluation, for the specific experiments in this paper we realized it is only fair to compare other models with our models trained and validated on their own datasets.

Therefore, we redid all the experiments, including running our models and other models on all the datasets so that all predictions are evaluated with the same metrics, and we have updated our paper to show results for models trained and validated on MegaDepth data, as originally intended. We also updated the results on the MegaDepth test set to make them consistent with the publicly released dataset and models. These updates are reflected in Tables 1 through 6 and in Figures 7, 8, and 9 (as well as in the figures in the supplementary material). The paper has been updated on arXiv.

In summary, the conclusions of our MegaDepth paper remain unchanged after these updates.

MegaDepth v1 Dataset

The MegaDepth dataset includes 196 different locations reconstructed with COLMAP SfM/MVS. (Update: images and depth maps are now provided at the original resolutions generated by COLMAP MVS.)

MegaDepth v1 SfM models

We also provide SfM models for all 196 locations around the world. Every model includes SIFT feature locations, sparse 3D point clouds, and camera intrinsics/extrinsics in both COLMAP and Bundler formats.
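As a rough illustration of how the camera intrinsics in a COLMAP model can be read, the sketch below parses COLMAP's text camera list (one line per camera: `CAMERA_ID MODEL WIDTH HEIGHT PARAMS[]`) and builds a 3x3 intrinsic matrix. It is not part of the released code. It assumes the model is available in COLMAP's text form, which this page does not state; a binary model would first have to be converted with COLMAP. The names `Camera`, `parse_cameras_txt`, `intrinsic_matrix`, and the sample values in `SAMPLE` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Camera:
    camera_id: int
    model: str
    width: int
    height: int
    params: List[float]


def parse_cameras_txt(text: str) -> Dict[int, Camera]:
    """Parse the contents of a COLMAP text camera list into Camera records."""
    cameras: Dict[int, Camera] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and header comments
        fields = line.split()
        cam = Camera(
            camera_id=int(fields[0]),
            model=fields[1],
            width=int(fields[2]),
            height=int(fields[3]),
            params=[float(v) for v in fields[4:]],
        )
        cameras[cam.camera_id] = cam
    return cameras


def intrinsic_matrix(cam: Camera) -> List[List[float]]:
    """Build K for a few common COLMAP camera models (distortion is ignored)."""
    if cam.model == "PINHOLE":  # params: fx, fy, cx, cy
        fx, fy, cx, cy = cam.params[:4]
    elif cam.model in ("SIMPLE_PINHOLE", "SIMPLE_RADIAL"):  # params: f, cx, cy[, k]
        f, cx, cy = cam.params[:3]
        fx = fy = f
    else:
        raise ValueError(f"camera model not handled in this sketch: {cam.model}")
    return [[fx, 0.0, cx], [0.0, fy, cy], [0.0, 0.0, 1.0]]


# Made-up sample data for illustration only (not taken from MegaDepth).
SAMPLE = """\
# Camera list with one line of data per camera:
#   CAMERA_ID, MODEL, WIDTH, HEIGHT, PARAMS[]
1 PINHOLE 1600 1200 1500.0 1510.0 800.0 600.0
2 SIMPLE_RADIAL 1024 768 900.0 512.0 384.0 0.01
"""

if __name__ == "__main__":
    for cam in parse_cameras_txt(SAMPLE).values():
        print(cam.camera_id, cam.model, intrinsic_matrix(cam))
```

Only three camera models are handled here, and any distortion parameter is dropped, so other models would need their own parameter layout.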

MegaDepth demo code for post-processing MVS depths

As requested by several people, we provide simple demo code (written in Matlab) for obtaining MVS depths from COLMAP with significantly fewer outliers, which can be used for training networks.

MegaDepth training/validation sets list

MegaDepth test set list

Precomputed sparse features for SDR evaluation in MegaDepth test set

Code

Simple demo training code

As requested, we provide simple demo training code. Note that this is not fully working code, but it should give you a basic idea of how to train the networks on our MegaDepth dataset.

Pretrained Models

If you want to compare your algorithms with ours both numerically and qualitatively in your paper, please consider using these models. See README for more explanation.

Note: for clarification, this model is intended for more general purposes. We trained the network on our MegaDepth dataset and the DIW dataset, initializing it from the NYU/DIW pretrained weights from the DIW website. Hence, it may perform better than what was described in the paper.

License information

The MegaDepth dataset (depth maps, SfM models, etc.) is licensed under a Creative Commons Attribution 4.0 International License. The original images come with their own licenses.

Acknowledgements

We thank the anonymous reviewers for their valuable comments. This work was funded by the National Science Foundation under grant IIS-1149393.