Photometric Ambient Occlusion

Daniel Hauagge, Scott Wehrwein, Kavita Bala, Noah Snavely


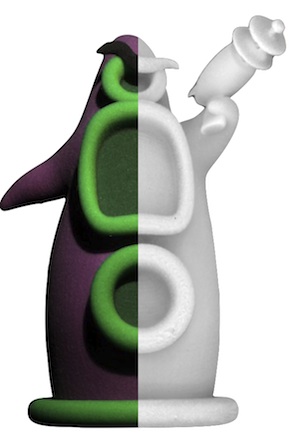

We present a method for computing Ambient Occlusion (AO) for a stack of images of a scene from a fixed viewpoint. Ambient occlusion, a concept common in computer graphics, characterizes the local visibility at a point: it approximates how much light can reach that point from different directions without getting blocked by other geometry. While AO has received surprisingly little attention in vision, we show that it can be approximated using simple, per-pixel statistics over image stacks, based on a simplified image formation model. We use our derived AO measure to compute reflectance and illumination for objects without relying on additional smoothness priors, and demonstrate state-of-the-art performance on the MIT Intrinsic Images benchmark. We also demonstrate our method on several synthetic and real scenes, including 3D printed objects with known ground truth geometry.
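To make the link between per-pixel statistics and AO concrete, here is a minimal sketch under assumptions of our own choosing; it is not the estimator from the paper, which also recovers reflectance. It uses the standard cosine-weighted graphics definition A(p) = (1/π) ∫ V(p, ω) max(0, n·ω) dω and assumes a single pixel with normal (0, 0, 1), known albedo, Lambertian shading, no interreflections, and one unit-intensity distant light per image, drawn uniformly over the hemisphere. Under these assumptions the expected intensity is albedo · A / 2, so twice the per-pixel mean divided by the albedo estimates A. The function names and the "pit" visibility test are hypothetical illustrations.

```python
import numpy as np


def sample_hemisphere(n, rng):
    """Draw n unit directions uniformly on the upper hemisphere (z >= 0)."""
    # For uniform sampling on the hemisphere, z = cos(theta) is uniform on [0, 1].
    z = rng.uniform(0.0, 1.0, n)
    phi = rng.uniform(0.0, 2.0 * np.pi, n)
    r = np.sqrt(1.0 - z**2)
    return np.stack([r * np.cos(phi), r * np.sin(phi), z], axis=1)


def render_stack(albedo, visible, light_dirs):
    """Assumed Lambertian pixel with normal (0, 0, 1), one light per image.

    I_k = albedo * V(w_k) * max(0, cos(theta_k)), where `visible` maps an
    (n, 3) array of directions to a boolean visibility mask V.
    """
    cos_theta = np.clip(light_dirs[:, 2], 0.0, None)
    return albedo * visible(light_dirs) * cos_theta


def ao_from_stack(intensities, albedo):
    """Estimate cosine-weighted AO from the per-pixel mean over the stack.

    With lights uniform on the hemisphere (pdf 1 / (2*pi)),
    E[I] = albedo * A / 2, hence A ~= 2 * mean(I) / albedo.
    """
    return 2.0 * intensities.mean() / albedo
```

As a usage example, a point at the bottom of a conical pit that only sees directions within a half-angle theta0 of its normal has analytic AO sin²(theta0). With `theta0 = np.pi / 4`, passing `lambda d: d[:, 2] >= np.cos(theta0)` as the visibility test and a few hundred thousand sampled lights, `ao_from_stack` should land close to 0.5; the remaining gap is Monte Carlo noise that shrinks as the stack grows.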

Updates

- 2015-10-23: Updated the data archive to include the ground truth ambient occlusion for Tentacle used in the paper.

- 2015-07-26: An extended version of our CVPR paper will appear in a special issue of PAMI featuring the best papers of CVPR 2013.

- 2014-10-07: Our follow-up to this work, which explores how the method can be extended to work with outdoor illumination, was presented at BMVC 2014.

- 2013-08-19: Small revisions to Figure 3 of the paper to highlight that the angles alpha and theta are measured with respect to the point normal (the original version of the paper can be obtained here).

Downloads

BibTeX entry

@inproceedings{hauagge_cvpr2013_photoao,
  title = {Photometric Ambient Occlusion},
  author = {Daniel Hauagge and Scott Wehrwein and Kavita Bala and Noah Snavely},
  booktitle = {Proceedings of CVPR},
  year = {2013}
}

Acknowledgments. This work was supported in part by the NSF (IIS-0963657, IIS-1149393, and IIS-1111534) and the Intel Science and Technology Visual Computing Center. We also thank the following people for their help and advice: Wenzel Jakob, Sean Bell, Pramook Khungurn, Steve Marschner, and Albert Liu.