CGIntrinsics: Better Intrinsic Image Decomposition through Physically-Based Rendering

Zhengqi Li Noah Snavely

Cornell University/Cornell Tech

In ECCV, 2018

Abstract

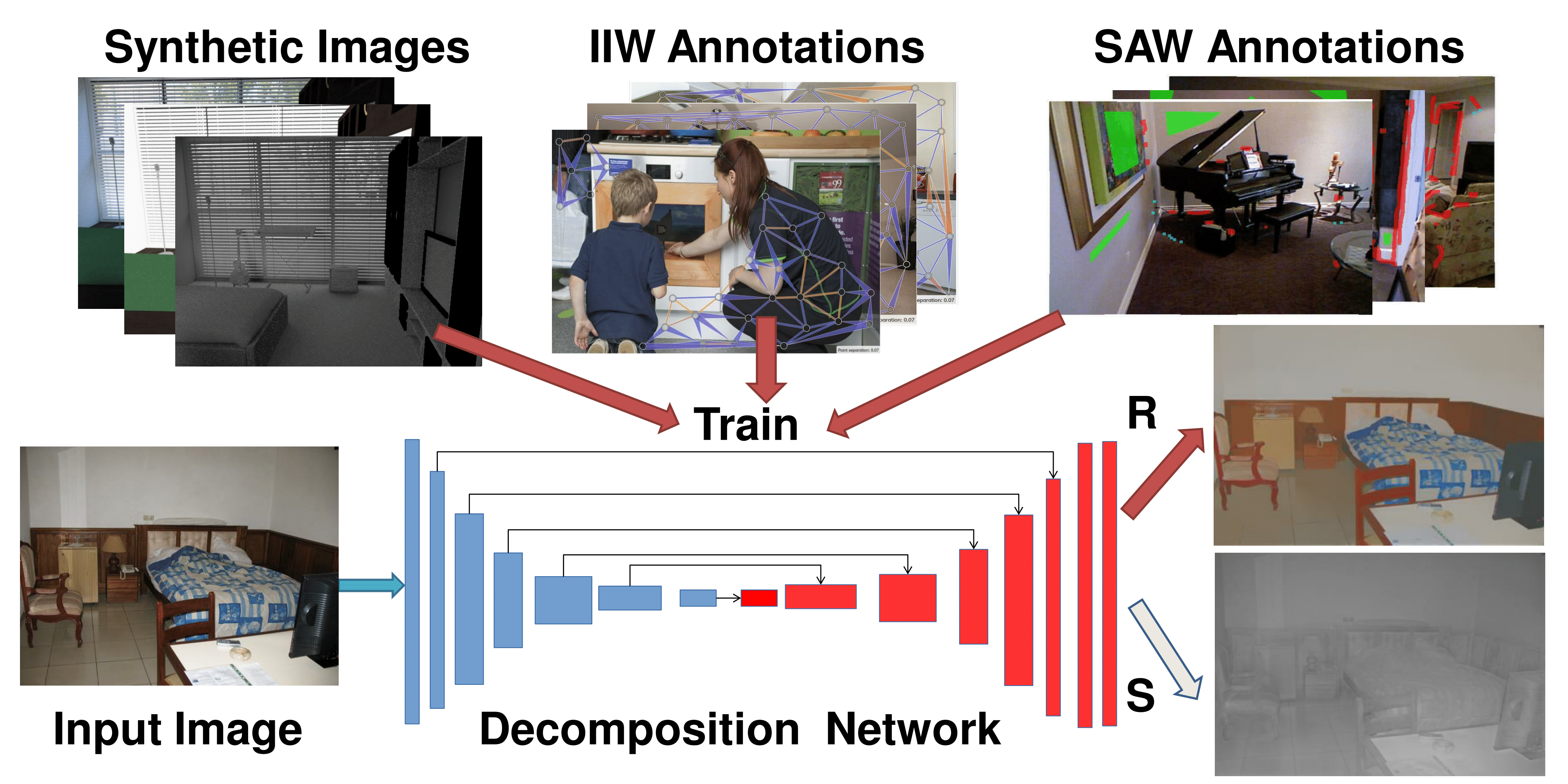

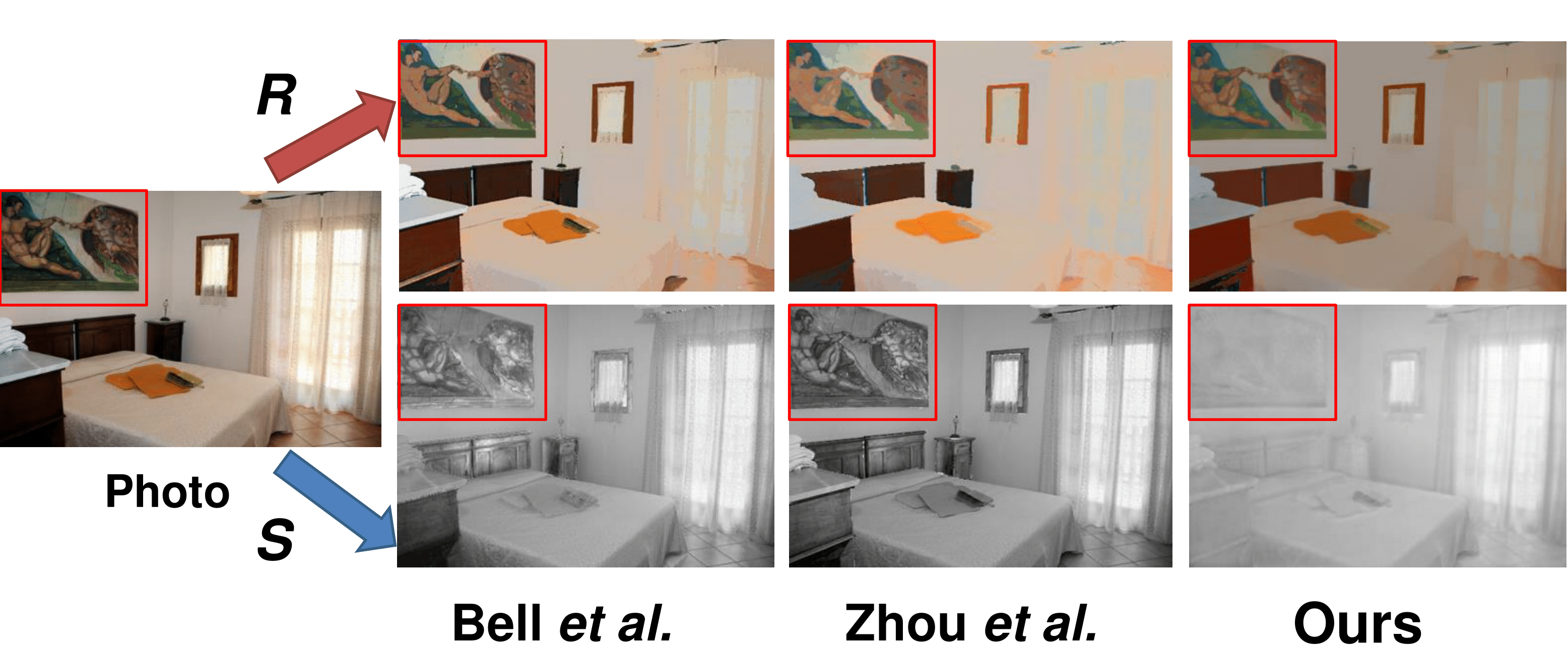

Intrinsic image decomposition is a challenging, long-standing computer vision problem for which ground truth data is very difficult to acquire. We explore the use of synthetic data for training CNN-based intrinsic image decomposition models, then applying these learned models to real-world images. To that end, we present CGIntrinsics, a new, large-scale dataset of physically-based rendered images of scenes with full ground truth decompositions. The rendering process we use is carefully designed to yield high-quality, realistic images, which we find to be crucial for this problem domain. We also propose a new end-to-end training method that learns better decompositions by leveraging CGIntrinsics, and optionally IIW and SAW, two recent datasets of sparse annotations on real-world images. Surprisingly, we find that a decomposition network trained solely on our synthetic data outperforms the state-of-the-art on both IIW and SAW, and performance improves even further when IIW and SAW data is added during training. Our work demonstrates the surprising effectiveness of carefully-rendered synthetic data for the intrinsic images task.

[Paper] [Supplemental]

Zhengqi Li and Noah Snavely. "CGIntrinsics: Better Intrinsic Image Decomposition through Physically-Based Rendering".

@inProceedings{li2018cgintrinsics,
  title={CGIntrinsics: Better Intrinsic Image Decomposition through Physically-Based Rendering},
  author={Zhengqi Li and Noah Snavely},
  booktitle={European Conference on Computer Vision (ECCV)},
  year={2018}
}

Dataset

The CGIntrinsics dataset includes more than 20,000 high-quality images rendered with the Mitsuba renderer:

We also provide the extra training data (such as our precomputed dense pairwise IIW judgements) that we use when training on IIW and SAW jointly with CGIntrinsics. Note that we do not provide the original IIW and SAW images and labels; these can be downloaded from the original IIW and SAW project websites.

Pretrained Models

This model corresponds to the full model ("Ours (CGI+IIW+SAW)") trained with CGIntrinsics, IIW, and SAW data, as described in the paper.

This model corresponds to the model trained on the CGIntrinsics dataset only ("Ours (CGI)"), as described in the paper.

* To get evaluation results on the IIW test set, download the IIW dataset and run 'compute_iiw_whdr.py'. You need to change judgement_path in this script to match your IIW data path; see the readme for more details.

* To get evaluation results on the SAW test set, download the SAW dataset and run 'compute_saw_ap.py'. You need to modify 'full_root' in this script to point to the SAW directory you downloaded; see the readme for more details.
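For readers unfamiliar with the IIW metric, the following is a minimal sketch of how a weighted human disagreement rate (WHDR) can be computed from pairwise reflectance judgements. It is not the code in 'compute_iiw_whdr.py': the function names, the tuple layout of each judgement, and the threshold delta = 0.1 are all assumptions made for illustration.

```python
def relative_darkness(r1, r2, delta=0.1):
    """Classify a pair of predicted reflectances.

    Returns "1" if point 1 is darker, "2" if point 2 is darker,
    and "E" if the two are about equal (ratio within 1 + delta).
    """
    eps = 1e-10  # guards against division by zero
    if r2 / max(r1, eps) > 1.0 + delta:
        return "1"
    if r1 / max(r2, eps) > 1.0 + delta:
        return "2"
    return "E"


def whdr(judgements, delta=0.1):
    """Weighted fraction of human judgements the prediction disagrees with.

    `judgements` is an iterable of (r1, r2, human_label, weight) tuples,
    a hypothetical layout: r1 and r2 are predicted reflectance values at
    the two points, human_label is "1", "2", or "E", and weight is the
    confidence attached to the human judgement.
    """
    error_sum = 0.0
    weight_sum = 0.0
    for r1, r2, human_label, weight in judgements:
        if relative_darkness(r1, r2, delta) != human_label:
            error_sum += weight
        weight_sum += weight
    return error_sum / weight_sum if weight_sum > 0 else None


# Illustrative values only, not taken from the IIW dataset.
example = [
    (0.2, 0.8, "1", 1.0),   # prediction agrees: point 1 is darker
    (0.5, 0.52, "E", 2.0),  # prediction agrees: about equal
    (0.9, 0.3, "1", 1.0),   # prediction disagrees: it says point 2 is darker
]
print(whdr(example))
```

Lower values are better: a WHDR of 0 means the predicted reflectance agrees with every human judgement, weighted by confidence.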

Training/Evaluation Code

Tonemapper Rendering Code

Acknowledgements

We thank Jingguang Zhou for his help with data generation. We also thank the anonymous reviewers for their valuable comments. This work was funded by the National Science Foundation through grant IIS-1149393, and by a grant from Schmidt Sciences.