Learning Intrinsic Image Decomposition from Watching the World

Zhengqi Li Noah Snavely

Cornell University/Cornell Tech

In CVPR, 2018 (Spotlight Oral)

Abstract

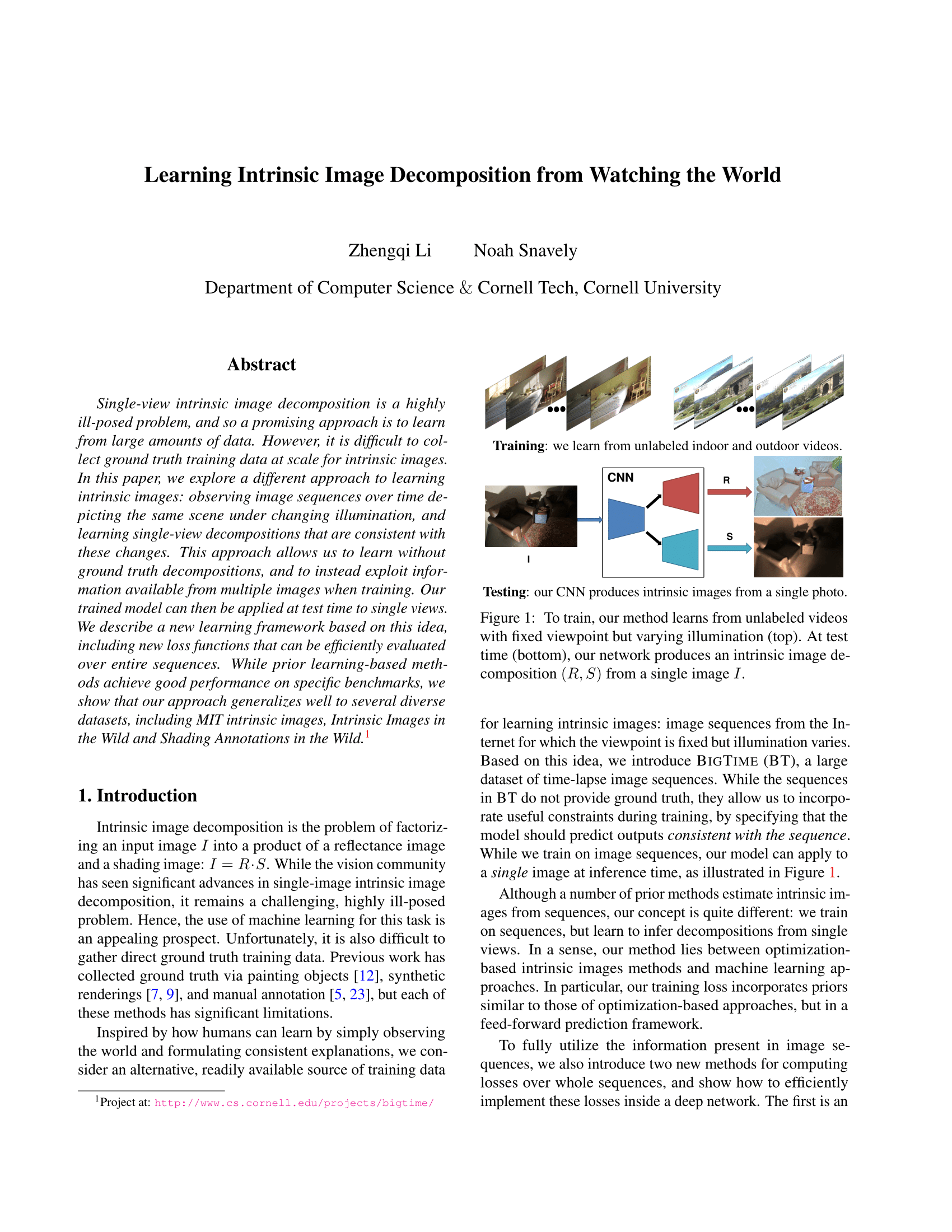

Single-view intrinsic image decomposition is a highly ill-posed problem, making learning from large amounts of data an attractive approach. However, it is difficult to collect ground truth training data at scale for intrinsic images. In this paper, we explore a different approach to learning intrinsic images: observing image sequences over time depicting the same scene under changing illumination, and learning single-view decompositions that are consistent with these changes. This approach allows us to learn without ground truth decompositions, and instead to exploit information available from multiple images. Our trained model can then be applied at test time to single views. We describe a new learning framework based on this idea, including new loss functions that can be efficiently evaluated over entire sequences. While prior learning-based intrinsic image methods achieve good performance on specific benchmarks, we show that our approach generalizes well to several diverse datasets, including MIT intrinsic images, Intrinsic Images in the Wild and Shading Annotations in the Wild.

[Paper] [Supplemental]

Zhengqi Li and Noah Snavely. "Learning Intrinsic Image Decomposition from Watching the World".

@inProceedings{BigTimeLi18,

title={Learning Intrinsic Image Decomposition from Watching the World},

author={Zhengqi Li and Noah Snavely},

booktitle={Computer Vision and Pattern Recognition (CVPR)},

year={2018}

}

Dataset

The BigTime dataset includes > 200 timelapse image sequences we collected from Internet.

Pretrained Model

* To get evalution results on IIW test set, download IIW dataset and run 'compute_iiw_whdr.py', you need to change judgement_path in this script to fit to your IIW data path, see readme for more details.

* To get evalution results on SAW test set, download SAW dataset and run 'compute_saw_ap.py'. You need modify 'full_root' in this script and to point to the SAW directory you download, see readme for more details

Training/Evaluation Code

Acknowledgements

We thank Jingguang Zhou for his help with data collection and running evaluations. We also thank the anonymous reviewers for their valuable comments. This work was funded by the National Science Foundation through grant IIS-1149393, and by a grant from Schmidt Sciences.