|

|||

|

Rakesh Kashyap (nk358@cornell.edu) Darshan Nagarajappa (dn226@cornell.edu) |

|

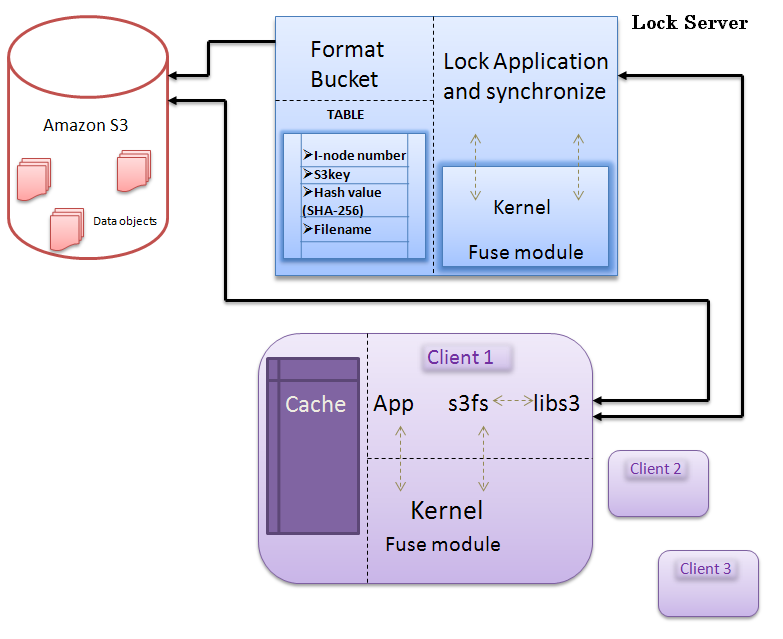

1. Abstract HPUFS is a system designed for high performance in Network File System by incorporating File caching and File locking. The project also involves the implementation of Automatic file synchronization tool which is used for backing up the data in the local systems to the remote Storage Server. . HPUFS is also designed to support Low Bandwidth Networks. The paper describes the initial design and expected use. A number of programs have implemented portable network file systems. However, they have been plagued by low performance. HPUFS is implemented using asynchronous APIs (libasync) which can improve the performance. 2. Introduction High Performance User Space Network File system (HPUFS) provides transparent remote access to files saved in remote data storage (S3). In this paper we describe the fundamental concepts implemented in High Performance User Space Network File system. We present the architecture, design and implementation of the cache layer in HPUFS. We also detail the implementation of major features such as file-indexing, caching, locking and a file synchronization tool. This tool is used for backing up the data present in the local system at a user specified location. The basic formulation of a network file system is designed to meet these need is presented here. The basic structure of the Network File System is independent of storage system considerations. The user is only aware of the symbolic addresses; all the physical addressing of a storage device is done by the HPUFS which is hidden from the user. HPUFS provides a capability of scaling easily to handle large number of clients accessing the system in parallel. Over slower, wide area networks, data transfers are very slow and can cause unacceptable delays. Therefore, to address these issues, HPUFS provides file caching. To reduce the network bandwidth requirements, HPUFS exploits the cross-file-similarities. |

|

| HPUFS divides the files into multiple blocks. Modification of any file by the application may contain inter-block similarities compared to the previous unmodified version. Any concatenation and copying of files also leads to significant duplication of contents. When transferring a file between the client and server, HPUFS identifies chunks of data that the storage server already has and avoids transmitting redundant data over the network. HPUFS supports multiple clients to access the file system. It avoids the problem of conflicts when multiple clients are accessing the same file using Locking functionality. It provides consistency. Files are copied safely back to the server once the file is closed or lock is released. HPUFS also allows a large number of clients to access the read only data securely. Read only data can have high performance and availability. In many cases, clients widely cache such data to improve performance and availability. The purpose of the lock service is to allow its clients to synchronize their activities and to agree on basic information about their environment. The primary goals include reliability and easy-to-understand semantics. HPUFS provides whole file reads and writes augmented with advisory locks. The protocol between the client and server consists of only two remote procedure calls: one to fetch the data and other to put the data back to the storage server. In our current implementation, we are assuming that the storage server is stateless. So, the complete implementation is done at the client side. | `` | |

|

3. Design And Implementation |

|

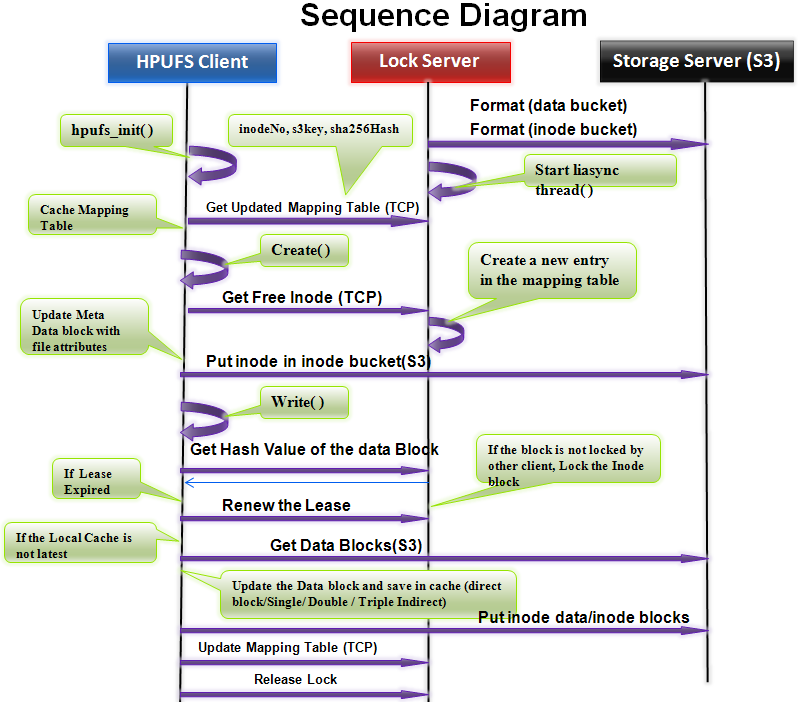

3.1 Asynchronous libasync Since HPUFS is a network file system, it should have facility to serve multiple clients simultaneously. In order to provide asynchronous behavior between multiple clients accessing the same file system, asynchronous I/O library, Libasync is used. This is an essential feature for managing simultaneous reads of the same file. But in case of writes to a same file, It is synchronized using locking service, where each client is not blocked for unlimited time but instead made to wait (tunable fixed duration of time. Hence, the reader-writer problem of synchronizing is much effective and realistically handled in the presence of libasync and lock server. |

|||

|

3.2 File Blocks

Implementation of Blocks is similar to that of blocks in UNIX file system; Indexed allocation scheme supporting single indirect, double indirect and triple indirect blocks. Size of the index/block is fixed to 4KB. Inode blocks are saved in the inode bucket and data blocks are saved in the data bucket. Hash value of the data block is computed using SHA-256 Hash function. Hash values are used for comparing two different versions of a file. |

||||

|

3.3 Caching . Basic Functionalities provided by the file system are, creating a new file, append any size of data to the existing file at specified offset, copying contents one file to another and deleting file. In any of these activities, caching becomes critical to boost the performance and increase responsiveness. Cache implementation involves a configuration parameter which specifies the maximum number of bytes it needs to cache. The maximum number of bytes depends on the storage server.Any request to read file data would result in a call from the caching layer down to the back-end storage server to fetch the data. |

| Then the caching layer would cache the data on the local file system so that the subsequent requests of the same data would be served at local file system. Any request to write file data would be satisfied by the caching layer by immediately writing data on to the cache and a separate flushing thread would flush the modified data down to the back-end storage system (s3 storage) at a later time (when the file is closed or flushed). The basic implementation would be to have an inode cache, which consists of complete list of inodes in the entire file system and a data cache which would be the list of data blocks that were recently used (active set). The data cache consists of data block of the files which are least recently used (LRU) and if a new data block of file is expected to arrive, then the oldest used block is replaced. Any read or write requests from the client will migrate through the data cache, which allows the requests to be cached for faster access (rather than going back out to the physical device S3). |

|

3.4 Locking Service Since S3 does not provide any synchronization for reader-writer problem involving multiple clients, it is handled by the Lock Server. |

|

|

|

3.5 Automatic File Synchronization Tool Automatic File Synchronization tool provides the functionality for user to specify the directory which he wants to back up to a storage server. Any changes to the directory or sub directories or files inside the directory will trigger a kernel event and once the application traps the kernel signal it automatically saves the copy of the data to the storage server. Since the File system is implemented using unix style multilevel blocks the implementation of backing up a directory is simple and effecient. This is because, if a user changes fewer data blocks of the entire file, then only those blocks whch are changed would be backed up in the Storage Server (Amazon S3 data bucket). Modified blocks can be identified by recalculating the new hash value of the data blocks and comparing it with the old hash value. This makes optimum usage of network by avoiding the whole file (which may have several unchanged block to be backed up) to be copied back to S3. Automatic file synchronization tool is an installation software. Once the user installs the tool and specifies the directory which he is interested in backing up, the selected directory is registered with the application. If the data is destroyed, user can always retrieve the backup data saved in the storage server. |

||

|

4. Downloads To Download the "HPUFS Paper (PDF)" or "Presentation" or "Source Code" Click on Corresponding Dowload button

|

5. Contact InformationIf you have any problem in installing or running our HPUFS, please do mail to Rakesh " nk358@cornell.edu " Or Darshan"dn226@cornell.edu" |

|

|

||

| copyright (c) 2009 Cornell University Last Modified 8th May 2009 | ||