GridControl is an umbrella name for a DOE/ARPAe-funded effort that unites GridCloud, a new platform jointly created by Cornell University and Washington State University for high assurance cloud computing, with a power grid simulation and state estimator. The resulting platform represents a powerful new solution for smart grid monitoring & control.

Project Overview

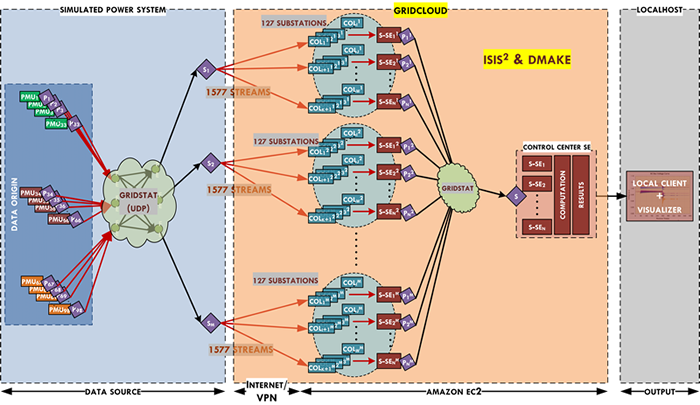

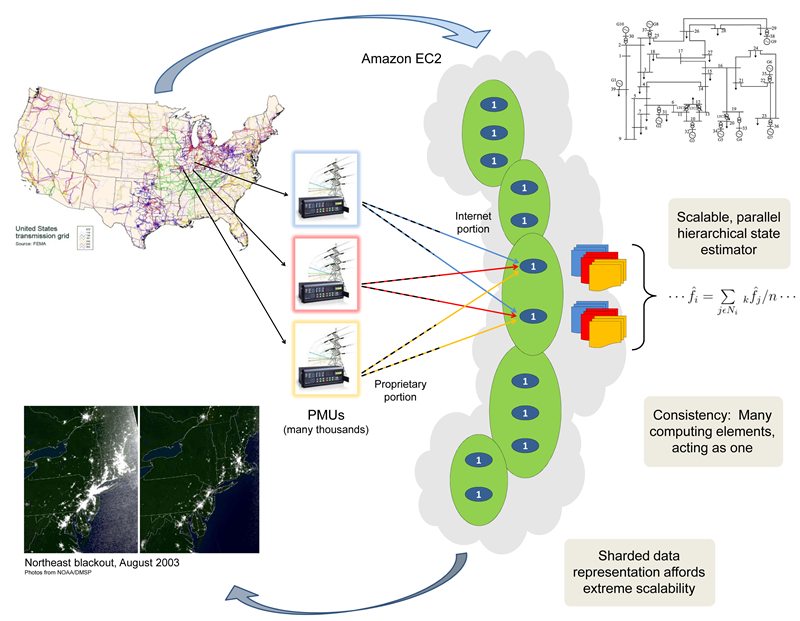

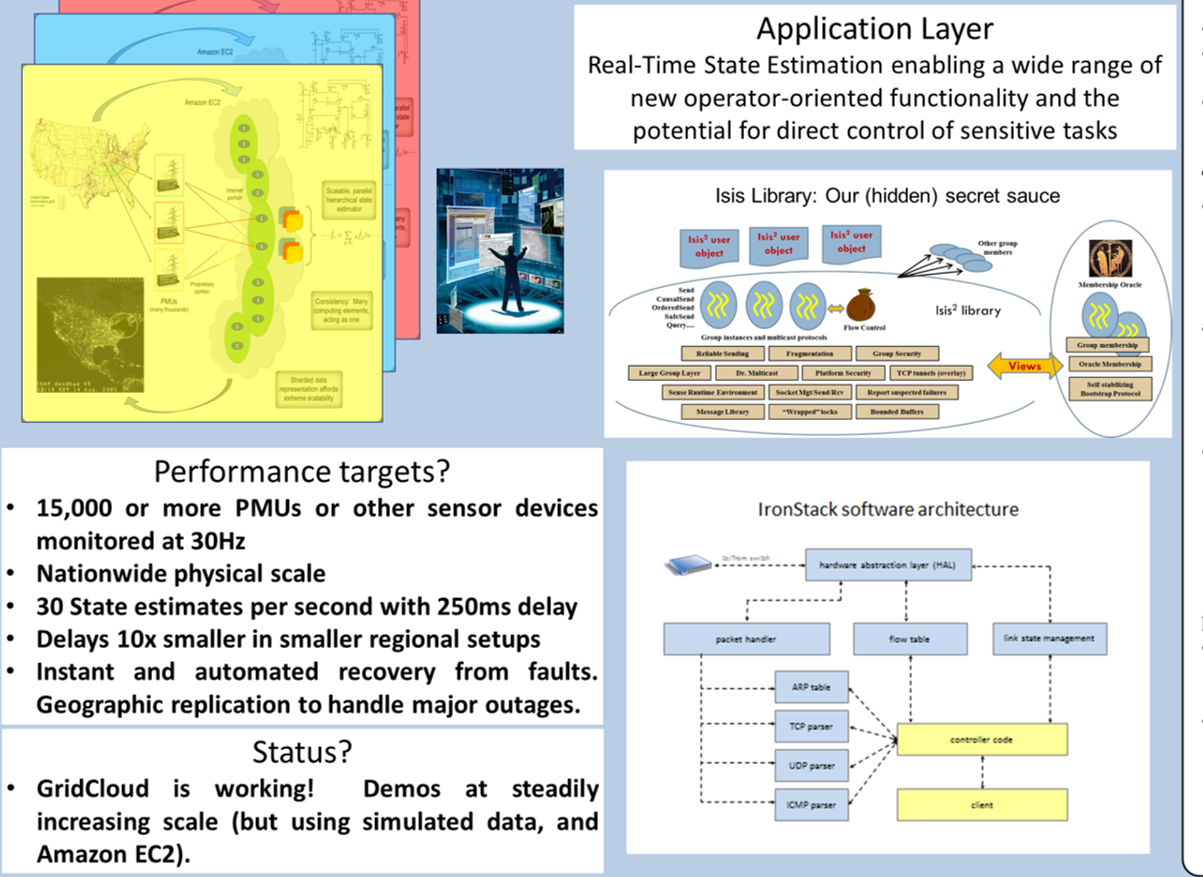

The Cornell University and Washington State University GridControl research yielded a platform that we refer to as the GridCloud system. GridCloud functions much like an operating system for hosting smart grid applications on cloud systems. Our initial focus has been on Amazon's AWS (both the public AWS and Amazon's government version), which we augment with additional tools to support 24x7 mission-critical application availability. GridCloud is composed of a set of standalone components that include the CloudMake management tool, the GridCloud Collaboration Tool, the IronStack SDN network controller, and the Freeze-Frame File System. These come together in the GridCloud platform. Our preliminary work has ported a grid simulation system and a real-time linear state estimator onto the platform, which we then evaluated by transmitting simulated data into it much as real PMUs would and then reconstructing the corresponding grid state. We achieved a latency of 100 to 125 ms even with injected component failures, scheduling delays and Internet network delays. GridCloud is an open source platform, available under the 3-clause BSD license.

Elements of the GridCloud Solution

The transformational energy management and renewable power deployment capabilities needed to create the next generation of the power grid share a common weakness: the most exciting concepts require a highly scalable and secure computing infrastructure that does not currently exist. Today the best match is with the “cloud computing” model, but existing cloud computing platforms lack the level of reliability and security needed to operate a nationally critical infrastructure.

One option is for each application developer working on the smart grid to set out to solve such problems on their own. Such an approach cannot scale: the underlying technical issues are daunting, and our own research on such systems, over a 25-year period, convinces us that without a reasonably standard approach, few developers would arrive at robust, self-managed solutions. Yet if only experts can create smart-grid software, then very few smart-grid applications could be deployed.

We created GridCloud to address this issue. In the system, Cornell University and Washington State University have brought together state of the art solutions, yielding a platform that embodies powerful, comprehensive responses to the key requirements. Using GridCloud, developers can slash the time and difficulty required to prototype and demonstrate new smart-grid monitoring and control paradigms, and achieve high confidence in the resiliency and security of their solutions.

GridCloud diagram

We view GridCloud not as a turn-key platform for PMU-based power grid state estimation, but rather as a flexible and extensible tool -- a building block that simplifies the otherwise challenging problems that dominate in cloud settings. Thus for us, state estimation is an important capability but also is offered as a demonstration: the first of many applications hosted on the platform. GridCloud integrates and standardizes tasks that any system of this kind must address, such as reliable data collection with good realtime properties and security guarantees. The resulting system looks as familiar as possible: as much as we were able to, we adopted familiar, standard APIs. As a result, GridCloud offers an easily customized platform that can be extended by application development teams seeking to deploy new capabilities that leverage the framework.

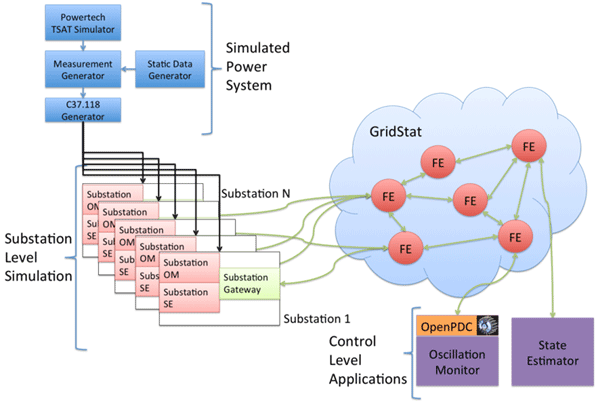

With funding from the ARPAe GridControl project in the GENI program, we also undertook a comprehensive experimental platform validation. Although academic researchers cannot obtain direct access to the real time network models and power grid state actually used in the bulk power grid, we created a high fidelity simulation, setting up our system in a realistic configuration that uses a network model drawn from public IEEE standard test cases based on the Western Interconnect, and then constructs simulated phasor measurement unit (PMU) data for this model under both steady state and contingency scenarios. We replay this data in realtime from clock-synchronized "data source" nodes situated in the Internet, sending the PMU data streams in duplicate or triplicate to overcome network delays. To explore scale we over-instrument the WEC model, simulating a situation in which each bus in the 198-bus WEC scenario has as many as 30 PMUs deployed on it. The resulting 18,000-feed configuration allows us to demonstrate the costs, delays and reliability of GridCloud, and to characterize its behavior under the same conditions that would be seen if it were being used to monitor, or control, the entire US Northeast bulk power grid. We can even simulate PMU failures or inconsistencies between the EMS report of network status and PMU data, which permits us to evaluate techniques for localizing the malfunctioning element.
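The text does not derive the 18,000-feed figure. One reading that is consistent with the paragraph, offered here as an assumption rather than the project's stated accounting, is that each of up to 30 PMUs per bus is replayed in triplicate. The arithmetic under that assumption:

```python
# Assumption (not stated in the source): the quoted feed count includes
# the triplicate replay of every simulated PMU stream.
BUSES = 198          # buses in the test scenario (from the text)
PMUS_PER_BUS = 30    # upper bound on PMUs per bus (from the text)
REPLICAS = 3         # triplicate replay, assumed to count toward "feeds"

pmu_count = BUSES * PMUS_PER_BUS      # distinct simulated PMUs
feed_count = pmu_count * REPLICAS     # network feeds arriving at the collectors

print(pmu_count, feed_count)
```

Under this reading the total is 17,820 feeds, which rounds to the quoted 18,000.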

GridCloud is made up of:

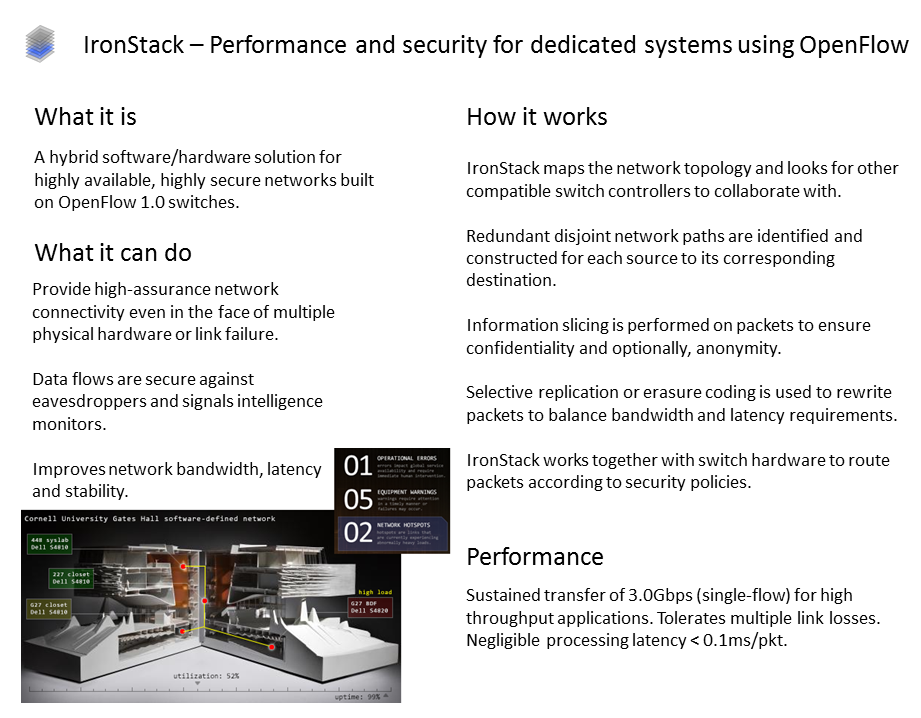

- IronStack, a novel network management tool that can help grid operators build and manage the dedicated network structures needed to link PMUs and other sensors to the hosted monitoring system, and to connect the monitoring infrastructure to actuators. IronStack is optional, but represents an answer to the daunting communication network management tasks that challenge today's power grid operators.

- CloudMake, a system management tool that runs on the cloud platform and manages both GridCloud itself and the various smart-grid applications deployed by users. Standard AWS tools will automatically restart applications but offer little help in dynamic configuration. In contrast CloudMake uses a constraint solver to dynamically optimize application layout and automate recovery after disruptive failures.

- The GridCloud data collection infrastructure captures incoming PMU data and PDC data streams, as well as EMS data associated with network model updates and other SCADA data sources. The data collector writes all received data into files to create a historical record and also relays it to the GridCloud state estimation subsystem. This system automatically establishes secure, replicated connections and will automatically reestablish connectivity if disruption occurs. As noted earlier, redundant data collection is employed to overcome network timing issues and ensure that critical data will still flow into the system even if some network links fail.

- The Freeze Frame File System (FFFS), a new file system for secure, strongly consistent real-time mirrored data sharing. FFFS holds the data recorded by the GridCloud data collectors, and supports rapid searches for old system states to enable a new kind of grid computation that can identify trends over time. One obvious use is to help understand why an instability occurred and how it evolved over time.

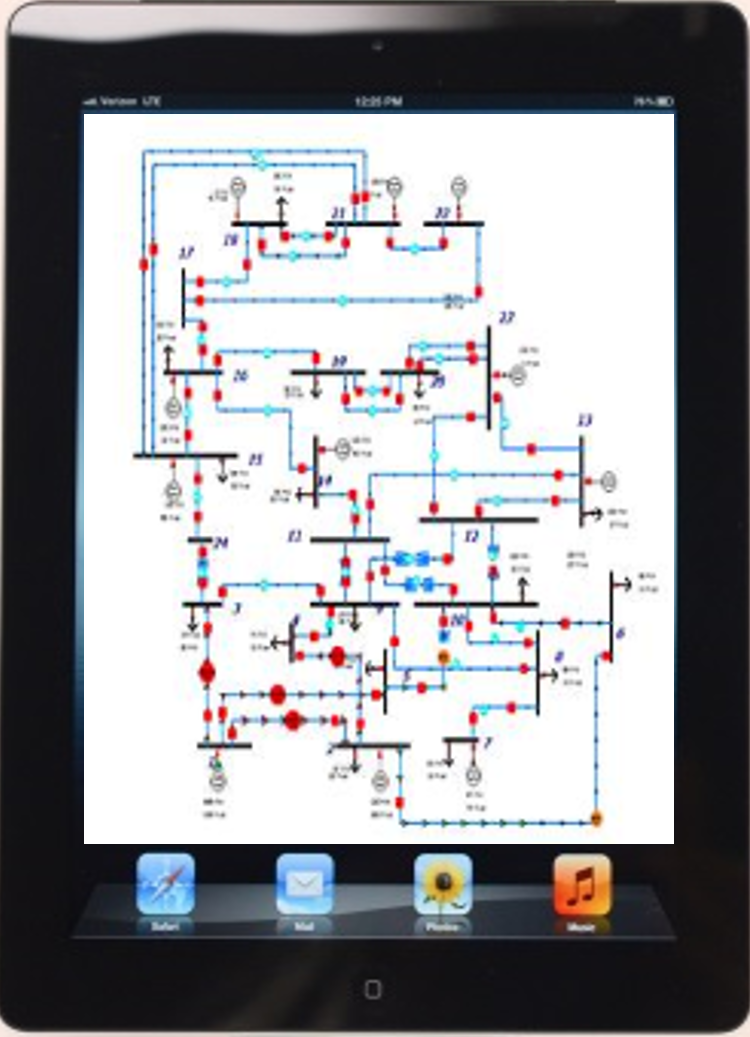

- The GridCloud Collaboration tool offers an iPad-like model to support quick drag-and-drop application creation for sharing data and collaborating securely to solve problems that require cooperation across a wide region.

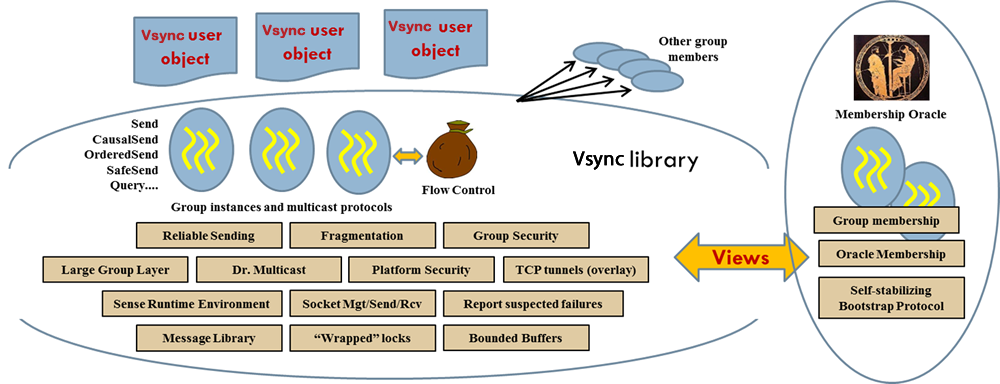

- Cornell's Vsync software library is used to create fault-tolerant, secure distributed cloud computing solutions. Vsync is employed internally by CloudMake, FFFS and the collaboration tool, but exists at a lower level than the GridCloud components on which we focus here: this is a tool for developers of new applications, whereas the higher level GridCloud solutions generally can be used with existing code and require no new programming.
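The redundant data collection described in the list above (each PMU stream sent two or three times, with every received sample archived and relayed to the state estimator) can be illustrated with a minimal sketch. The class and field names below are hypothetical and are not part of the GridCloud code base. The sketch assumes that a sample is uniquely identified by its PMU identifier and timestamp, and that only the first copy to arrive should be archived and relayed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One PMU measurement (hypothetical layout, for illustration only)."""
    pmu_id: str
    timestamp_us: int
    value: complex


class RedundantCollector:
    """Keeps the first copy of each sample and drops later duplicates."""

    def __init__(self):
        self._seen = set()     # (pmu_id, timestamp_us) keys already accepted
        self.archive = []      # stands in for the historical record on disk
        self.forwarded = []    # stands in for the relay to the state estimator

    def receive(self, sample):
        """Return True if the sample was new, False if it was a duplicate."""
        key = (sample.pmu_id, sample.timestamp_us)
        if key in self._seen:
            return False
        self._seen.add(key)
        self.archive.append(sample)
        self.forwarded.append(sample)
        return True
```

With triplicate delivery, whichever copy arrives first sets the latency, and the loss of any two copies is tolerated. A real collector would also need to bound the set of seen keys, for example by discarding keys older than some time window.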

All of these components are available from our download site as open-source technologies, under a standard 3-clause free BSD licensing regime.

Notice that the primary GridCloud applications do not include any power systems applications. This reflects our view that a platform is like an operating system, on which applications can be launched. However, as part of our project, we ported two important applications into the system:

- The GridCloud simulator, created by Washington State University, to simulate power grid state and data flows, and

- The Washington State University Linear State Estimator, which reconstructs a network state from realtime data flows and displays the resulting data graphically while also saving it into files for archival purposes. Internally, the LSE actually combines a version of OpenPDC ported to run in GridCloud, the WSU GridStat data bus, the LSE algorithm itself, and a data visualization and archiving tool.

GridCloud thus incorporates a number of substantial technologies, presenting them as a single solution that incorporates the elements required to make sure the platform is easily useable in realistic deployment scenarios.

GridControl, our DOE funding source, enabled us to create GridCloud. The GridControl project also defined a series of experiments which enabled us to fully qualify performance, scalability and other considerations.

GridCloud

Details: IronStack

Summary of IronStack.

IronStack is an optional component of GridCloud, aimed at the communication network that connects the PMU and PDC devices to GridCloud's cloud-hosted data center. Some utilities have in-house solutions, and would not need IronStack, but for those faced with creating such a system or desiring to upgrade a balky and idiosyncratic one, IronStack could be a good option. Developer Z. Teo has focused on creating an open source preliminary version for GridCloud, but is planning to spin off a commercialized version soon, with 24x7 support and deployment help.

IronStack focuses on the ways that power systems sensor technologies connect to services running on clusters or in cloud-styled data centers, and connects those data centers back to actuators that might control devices in the power grid. The data center could be a GridCloud instance, but nothing about the solution is specific to power grid uses. IronStack could thus be employed stand-alone or in combination with other kinds of systems.

The core goal of the IronStack layer is to offer a high-assurance replication and coordination technology for communication-network management and digital data routing. This is needed because many smart grid applications center on capturing data from sensors deployed over a wide area under challenging conditions, then computing control actions centrally, and then relaying the actions to the actuators that will carry them out. Power grid operators are not networking specialists, hence owning and operating a dedicated network that must run even during punishing storms, and do so largely unattended, is a major cost.

IronStack seeks to provide a seamless end-user experience in which the administration of the network is primarily through drag-and-drop actions carried out on system schematics that support simple physical intuition: "We are moving this server from that room to this other location; please adjust the network firewall rules appropriately", or "Make a 3-redundant connection from this PMU to that data collector in GridCloud." The system automatically uses redundant network routes to avoid disruption if an Internet delay occurs, can encrypt data for security, and will assist untrained network technicians in identifying damaged hardware and repairing it, without them needing to get an IT degree to understand the system.

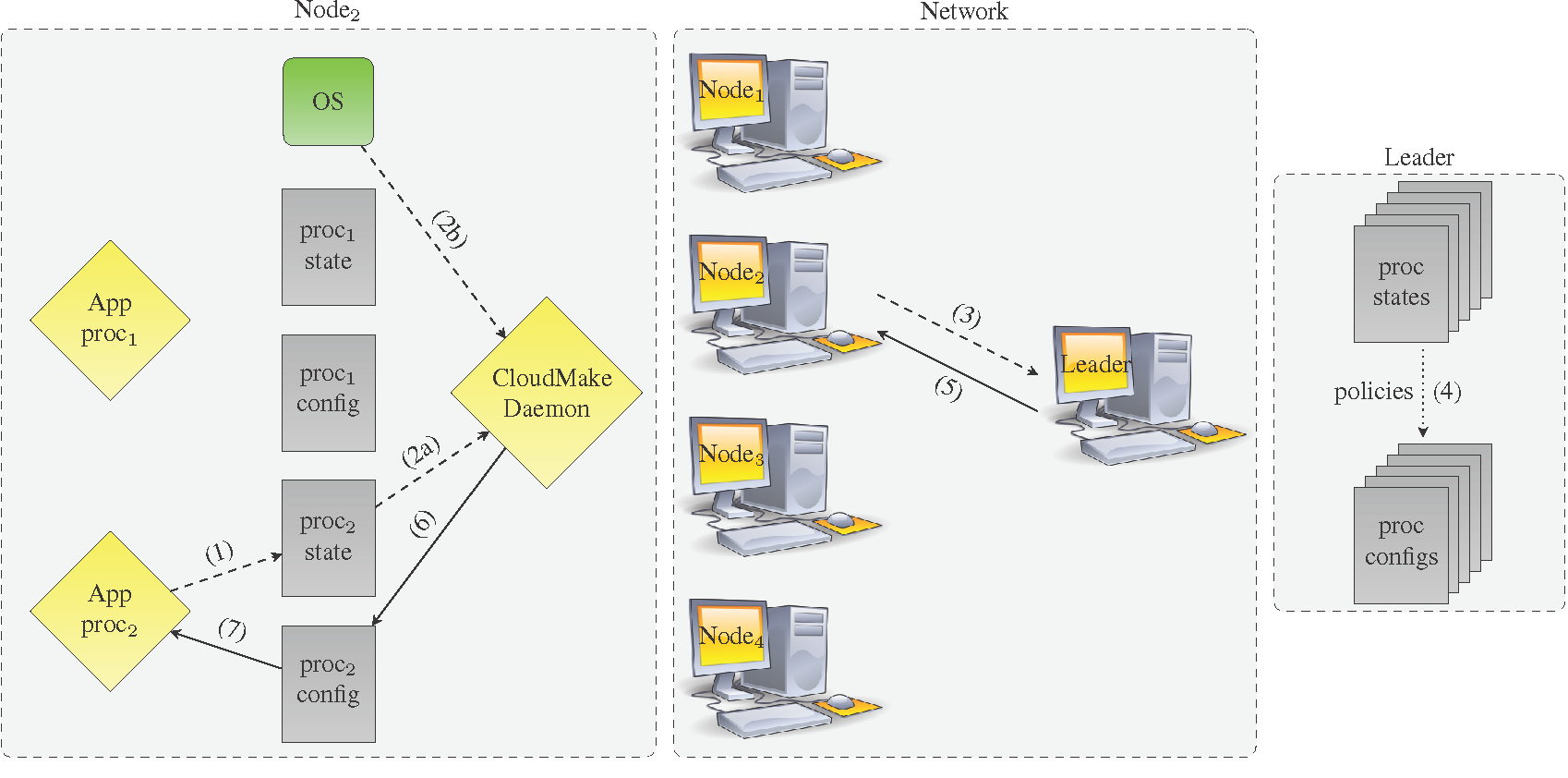

Details: CloudMake

A key task in operating a power grid analysis platform is to ensure that needed applications will be up and running 24x7, even when nodes come and go or crash, loads change, demands on the system evolve, or new applications are launched or terminated. In GridCloud we use a system called CloudMake for this purpose. CloudMake uses a syntax much like the Linux Makefile syntax, but whereas the standard Linux Make system is normally used to build binaries from source files, CloudMake is also able to sense node and program state. We do this by creating XML-formatted files that describe state. When those change, CloudMake will run the associated dependent rules to initiate any needed reconfigurations. As new applications are brought into GridCloud, CloudMake only needs to be instructed to manage them. It will handle the rest, such as auto-restart for system repair after failures, load balancing, mapping of the computation to the cloud computing nodes, and more. A built-in SMT constraint solver offers a simple way to tap into optimization software that will lay out components in a way that minimizes costs and, after failure, ensures the quickest possible self-repair. Despite its extensive functionality, CloudMake is very easy to use.
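The idea of Make-style rules that fire when an XML state description changes can be sketched in a few lines. This is not CloudMake's syntax or API: the `RuleEngine` class, the rule name, the file name `nodes.xml` and the XML layout are all hypothetical, chosen only to show the dependency-driven pattern described above.

```python
import xml.etree.ElementTree as ET

# Hypothetical state descriptions; the real CloudMake XML schema is not shown here.
UP_XML = '<nodes><node id="lse-1" status="up"/></nodes>'
DOWN_XML = '<nodes><node id="lse-1" status="down"/></nodes>'


class RuleEngine:
    """Runs every rule that depends on a state file whose content has changed."""

    def __init__(self):
        self._rules = []       # list of (target, dependencies, action)
        self._last_seen = {}   # state file name -> serialized content last seen

    def rule(self, target, deps, action):
        """Register a rule: run `action` when any file in `deps` changes."""
        self._rules.append((target, tuple(deps), action))

    def update_state(self, name, xml_text):
        """Record new state; return the targets whose rules were run."""
        root = ET.fromstring(xml_text)
        serialized = ET.tostring(root)
        if self._last_seen.get(name) == serialized:
            return []          # unchanged state: nothing to rebuild
        self._last_seen[name] = serialized
        fired = []
        for target, deps, action in self._rules:
            if name in deps:
                action(root)
                fired.append(target)
        return fired


restarted = []


def restart_down_nodes(root):
    """Example action: note every node reported as down for restart."""
    for node in root.findall("node"):
        if node.get("status") == "down":
            restarted.append(node.get("id"))


engine = RuleEngine()
engine.rule("restart-lse", ["nodes.xml"], restart_down_nodes)
```

Calling `engine.update_state("nodes.xml", DOWN_XML)` runs the `restart-lse` rule and records `lse-1` for restart, while resubmitting identical state runs nothing, mirroring how Make skips targets whose dependencies have not changed. The constraint-solver placement step is outside the scope of this sketch.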

Details: Freeze Frame File System

The Freeze-Frame File System (FFFS) offers secure, strongly consistent real-time mirrored data sharing. The name evokes the image of a film strip, and this intuition is appropriate because FFFS offers a novel real-time snapshot capability. FFFS allows applications to explore the evolution of the power grid network state at any desired temporal resolution. This contrasts with standard parallel computing tools, which take a data set from a single instant in time and apply large numbers of CPUs to a computation on that one data set. With FFFS we can also parallelize by spawning a set of tasks that access the power grid state at various points in time, permitting analysis of trends that evolved over a period of time, and we can leverage the massive on-demand parallelism of the cloud. The FFFS system also handles data replication, maintains a remote backup, and is designed to detect any tampering, so that the past state can be used as a trustworthy record of precisely how the power grid state evolved over time.
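The snapshot idea can be illustrated with a small in-memory sketch: every update is kept with its timestamp, a read at time t returns the most recent state at or before t, and a trend analysis spawns one task per point in time. The names `TimeIndexedLog`, `read_at` and `trend` are hypothetical and are not the FFFS interface, and the sketch ignores replication, backup and tamper detection.

```python
import bisect
from concurrent.futures import ThreadPoolExecutor


class TimeIndexedLog:
    """Append-only record of states, readable as of any past time."""

    def __init__(self):
        self._times = []    # timestamps, kept in ascending order
        self._states = []   # state recorded at the matching timestamp

    def append(self, t, state):
        if self._times and t < self._times[-1]:
            raise ValueError("updates must arrive in time order")
        self._times.append(t)
        self._states.append(state)

    def read_at(self, t):
        """Return the latest state recorded at or before time t."""
        i = bisect.bisect_right(self._times, t)
        if i == 0:
            raise KeyError(f"no state recorded at or before t={t}")
        return self._states[i - 1]


def trend(log, times, metric):
    """Evaluate `metric` on the snapshot at each time, one task per snapshot."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda t: metric(log.read_at(t)), times))
```

For example, after appending bus-voltage states at t = 0, 1 and 2 seconds, `trend(log, [0.5, 1.5, 2.5], lambda s: s["v"])` returns the voltage seen at each of the three instants, in order. The temporal resolution is chosen by the caller rather than fixed when the data is written.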

Details: GridCloud Collaboration System

The GridCloud Collaboration Tool is a tool for creating a kind of sharable virtual iPad. The basic configuration displays the current power network and can show the status of any line at a click. Various "apps" can then be dragged onto the network and this triggers actions, like a transient stability analysis or listing "similar network states seen in the past". The resulting application can then be shared with operators in the same ISO, but also with those in a neighboring ISO or distribution network. Over time, we see the collaboration tool as a powerful and extensible framework. When data is shared, the tool guarantees real-time consistency as needed.

We envision many uses for the tool because power systems operators are cautious about what they share, limiting the shared system state to data that should be continuously available to their collaborators and in a consistent real-time state. However, if a contingency arises there may be a sudden need to share data that is not part of this normally shared state. In such cases, GridCloud's collaboration tool can be used to dynamically pull normally proprietary data into its collaborative framework for a brief period of time. The resulting virtual iPad can be shared selectively. Later, when the problem is resolved, closing the application has the effect of permanently withdrawing access to the sensitive data.

Details: Vsync Cloud Computing Toolkit

One of the most difficult challenges faced by software developers who wish to create highly secure, highly available applications is that existing development environments are poorly matched to such goals. Indeed, many who use the cloud become aware of its CAP philosophy, in which consistency is sacrificed to achieve higher performance and better availability during network failures (partitioning). Yet inconsistency is dangerous when managing the power grid: it could lead to errors in which equipment damage might ensue, or costly outages.

The Vsync system is the product of a 25-year DARPA-funded research effort to create the ultimate software platform for assisting in creation of fault-tolerant and secure distributed applications with strong consistency. Vsync adopts the widely popular state machine replication methodology, in which every replica of an application starts in a consistent initial state (obtained by loading a checkpoint that was created by some other replica that itself was in a consistent state when it made that checkpoint) and then applies the same events in the same order. A majority-progress rule is used to avoid split-brain problems if the network partitions.
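A toy model makes the three ingredients concrete: identical events applied in identical order, a joining replica initialized from another replica's checkpoint, and a majority rule that blocks progress on the minority side of a partition. This is a single-process illustration with hypothetical names (`Replica`, `Group`), not Vsync's API, and it leaves out the real work of ordering messages and detecting failures over a network.

```python
class Replica:
    """A deterministic state machine: same events, same order, same state."""

    def __init__(self, checkpoint=None):
        self.state = dict(checkpoint or {})

    def apply(self, event):
        key, value = event
        self.state[key] = value

    def checkpoint(self):
        return dict(self.state)


class Group:
    """Replica group that only makes progress with a reachable majority."""

    def __init__(self, size):
        self.size = size
        self.replicas = [Replica() for _ in range(size)]
        self.reachable = set(range(size))

    def has_majority(self):
        return len(self.reachable) > self.size // 2

    def partition(self, i):
        """Simulate replica i becoming unreachable."""
        self.reachable.discard(i)

    def submit(self, event):
        """Apply the event at every reachable replica, or refuse without a majority."""
        if not self.has_majority():
            return False
        for i in sorted(self.reachable):
            self.replicas[i].apply(event)
        return True

    def rejoin(self, i):
        """Bring replica i back by loading a checkpoint from a live replica."""
        if not self.has_majority():
            raise RuntimeError("no majority available to supply a checkpoint")
        donor = self.replicas[min(self.reachable)]
        self.replicas[i] = Replica(donor.checkpoint())
        self.reachable.add(i)
```

In a three-replica group, losing one replica still allows `submit` to succeed, and the lost replica catches up through `rejoin`. Losing two leaves a minority that refuses updates, so two halves of a partitioned system can never diverge.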

Vsync isn't a magic wand: it takes the form of an open-source software library, coded in the Microsoft C# language but useful from any program that can run on a Microsoft platform, including C# but also languages such as C++/CLI, IronPython, IronRuby, standard C, F#, J#, etc. (.NET supports more than 40 languages and in principle, any could leverage Vsync). Through the Mono cross-compiler, Vsync can also be used on Linux platforms. In our development work on GridCloud, we made heavy use of Vsync, which endows CloudMake with its fault-tolerance and consistency guarantees, helps FFFS carry out consistent data replication at the blinding speeds feasible with modern RDMA networking hardware, and is integrated into the collaboration tool as its preferred data transport solution when a virtual iPad is being shared and receives data updates in realtime as the network state evolves or other data is captured and rendered onto the shared display.

But many GridCloud users would be completely unaware of Vsync, because users of technologies like these three aren't exposed directly to any of the Vsync APIs. Thus a developer who creates an HPC application for the smart grid and then wishes to port it to GridCloud could certainly employ Vsync to extend their solution with replication features, but could equally well ignore Vsync completely and simply load their application into our cloud environment and then program the needed control policies into CloudMake, which would then administer the solution for them. In such cases Vsync orchestrates the 24x7 management and availability needed in the cloud-hosted solution, yet the coding style used to create it remains completely standard: that HPC application can be created "at home" using any tools the developer is familiar with.

Vsync (coupled with the previous Isis2 downloads) has been downloaded more than 5000 times from https://vsync.codeplex.com, our web distribution site. Video instructional materials and extensive documentation can be found on that site.

Vsync Library: our (hidden) secret sauce